Most enterprise security programs are living with a quiet crisis beneath the surface. It is not about firewalls or phishing. It is about identity: thousands of non-human identities (NHIs) running silently across cloud environments, SaaS architectures, CI/CD pipelines, and production workloads with little or no central control.

Combine that with cryptographic assets (certificates, keys, and tokens scattered across hybrid infrastructure) and you have an attack surface that most organizations can barely see, let alone manage.

Discovery and inventory, the work of gaining visibility into AI agents and cryptographic assets, is no longer just a security best practice. Recent national-level policy guidance has made the point explicit: you cannot protect what you have not mapped.

This article breaks down why visibility is so difficult, what discovery targets actually look like, how modern tooling approaches the problem, and why post-quantum cryptography (PQC) migration makes all of it more urgent.


Why Visibility Is So Hard Right Now – And It’s Getting Harder

Multi-Cloud and Hybrid Environments Break Traditional Inventory Models

Most organizations' environments were not designed with NHIs in mind. They grew. One team deployed on AWS. Another moved to Azure. A third kept workloads on-prem and integrated three SaaS platforms. Within a few years, you have service accounts calling APIs across five clouds, and nobody has a clean map of what connects to what.

I have audited environments where a single service account held permissions across three cloud providers, and no one on the security team knew it existed until a scheduled audit flagged it. That is not unusual. It is almost the norm.

The problem is structural. NHIs (API keys, OAuth tokens, service accounts, machine certificates, workload identities) do not live in a single place. They are hardcoded into code commits, stored in CI/CD pipeline variables, baked into containerized microservices, and accumulate permissions that were never formally assigned through IAM but crept up over months.

Every AI agent added to a workflow brings its own identity layer: credentials for authorized data sources, keys for external API calls, and secrets for authenticating to downstream systems. These identities operate at machine speed, run 24/7, and rarely pass through the same review gates as human user accounts.

Secrets Are Hiding in Plain Sight

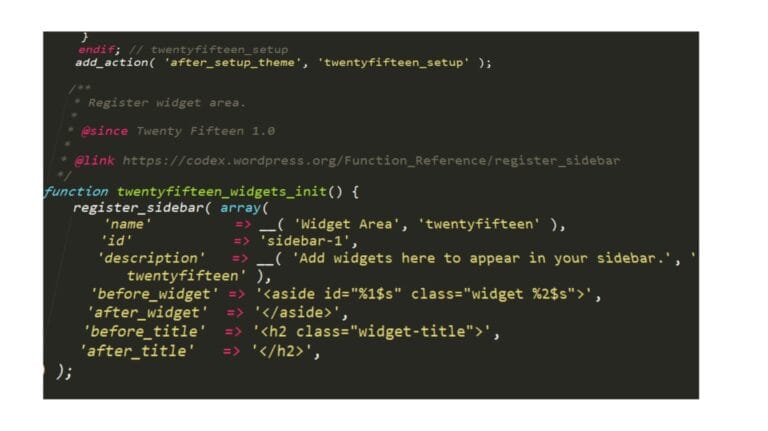

What complicates inventory in particular is that a large fraction of active secrets do not live in a secrets manager at all. Reviewing security posture across organizations of varying sizes, I have seen the same pattern again and again: hardcoded credentials embedded in application source code, API keys in environment variable files committed to internal repositories, and tokens in CI/CD pipeline configurations dating back years.

Security teams often do not notice these until a breach occurs, a developer accidentally pushes to a public repository, or a code scanner is run for the first time. At that point, finding a single exposed key is not the whole job. The real task is building a complete, continuously updated picture of every credential in every environment.

What You’re Actually Trying to Discover: NHIs and Cryptographic Assets

Non-Human Identities Across Every Layer

The discovery scope is broad. NHIs exist across:

- Cloud IAM: service accounts, workload identities, instance identities, and managed identities (AWS, Azure, GCP)

- SaaS application authentication: OAuth apps, API keys, and connected third-party applications

- CI/CD pipelines: pipeline and deployment tokens and environment secrets in tools such as GitHub Actions, GitLab, Jenkins, and CircleCI

- On-premise systems: Active Directory service accounts, scheduled task accounts, and LDAP bind accounts

- IoT and OT environments: device certificates, firmware signing keys, and industrial system authentication keys

- AI agents and automation: LLM-based agent identities, tool-use credentials, and RPA bot identities

Each of these has an access scope, an owner (often unknown), a purpose (often undocumented), and a risk profile that changes over time. Finding them is hard enough; mapping each NHI to its context is harder still. Who owns it? What can it access? When was it last rotated? Is it still in use, or is it an orphan that still holds live permissions?
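Several of those questions translate directly into automated checks. The sketch below assumes a hypothetical `NHIRecord` shape (owner, live permissions, last-used and last-rotated dates) populated by whatever discovery tools are in place, and uses 90-day thresholds purely as placeholders; real idle and rotation policies vary by organization.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class NHIRecord:
    """Hypothetical inventory record for one non-human identity."""
    name: str
    owner: Optional[str]        # None when nobody has claimed the identity
    permissions: list[str]      # live permissions currently granted
    last_used: Optional[date]   # None when no usage has ever been observed
    last_rotated: date


def triage(record: NHIRecord, today: date,
           max_idle_days: int = 90, max_key_age_days: int = 90) -> list[str]:
    """Answer the ownership, usage, and rotation questions for one NHI."""
    findings = []
    if record.owner is None:
        findings.append("no owner")
    idle = (record.last_used is None
            or today - record.last_used > timedelta(days=max_idle_days))
    if idle and record.permissions:
        # Unused but still privileged: the classic orphaned credential.
        findings.append("possible orphan with live permissions")
    if today - record.last_rotated > timedelta(days=max_key_age_days):
        findings.append("rotation overdue")
    return findings


legacy = NHIRecord("legacy-etl", None, ["s3:GetObject"],
                   last_used=date(2024, 1, 10), last_rotated=date(2023, 6, 1))
print(triage(legacy, today=date(2024, 12, 1)))
# ['no owner', 'possible orphan with live permissions', 'rotation overdue']
```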

The agent-specific side of this problem is explored in more depth in Identity Crisis – Securing Non-Human Identities to AI Agents, which looks closely at how agent-based architectures introduce new kinds of NHI risk that conventional IAM was not designed to handle.

Cryptographic Assets: The Algorithm Layer

Cryptographic asset discovery runs in parallel. Where NHI inventory asks who is authenticating, cryptographic discovery asks how: the algorithms, certificates, keys, and protocols that secure data in transit and at rest.

A cryptographic inventory must answer:

- Where are all the certificates, including TLS/SSL certificates, and when do they expire?

- Which encryption algorithms are used in applications, databases, and APIs?

- Are deprecated or weak ciphers still in use in production systems?

- Where are the keys, who owns them, and what rotation policy applies to them?

- Which cryptographic implementations are vulnerable to quantum attacks?
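Once inventory data exists, several of these questions reduce to lookups. The sketch below classifies hypothetical inventory entries (plain dictionaries with `id`, `algorithm`, and an optional `expires` date) against small, illustrative algorithm sets. It is an assumption-laden starting point, not a policy: a real one would distinguish key sizes, modes, and usage contexts.

```python
from datetime import date
from typing import Optional

# Illustrative sets only; not a complete or authoritative policy.
# Shor's algorithm breaks RSA and discrete-log schemes (DH, DSA, and elliptic-curve variants).
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA", "ED25519", "X25519"}
# Primitives generally considered deprecated or weak today.
DEPRECATED = {"MD5", "SHA-1", "RC4", "DES", "3DES"}
# Algorithms standardized by NIST in 2024 (FIPS 203, 204, 205).
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


def assess(asset: dict, today: date, warn_days: int = 30) -> list[str]:
    """Return flags for one inventory entry."""
    alg = asset["algorithm"].upper()
    flags = []
    if alg in DEPRECATED:
        flags.append("deprecated")
    if alg in QUANTUM_VULNERABLE:
        flags.append("quantum-vulnerable")
    if alg in POST_QUANTUM:
        flags.append("post-quantum")
    expires: Optional[date] = asset.get("expires")
    if expires is not None:
        days_left = (expires - today).days
        if days_left < 0:
            flags.append("expired")
        elif days_left <= warn_days:
            flags.append(f"expires in {days_left} days")
    return flags


inventory = [
    {"id": "api-gateway-cert", "algorithm": "RSA", "expires": date(2025, 1, 15)},
    {"id": "legacy-vpn", "algorithm": "3des"},
    {"id": "pqc-pilot", "algorithm": "ML-KEM"},
]
for asset in inventory:
    print(asset["id"], assess(asset, today=date(2025, 1, 1)))
# api-gateway-cert ['quantum-vulnerable', 'expires in 14 days']
# legacy-vpn ['deprecated']
# pqc-pilot ['post-quantum']
```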

In my experience, most organizations can partially answer some of these questions, typically for certificates issued by a recognized CA or managed through a commercial PKI product, but very few have a complete picture.

Shadow certificates, test certificates that developers created and then deployed, certificates generated automatically by tools, and the alphabet soup of algorithms embedded in third-party libraries all leave gaps that certificate-monitoring tools do not surface.

Discovery and Inventory: Gaining Visibility into AI Agents and Cryptographic Assets

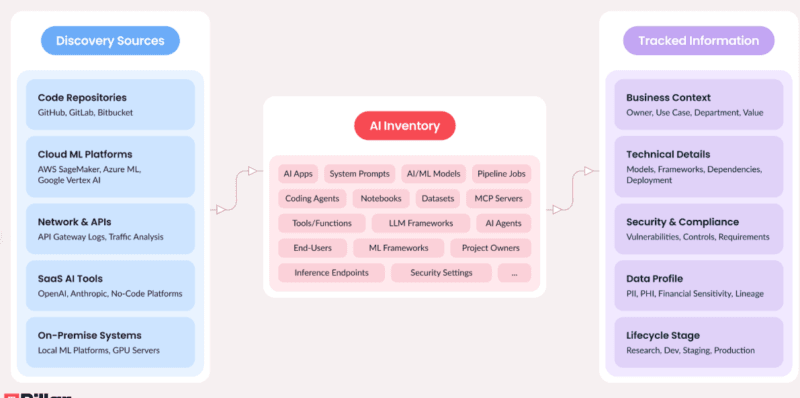

Automated Discovery: The Tool Stack

Manual inventory does not scale. The approach organizations are converging on is layered, automated discovery:

IAM and CIEM tools: Cloud Infrastructure Entitlement Management tools (such as Wiz, Ermetic, or Sonrai) scan IAM configurations to reveal every identity, human and non-human, along with its permissions and usage patterns. They can flag over-privileged service accounts, unused credentials, and unintended cross-account access.
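At its core, over-privilege analysis compares what an identity is granted with what it is observed using. A minimal sketch, assuming granted permissions have already been extracted from IAM policies and used permissions from access logs (both inputs are hypothetical here). Real CIEM tools also expand wildcards, weigh resource scope and conditions, and choose an observation window; this plain set difference does none of that.

```python
def least_privilege_report(granted: dict[str, set[str]],
                           used: dict[str, set[str]]) -> dict[str, tuple[str, list[str]]]:
    """Compare granted vs. observed permissions for each identity."""
    report = {}
    for identity, perms in granted.items():
        observed = used.get(identity, set())
        excess = perms - observed
        if not observed:
            # Never seen acting at all: a candidate for removal.
            report[identity] = ("unused identity", sorted(perms))
        elif excess:
            # Active, but holding permissions it never exercises.
            report[identity] = ("over-privileged", sorted(excess))
    return report


granted = {
    "etl-svc": {"s3:GetObject", "s3:PutObject", "s3:DeleteBucket"},
    "old-bot": {"ec2:*"},
    "reader": {"s3:GetObject"},
}
used = {
    "etl-svc": {"s3:GetObject", "s3:PutObject"},
    "reader": {"s3:GetObject"},
}
print(least_privilege_report(granted, used))
# {'etl-svc': ('over-privileged', ['s3:DeleteBucket']), 'old-bot': ('unused identity', ['ec2:*'])}
```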

Secrets scanners: these scan source code, CI/CD configurations, and commit history for exposed secrets. Tools such as TruffleHog, Gitleaks, and Semgrep can do this, and commercial versions integrate into developer workflows to catch exposures before they reach production.
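Secrets scanners typically combine pattern rules for known token formats with an entropy heuristic for generic-looking secrets. The sketch below is a simplified illustration of that general idea, not how TruffleHog, Gitleaks, or Semgrep are implemented. The two patterns follow the publicly documented shapes of AWS access key IDs and GitHub classic personal access tokens; the assignment regex and the 3.5 bits-per-character threshold are arbitrary assumptions.

```python
import math
import re

# Two well-known token shapes; production scanners ship hundreds of rules.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "github_classic_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
}
# Heuristic: a secret-sounding name assigned a value of 12+ characters.
ASSIGNMENT = re.compile(r"(?i)\b(api_key|secret|token|password)\s*[:=]\s*['\"]?([^'\"\s]{12,})")


def shannon_entropy(s: str) -> float:
    """Bits per character; random-looking strings score higher than words."""
    counts = {c: s.count(c) for c in set(s)}
    return -sum(n / len(s) * math.log2(n / len(s)) for n in counts.values())


def scan_text(text: str, min_entropy: float = 3.5) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for each suspected secret."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
        m = ASSIGNMENT.search(line)
        if m and shannon_entropy(m.group(2)) >= min_entropy:
            hits.append((lineno, "high_entropy_assignment"))
    return hits


sample = (
    'aws_key = "AKIAIOSFODNN7EXAMPLE"\n'   # AWS's documented example key ID
    'token = "passwordpassword"\n'          # long but low-entropy: not flagged
    'api_key = "q8Zr2LmX9vTb4NwK7pYs"\n'
)
print(scan_text(sample))
# [(1, 'aws_access_key_id'), (3, 'high_entropy_assignment')]
```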

CSPM (Cloud Security Posture Management): CSPM solutions maintain visibility into cloud resource configurations and flag misconfigurations that expose credentials or grant unauthorized access to service identities.

Network and passive monitoring: traffic analysis can reveal undocumented API usage, identify services listening on unusual ports, and expose cryptographic protocol problems such as insecure TLS versions or weak cipher suites.
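Passive TLS observations are usually post-processed with simple rules. A minimal sketch, assuming a hypothetical record format (host, port, negotiated protocol version, cipher suite name) exported from whatever sensor is in use. The weak-protocol set, cipher markers, and expected-port list are illustrative placeholders, not a compliance baseline.

```python
WEAK_PROTOCOLS = {"SSLv3", "TLSv1", "TLSv1.1"}
WEAK_CIPHER_MARKERS = ("RC4", "3DES", "DES-CBC", "NULL", "EXPORT")
EXPECTED_TLS_PORTS = {443, 8443}   # hypothetical: ports where TLS is documented


def flag_sessions(sessions: list[dict]) -> list[tuple[str, int, list[str]]]:
    """Return (host, port, issues) for each observed session with problems."""
    findings = []
    for s in sessions:
        issues = []
        if s["version"] in WEAK_PROTOCOLS:
            issues.append(f"weak protocol {s['version']}")
        if any(marker in s["cipher"].upper() for marker in WEAK_CIPHER_MARKERS):
            issues.append(f"weak cipher {s['cipher']}")
        if s["port"] not in EXPECTED_TLS_PORTS:
            issues.append(f"TLS on unexpected port {s['port']}")
        if issues:
            findings.append((s["host"], s["port"], issues))
    return findings


observed = [
    {"host": "10.0.4.12", "port": 443, "version": "TLSv1.3",
     "cipher": "TLS_AES_128_GCM_SHA256"},
    {"host": "10.0.7.3", "port": 9443, "version": "TLSv1",
     "cipher": "TLS_RSA_WITH_3DES_EDE_CBC_SHA"},
]
for finding in flag_sessions(observed):
    print(finding)
```

Only the second session is reported, with all three issues; the first negotiates TLS 1.3 on a documented port.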

Code and SBOM scanning: Software Bill of Materials (SBOM) tools and static analysis can find the cryptographic libraries in use, flag vulnerable algorithm implementations, and provide a software-level view of how cryptography is applied.
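A first pass at SBOM-based crypto discovery can be as simple as walking the component list and matching names against a watchlist of cryptographic libraries. The sketch assumes a CycloneDX-style JSON SBOM, where components carry `name` and `version` and may nest further `components`. The watchlist is a hypothetical, deliberately short example, and name matching alone will miss vendored or statically linked code.

```python
import json
from typing import Iterator

# Hypothetical, deliberately short watchlist (compared case-insensitively).
CRYPTO_LIBRARIES = {"openssl", "boringssl", "libressl", "wolfssl", "libsodium",
                    "libgcrypt", "bcprov-jdk18on", "cryptography", "pycryptodome"}


def walk(components: list[dict]) -> Iterator[dict]:
    """Yield every component, including ones nested under other components."""
    for comp in components:
        yield comp
        yield from walk(comp.get("components", []))


def crypto_components(sbom_json: str) -> list[tuple[str, str]]:
    """Return (name, version) for each component on the watchlist."""
    sbom = json.loads(sbom_json)
    return [(c["name"], c.get("version", "unknown"))
            for c in walk(sbom.get("components", []))
            if c.get("name", "").lower() in CRYPTO_LIBRARIES]


sbom = json.dumps({
    "bomFormat": "CycloneDX",
    "specVersion": "1.5",
    "components": [
        {"type": "library", "name": "requests", "version": "2.32.3"},
        {"type": "library", "name": "cryptography", "version": "42.0.5"},
        {"type": "application", "name": "billing-api", "components": [
            {"type": "library", "name": "OpenSSL", "version": "1.1.1w"},
        ]},
    ],
})
print(crypto_components(sbom))
# [('cryptography', '42.0.5'), ('OpenSSL', '1.1.1w')]
```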

NHI-specific platforms: newer tools such as Astrix, Entro, and Oasis Security go a step further by building identity graphs that link each NHI to its access paths, owners, creation context, and the systems it serves.

This kind of tooling has proved useful in proof-of-concept deployments, especially where the graph view matters: instead of a flat list of credentials, it produces an explorable map of relationships and risk.
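What an identity graph adds over a flat list is that access paths become queryable. A minimal sketch, assuming a hypothetical adjacency map in which an edge means "this identity or resource can authenticate to, assume, or read the next one": a breadth-first search returns everything an NHI can ultimately reach, along with one shortest path to each node. Commercial platforms model far richer relationships; this only illustrates why a graph answers questions a list cannot.

```python
from collections import deque


def reachable(graph: dict[str, list[str]], start: str) -> dict[str, list[str]]:
    """Breadth-first search: every node reachable from `start`, with one shortest path."""
    paths = {start: [start]}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in paths:              # first visit in BFS = shortest path
                paths[nxt] = paths[node] + [nxt]
                queue.append(nxt)
    return paths


# Hypothetical edges: "X can authenticate to, assume, or read Y".
graph = {
    "github-actions-token": ["deploy-role"],
    "deploy-role": ["prod-k8s", "secrets-vault"],
    "secrets-vault": ["payments-db-cred"],
    "payments-db-cred": ["payments-db"],
}
paths = reachable(graph, "github-actions-token")
print(" -> ".join(paths["payments-db"]))
# github-actions-token -> deploy-role -> secrets-vault -> payments-db-cred -> payments-db
```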

Mapping NHIs to Context: The Four-Field Framework

Discovery without context is just a list. The operational goal is to map every NHI against four fields:

- Owner: Who is responsible for this identity? Which team, system, or person?

- Purpose: What is it used for? What workflow does it enable?

- Environment: Where does it run? On-prem or cloud; prod, staging, or dev?

- Access scope: What permissions does it hold? Which data or systems can it access?

Without these four fields, a security team cannot make informed risk decisions. Broad S3 read permissions on a service account might be entirely appropriate for an active data pipeline, or they might belong to a forgotten credential from a retired tool. Only context tells the two apart.
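One way to enforce the four fields is to make them explicit in the inventory schema and report gaps. The `NHIContext` class below is a hypothetical sketch; its two instances mirror the S3 example above, where identical read permissions look very different once context is filled in (or conspicuously missing).

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class NHIContext:
    """Hypothetical four-field context record for one NHI."""
    identity: str
    owner: Optional[str] = None
    purpose: Optional[str] = None
    environment: Optional[str] = None          # e.g. "prod", "staging", "on-prem"
    access_scope: Optional[list[str]] = None   # permissions or resources reachable

    def missing_fields(self) -> list[str]:
        """Names of context fields that are still empty."""
        return [f.name for f in fields(self)
                if f.name != "identity" and not getattr(self, f.name)]


pipeline = NHIContext("svc-analytics-reader", owner="data-eng",
                      purpose="nightly ETL into the warehouse", environment="prod",
                      access_scope=["s3:GetObject on analytics-*"])
mystery = NHIContext("svc-legacy-reader", access_scope=["s3:GetObject on *"])
print(pipeline.missing_fields())  # []
print(mystery.missing_fields())   # ['owner', 'purpose', 'environment']
```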

Cryptographic Discovery Platforms

Specialized cryptographic asset discovery and inventory (CADI) systems, such as those from Keyfactor, SandboxAQ's AQtive Guard, or research initiatives like TNO's CADI work, go deeper, down to the algorithms used inside software.

These systems combine network scanning, API integrations, and passive monitoring to build a Cryptographic Bill of Materials (CBOM): a structured catalog of every active cryptographic asset, recording where it lives, which algorithm it uses, its key length, its validity period, and its compliance status.

CBOM is increasingly discussed the way SBOM is: as a structured artefact that organizations should maintain continuously rather than produce only during audits.
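To make the CBOM idea concrete, the sketch below builds a minimal CBOM-style JSON document from records carrying the attributes listed above (location, algorithm, key length, validity, compliance). The field names and the key-length rule are hypothetical. Standardized formats such as CycloneDX 1.6 define their own schema for cryptographic assets, and a real compliance check would follow the organization's own policy.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date

# Hypothetical internal minimum key lengths, in bits.
MIN_KEY_BITS = {"RSA": 2048, "DH": 2048, "ECDSA": 256}


def policy_status(algorithm: str, key_length_bits: int) -> str:
    """Classify an asset against the hypothetical key-length policy."""
    minimum = MIN_KEY_BITS.get(algorithm)
    if minimum is None:
        return "unreviewed"
    return "compliant" if key_length_bits >= minimum else "non-compliant"


@dataclass
class CBOMEntry:
    asset_id: str
    location: str
    asset_type: str          # e.g. "certificate", "key", "protocol"
    algorithm: str
    key_length_bits: int
    valid_until: date
    compliance: str = field(init=False)

    def __post_init__(self) -> None:
        self.compliance = policy_status(self.algorithm, self.key_length_bits)


def to_cbom(entries: list[CBOMEntry]) -> str:
    """Serialize entries; default=str renders dates as ISO 8601 strings."""
    doc = {"format": "cbom-sketch", "assets": [asdict(e) for e in entries]}
    return json.dumps(doc, indent=2, default=str)


entries = [
    CBOMEntry("tls-api-gw", "lb-prod-01:443", "certificate", "RSA", 2048, date(2026, 3, 31)),
    CBOMEntry("sftp-host-key", "sftp-01", "key", "RSA", 1024, date(2025, 12, 31)),
]
print(to_cbom(entries))
```

Regenerating this document on every deployment, rather than at audit time, is what keeps it a living inventory.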

Policy Is Catching Up – And It’s Moving Fast

Executive Orders and the NHI Requirement

The policy environment has changed significantly in recent years. U.S. executive-level cybersecurity directives have pushed federal agencies (and, by extension, their contractors and supply chain partners) toward continuous asset visibility as a baseline requirement.

The NIST AI Risk Management Framework (AI RMF) treats inventory as a governance function, calling on organizations that use AI systems to keep records of their AI components, including the identities and integrations those systems rely on.

For cryptographic assets specifically, NIST's ongoing migration guidance treats cryptographic inventory not as optional but as a mandatory prerequisite for any PQC transition. You cannot migrate algorithms you have not catalogued.

PQC Migration: Why Inventory Is Step Zero

The most immediate driver of cryptographic discovery today is post-quantum cryptography migration. Many of the algorithms underpinning the modern internet, including RSA, ECC, and Diffie-Hellman, are theoretically vulnerable to a sufficiently powerful quantum computer. In 2024 NIST finalized its first set of post-quantum cryptographic standards (FIPS 203, 204, and 205), and federal agencies were directed to begin migration planning.

Migration cannot happen without visibility. Organizations need to know precisely which systems run which algorithms, which certificates and key-exchange mechanisms are quantum-vulnerable, and where cryptographic logic is hardcoded. That is a CBOM problem, and it requires the discovery methodology described above.

In my early work with organizations on PQC planning, I have seen the same bottleneck repeatedly: teams understand the urgency but cannot begin prioritizing because they lack a complete cryptographic inventory. Discovery is not just one phase of preparation; it is the rate-limiting one.
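Once an inventory exists, a common first-cut prioritization heuristic is Mosca's inequality: if x (how long data must stay confidential) plus y (how long migration will take) exceeds z (the time until a cryptographically relevant quantum computer exists), the system is already at risk. The sketch applies it to hypothetical CBOM-derived records. Note that z is an assumption you must choose, not a known quantity; the 10-year value here is purely illustrative.

```python
from dataclasses import dataclass


@dataclass
class System:
    name: str
    quantum_vulnerable: bool   # from the CBOM: relies on RSA, ECC, or DH
    shelf_life_years: float    # x: how long its data must remain confidential
    migration_years: float     # y: estimated effort to migrate it


def mosca_priority(systems: list[System], years_to_crqc: float) -> list[tuple[str, float]]:
    """Rank vulnerable systems by how far x + y exceeds z (Mosca's inequality)."""
    margins = [(s.name, s.shelf_life_years + s.migration_years - years_to_crqc)
               for s in systems if s.quantum_vulnerable]
    at_risk = [m for m in margins if m[1] > 0]
    return sorted(at_risk, key=lambda m: m[1], reverse=True)


systems = [
    System("patient-records", True, 25.0, 3.0),
    System("marketing-site", True, 1.0, 1.0),
    System("payments-api", True, 7.0, 4.0),
    System("internal-wiki", False, 5.0, 1.0),
]
print(mosca_priority(systems, years_to_crqc=10.0))
# [('patient-records', 18.0), ('payments-api', 1.0)]
```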

What’s Just Beginning: The Emerging Capability Layer

AI Agent Identity Graphs

AI agent NHI management is currently the least mature area. Every new agent deployed, whether a customer support agent, code generator, data analyzer, or workflow automator, adds a new identity layer.

Agents call APIs, query databases, read and write files, and authenticate to downstream services. They are frequently issued credentials provisioned in haste, without the lifecycle management that human user accounts receive.

Tooling built specifically for this space, such as Astrix's AI Agent Discovery module and Oasis Security's NHI management platform, is moving toward real-time inventories of agent identities: which parts of the infrastructure an agent can reach, what credentials it holds, how it was provisioned, and whether those credentials have been rotated and scoped appropriately.

This is an area to watch closely. AI agents operating at machine speed with broadly scoped NHI credentials create a risk surface that most existing security programs are not equipped to monitor.

CAASM + NHI Convergence

Cyber Asset Attack Surface Management (CAASM) platforms are starting to converge with NHI-specific tooling. The logical end state is a single asset inventory covering human identities, non-human identities, cryptographic assets, software components (SBOM), and AI systems, all in one continuously updated graph.

That convergence is not quite here yet. Most organizations still use separate tools for IAM, CSPM, secrets management, certificate management, and NHI visibility. The direction is clear, however: a unified, persistent, context-aware inventory of every identity type.

Two External Resources Worth Bookmarking

Two external sources are particularly useful for readers building practical programs or deepening their knowledge:

- NIST SP 800-57 Part 1 (Recommendation for Key Management): the foundational reference for cryptographic key management policy and practice, directly relevant to building a cryptographic inventory program. Link: https://csrc.nist.gov/publications/detail/sp/800-57-part-1/rev-5/final

- TNO Cryptographic Asset Discovery and Inventory (CADI) Report: a comprehensive technical report on the current state of cryptographic discovery tooling and methodology, including CBOM frameworks. Link: https://publications.tno.nl/publication/34645425/j6EewK7a/TNO-2025-P11921-GB.pdf

Where Things Stand and What Comes Next

For most organizations, full visibility into AI agents and cryptographic assets remains more aspiration than reality. The tools are maturing. The policy pressure is real. The PQC timeline is approaching.

Still, the gap between what organizations are expected to see and what they can actually see remains wide.

In practice, the starting point is always the same: build the inventory before you need it. Map NHIs to owners and access scopes. Build a CBOM before your PQC planning stalls because you do not know which algorithms you are running.

Make discovery part of CI/CD so that new credentials cannot slip through unnoticed.
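One way to wire discovery into CI/CD is a gate that compares the credentials a scan step found against an approved baseline and fails the build on anything unregistered. A minimal sketch, assuming a hypothetical baseline of truncated SHA-256 fingerprints (so raw secrets are never stored) and a list of discovered values supplied by an earlier scan step. A pipeline would call `sys.exit(ci_gate(...))` so that a non-zero result blocks the merge.

```python
import hashlib
import sys


def fingerprint(secret: str) -> str:
    """Truncated SHA-256 digest, so the baseline never holds raw secrets."""
    return hashlib.sha256(secret.encode("utf-8")).hexdigest()[:16]


def unregistered(discovered: list[str], baseline: set[str]) -> list[str]:
    """Fingerprints of discovered credentials missing from the baseline."""
    return [fp for fp in (fingerprint(s) for s in discovered) if fp not in baseline]


def ci_gate(discovered: list[str], baseline: set[str]) -> int:
    """Return a process exit code: 0 when clean, 1 when new credentials appear."""
    new = unregistered(discovered, baseline)
    for fp in new:
        print(f"unregistered credential {fp}: register it with an owner before merging",
              file=sys.stderr)
    return 1 if new else 0


baseline = {fingerprint("known-deploy-token")}
print(ci_gate(["known-deploy-token"], baseline))                   # 0
print(ci_gate(["known-deploy-token", "brand-new-key"], baseline))  # 1
```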

For anyone responsible for AI-integrated environments in particular, the identity side of this problem will only grow. Agents will multiply. Their credential footprint will expand. Organizations that have already achieved continuous NHI and cryptographic visibility will be in a fundamentally different risk position from those that have not.

The visibility gap can be closed.

But closing it requires treating discovery and inventory as an ongoing operational practice rather than a one-off project.

I'm a technology writer with a passion for AI and digital marketing. I create engaging, useful content that bridges the gap between complex technology concepts and everyday digital practice. My goal is to make technical topics accessible, spark curiosity, and encourage participation, and I keep researching innovation and technology. Let's connect and talk technology!