Quantum computers are not breaking encryption today. But the protocols and certificates being issued now may still be in use when they can. That uncomfortable fact is why NIST spent almost ten years running one of the most closely watched cryptography competitions in history, and why the standards the agency published in 2024 matter to firmware engineers and enterprise architects alike.

This article breaks down the NIST PQC process, explains the real difference between KEMs and signatures, and covers the practical limitations you will run into when deploying these algorithms. No deep math required.

What NIST Actually Did and Why It Took So Long

The Competition in Brief

In 2016, NIST launched an open call for post-quantum cryptography algorithms. The idea was simple: identify replacements for RSA and elliptic-curve cryptography that would resist both classical and quantum attacks. Research teams from all over the world submitted proposals, with 69 candidates in the first round alone.

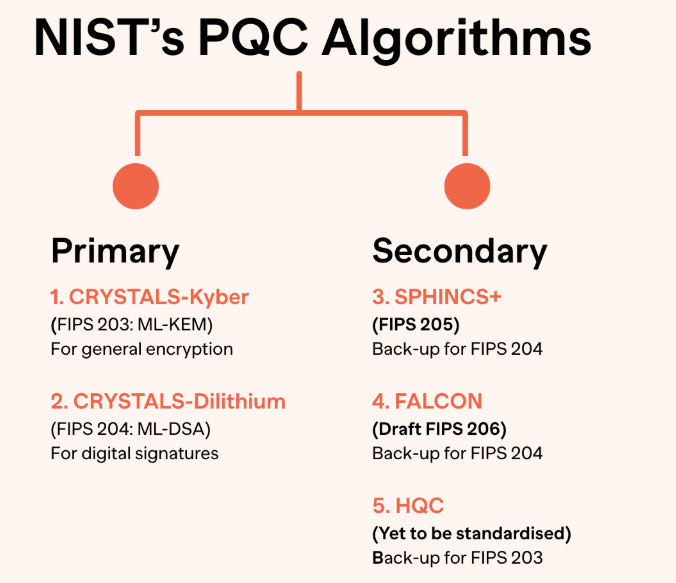

After multiple rounds of public review, cryptanalysis, and community comment, NIST published its first finalized standards in August 2024. Three algorithms made it to the finish line:

- ML-KEM (FIPS 203) – a key encapsulation mechanism based on the module-lattice problem.

- ML-DSA (FIPS 204) – a lattice-based digital signature scheme.

- SLH-DSA (FIPS 205) – a hash-based signature scheme that is slower but rests on conservative security assumptions.

Two more are still in the pipeline: FN-DSA (a compact lattice signature based on Falcon) and HQC (a code-based KEM that serves as a backup to ML-KEM). The process is not finished; it is entering a new phase.

These algorithms are designed to slot into protocols that already depend on RSA and ECC: TLS, VPNs, secure email, code signing, X.509 certificates, and S/MIME. For critical infrastructure, the transition is not a question of if, but of when.

NIST Post-Quantum Standards Explained: KEMs and Signatures Are Not the Same Problem

This is where much of the early coverage gets confused. KEMs and signatures solve different problems, and replacing them requires knowing which role each one plays in your protocol stack.

What a KEM Does

A Key Encapsulation Mechanism handles key establishment: the handshake stage in which two parties agree on a shared secret without ever sending that secret directly over the wire. Classically, this is done with RSA key transport or an ECDH key exchange.

ML-KEM replaces that. One party generates a public/private key pair. The other uses the public key to encapsulate a randomly generated shared secret, producing a ciphertext. The first party decapsulates the ciphertext with its private key to recover the same secret. The secret itself never crosses the wire.

Where you will find it: TLS 1.3 key exchange, IPsec/IKEv2, SSH, and any protocol that negotiates session keys.
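The keygen, encapsulate, decapsulate flow is easier to see as code. What follows is a minimal sketch of the call pattern only: it uses a toy Diffie-Hellman construction over a prime field as a stand-in, so it is not ML-KEM and it is not secure. The function names `keygen`, `encaps`, and `decaps` and the parameters `P` and `G` are illustrative assumptions, not any library's API.

```python
import hashlib
import secrets

# Toy parameters for illustration only. This is NOT ML-KEM and NOT secure;
# it only mirrors the keygen / encaps / decaps call pattern of a KEM.
P = 2**255 - 19  # a known prime, used here as a toy modulus
G = 2            # toy generator


def _kdf(value):
    """Hash the raw group element down to a 32-byte shared secret."""
    return hashlib.sha256(value.to_bytes(32, "big")).digest()


def keygen():
    """Receiver: create a (public, private) key pair."""
    sk = secrets.randbelow(P - 2) + 1
    pk = pow(G, sk, P)
    return pk, sk


def encaps(pk):
    """Sender: produce a ciphertext plus a fresh shared secret."""
    eph = secrets.randbelow(P - 2) + 1
    ciphertext = pow(G, eph, P)
    return ciphertext, _kdf(pow(pk, eph, P))


def decaps(sk, ciphertext):
    """Receiver: recover the same shared secret from the ciphertext."""
    return _kdf(pow(ciphertext, sk, P))


pk, sk = keygen()
ct, sender_secret = encaps(pk)
receiver_secret = decaps(sk, ct)
assert sender_secret == receiver_secret  # both sides now hold the same key
```

Only `pk` and `ct` travel over the wire. A real ML-KEM binding should expose the same three operations, just with much larger keys and ciphertexts.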

What a Signature Scheme Does

Signatures handle authentication and integrity: proving that a message, certificate, or piece of code came from who it claims to come from and has not been tampered with. Today this job is done by RSA (PKCS #1) and ECDSA.

ML-DSA and SLH-DSA replace them. A signer produces a signature over a message using their private key. Anyone holding the corresponding public key can verify it. The underlying math is completely different from RSA, but the protocol flow maps cleanly onto formats such as X.509 and S/MIME.

Where you will find it: code signing pipelines, TLS certificate chains, document signing, firmware authentication, and secure boot.
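To make the sign and verify roles concrete, here is a small sketch using a Lamport one-time signature, a classic hash-based scheme that fits in a few lines of standard-library Python. It is a teaching stand-in, not SLH-DSA or ML-DSA: a Lamport key can safely sign only one message, and the function names are my own.

```python
import hashlib
import secrets


def _h(data):
    return hashlib.sha256(data).digest()


def _bits(message):
    """The 256 bits of SHA-256(message), most significant bit first."""
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def keygen():
    """Private key: 256 pairs of random values. Public key: their hashes."""
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(_h(a), _h(b)) for a, b in sk]
    return pk, sk


def sign(sk, message):
    """Reveal one secret value per message-digest bit."""
    return [sk[i][bit] for i, bit in enumerate(_bits(message))]


def verify(pk, message, signature):
    """Hash each revealed value and compare it with the public key."""
    if len(signature) != 256:
        return False
    return all(
        _h(part) == pk[i][bit]
        for i, (bit, part) in enumerate(zip(_bits(message), signature))
    )


pk, sk = keygen()
msg = b"firmware-v1.2.3"
sig = sign(sk, msg)
assert verify(pk, msg, sig)                      # anyone with pk can check
assert not verify(pk, b"firmware-v6.6.6", sig)   # a different message fails
print(sum(len(part) for part in sig), "signature bytes")
```

Even this toy produces an 8,192-byte signature, which gives an early hint of why hash-based schemes trade size for conservative assumptions.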

Why Both Matter Together

A TLS connection uses both a KEM (to establish the session key) and signatures (to verify the authenticity of the server certificate). Migrating one but not the other leaves half the chain exposed. That is one reason Post-Quantum Cryptography Migration planning is more complicated than changing a cipher suite setting.

The Trade-Offs Nobody Warned You About

I have read more than enough migration documentation to put it in a nutshell: on paper the numbers look fine, but once you start testing with real stacks, things begin to go wrong. Here’s what actually shows up.

Key and Ciphertext Sizes

This is the most immediate point of friction. Compare these rough figures:

| Algorithm | Public Key | Ciphertext / Signature |
|---|---|---|
| RSA-2048 | ~256 bytes | ~256 bytes |
| ECDH P-256 | ~64 bytes | ~64 bytes |
| ML-KEM-768 | ~1,184 bytes | ~1,088 bytes |
| ML-DSA-65 | ~1,952 bytes | ~3,309 bytes |
| SLH-DSA-128s | ~32 bytes | ~7,856 bytes |

ML-KEM is manageable. ML-DSA-65 signatures are roughly 13 times larger than RSA-2048 signatures, and about 50 times larger than a 64-byte ECDSA P-256 signature. SLH-DSA signatures are enormous: the conservative, hash-based security assumption comes at a real cost.

In TLS handshakes, these larger signatures and certificate chains add latency. In constrained protocols with small, fixed message sizes (DTLS, CoAP, Zigbee), they can break things completely. In my experience, this is where lab testing starts to diverge sharply from theoretical expectations.
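The ratios are easy to check from the table. A quick sketch, assuming the approximate byte counts above and a 64-byte raw ECDSA P-256 signature (the table lists P-256 under ECDH, so that figure is my assumption):

```python
# Approximate signature sizes in bytes, taken from the table above.
# The 64-byte ECDSA P-256 figure is an assumption (raw r || s encoding).
SIGNATURE_BYTES = {
    "RSA-2048": 256,
    "ECDSA P-256": 64,
    "ML-DSA-65": 3309,
    "SLH-DSA-128s": 7856,
}


def ratio(larger, smaller):
    """How many times bigger one scheme's signature is than another's."""
    return SIGNATURE_BYTES[larger] / SIGNATURE_BYTES[smaller]


for scheme in ("ML-DSA-65", "SLH-DSA-128s"):
    for baseline in ("RSA-2048", "ECDSA P-256"):
        print(f"{scheme} vs {baseline}: {ratio(scheme, baseline):.1f}x")
```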
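A back-of-the-envelope estimate shows where the latency comes from. This sketch counts only public keys and signatures in a hypothetical two-certificate chain plus one handshake signature, and assumes 1,460 bytes of payload per TCP segment. Real certificates carry more fields, so treat the result as a lower bound rather than a measurement.

```python
import math

MSS = 1460  # assumed TCP payload bytes per segment


def auth_bytes(public_key, signature, certs_in_chain=2):
    """Keys and signatures only: each cert carries one of each,
    plus one extra signature over the handshake itself."""
    return certs_in_chain * (public_key + signature) + signature


def segments(n_bytes):
    """Minimum number of TCP segments needed to carry n_bytes."""
    return math.ceil(n_bytes / MSS)


for name, pk, sig in [("ECDSA P-256", 64, 64), ("ML-DSA-65", 1952, 3309)]:
    total = auth_bytes(pk, sig)
    print(f"{name}: ~{total} bytes, at least {segments(total)} segment(s)")
```

Under these assumptions the authentication data grows from a few hundred bytes to well over ten kilobytes, which is enough to spill past an initial congestion window on some paths.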

CPU and Memory Overhead

Lattice-based cryptography is also computationally more expensive than ECC on devices without hardware support. ML-KEM and ML-DSA perform relatively well on current server hardware, where AVX2-optimized implementations are available, and NXP's post-quantum whitepaper reports reasonable performance on Cortex-A class processors.

The picture changes on constrained devices. Microcontrollers with 64 KB of RAM, no FPU, and no hardware crypto acceleration have real problems. SLH-DSA signatures are large and need storage and bandwidth that many embedded systems simply lack. I noticed this especially in papers on upgrading IoT firmware update pipelines: the assumption that simply swapping the signature scheme is enough fails quickly.

Hybrid Designs: The Transition Bridge

Because confidence in new algorithms builds gradually, most realistic deployments will use hybrid schemes, in which classical algorithms run alongside post-quantum ones. One example is X25519 + ML-KEM-768, which is classically secure today and quantum-secure in the future.

In some cases this doubles the key material and adds handshake complexity. Even so, it is the recommended practice during the transition period, especially where long-lived certificates and infrastructure are involved. NIST's migration guidance explicitly covers hybrid operation.
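The core idea of a hybrid key exchange is that the session key depends on both shared secrets, so an attacker has to break both. A minimal sketch, assuming a generic concatenate-then-KDF combiner built on HKDF-SHA256 (RFC 5869). The random byte strings stand in for real X25519 and ML-KEM-768 outputs, and the `combine` helper and its `info` label are hypothetical, not the exact construction any TLS draft specifies.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(ikm, salt=b"", info=b"", length=32):
    """HKDF (RFC 5869) with SHA-256: extract, then expand."""
    prk = hmac.new(salt or b"\x00" * 32, ikm, hashlib.sha256).digest()
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(
            prk, block + info + bytes([counter]), hashlib.sha256
        ).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine(classical_secret, pq_secret):
    """Derive one session key from both secrets (hypothetical helper)."""
    return hkdf_sha256(classical_secret + pq_secret, info=b"hybrid-demo")


# Placeholders: in a real handshake these come from X25519 and ML-KEM-768.
classical = secrets.token_bytes(32)
post_quantum = secrets.token_bytes(32)

session_key = combine(classical, post_quantum)
print(len(session_key), "byte session key")
```

Changing either input changes the derived key, which is the property that lets the classical half cover for the post-quantum half and vice versa.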

What’s Still Being Evaluated and Why It Matters

FN-DSA (Falcon)

FN-DSA produces much smaller signatures than ML-DSA, but its signing algorithm relies on floating-point arithmetic, which is hard to implement consistently and safely across hardware. Side-channel resistance is harder to guarantee. It is moving slowly toward standardization as FIPS 206, though deployment guidance has yet to catch up.

HQC – The Backup KEM

HQC (Hamming Quasi-Cyclic) comes from a different mathematical family: it is code-based rather than lattice-based. It is being standardized specifically as a hedge. If a breakthrough in lattice cryptanalysis ever undermines ML-KEM, HQC offers an independent fallback. It has larger keys than ML-KEM and is best seen as portfolio insurance rather than a default choice.

Deployment Reality: What Architects and Engineers Are Actually Facing

Protocol-Level Integration

The IETF has been working on hybrid key exchange for TLS 1.3, and browser vendors have already started testing ML-KEM on real traffic. In 2023, Chrome and Cloudflare rolled out early deployments of Kyber (the original name of ML-KEM). These were not lab experiments but large-scale tests on production traffic.

For most enterprise stacks, the realistic path looks like this:

- Inventory – list every use of RSA/ECC across your certificate authorities, TLS termination gateways, VPN gateways, and signing infrastructure.

- Evaluate – determine which systems can take software updates and which have to be replaced.

- Test – run ML-KEM and ML-DSA against your protocols in a lab environment.

- Hybrid first – deploy classical and PQC together in hybrid mode before switching over.

- Monitor – watch for interoperability failures with third-party systems that have not yet migrated.

This kind of structured lab migration for enterprise and government environments is exactly what the NCCoE (National Cybersecurity Center of Excellence) is carrying out. Their project outputs are worth following as a real-world reference.
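The inventory and evaluate steps from the list above lend themselves to a simple script. Here is a rough sketch, assuming you can export your assets with an algorithm label and an "updatable" flag. The asset names, the `Asset` fields, and the `triage` helper are all made up for illustration.

```python
from dataclasses import dataclass

# Algorithm families that a large quantum computer would break.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH"}


@dataclass
class Asset:
    name: str
    algorithm: str            # e.g. "RSA-2048", "ECDH-P256", "ML-KEM-768"
    software_updatable: bool  # can it be migrated without new hardware?


def triage(assets):
    """Sort assets into: already fine, update in place, or replace."""
    plan = {"update": [], "replace": [], "ok": []}
    for asset in assets:
        family = asset.algorithm.split("-")[0].upper()
        if family not in QUANTUM_VULNERABLE:
            plan["ok"].append(asset.name)
        elif asset.software_updatable:
            plan["update"].append(asset.name)
        else:
            plan["replace"].append(asset.name)
    return plan


# Hypothetical inventory for illustration.
inventory = [
    Asset("internal-ca", "RSA-2048", True),
    Asset("vpn-gateway", "ECDH-P256", True),
    Asset("legacy-hsm", "RSA-2048", False),
    Asset("pilot-tls-proxy", "ML-KEM-768", True),
]
print(triage(inventory))
```

The "replace" bucket is the one that drives budgets and timelines, so it is worth producing early even if the inventory is incomplete.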

The Embedded and IoT Problem

Constrained devices are the hardest category. A firmware signing key shipped in a device today may still be needed to verify signatures in 2035. If that device's bootloader cannot handle the size of ML-DSA signatures, or lacks the RAM to run ML-KEM key generation, the migration path may require a hardware replacement rather than a software update.

That is why IoT Post-Quantum Cryptography Migration planning cannot wait until quantum computers are able to break today's encryption. It has to begin now, at the product design stage. From what I have seen, any device manufacturer treating this as a future problem is already accumulating technical debt.
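A design-stage check can be as simple as comparing signature sizes against the space a bootloader reserves for them. A small sketch, assuming hypothetical signature-slot sizes of 512 and 4,096 bytes and the approximate signature sizes quoted earlier in this article:

```python
# Approximate signature sizes in bytes (ECDSA figure assumes raw r || s).
SIGNATURE_BYTES = {
    "ECDSA P-256": 64,
    "ML-DSA-65": 3309,
    "SLH-DSA-128s": 7856,
}


def schemes_that_fit(signature_slot_bytes):
    """Schemes whose signature fits in the bootloader's reserved slot."""
    return [
        name
        for name, size in SIGNATURE_BYTES.items()
        if size <= signature_slot_bytes
    ]


# Hypothetical slot sizes for two device generations.
print("512-byte slot:", schemes_that_fit(512))
print("4096-byte slot:", schemes_that_fit(4096))
```

If the slot size is burned into ROM, the first device has no software path to a post-quantum signature at all, which is the hardware-replacement scenario described above.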

Governance and Crypto-Agility

Crypto-agility, the ability to change cryptographic algorithms without redesigning the entire system, is a common theme running through every serious migration roadmap, including those published so far by TNO. Systems that hard-code RSA-2048 into their design, instead of treating the algorithm as a configuration parameter, will need to be substantially rewritten to upgrade.

This is a design issue, not a cryptography issue. Any company that builds crypto-agile systems today will face significantly lower cost and effort when algorithm transitions arrive.
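In code, crypto-agility mostly means that callers never name an algorithm directly. Below is a minimal sketch of that pattern: a registry keyed by a configuration string. The two registered schemes are HMAC-based placeholders (a symmetric MAC, not a real signature) standing in for whatever RSA or ML-DSA bindings a real system would plug in. The `Scheme` type, the registry, and the config key are all assumptions made for illustration.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Scheme:
    sign: Callable[[bytes, bytes], bytes]
    verify: Callable[[bytes, bytes, bytes], bool]


def _hmac_scheme(digest):
    """Placeholder scheme; a real entry would wrap RSA, ML-DSA, etc."""
    def sign(key, message):
        return hmac.new(key, message, digest).digest()

    def verify(key, message, tag):
        return hmac.compare_digest(sign(key, message), tag)

    return Scheme(sign, verify)


# The only place algorithm names appear. Adding one is a one-line change.
REGISTRY: Dict[str, Scheme] = {
    "demo-sha256": _hmac_scheme(hashlib.sha256),
    "demo-sha512": _hmac_scheme(hashlib.sha512),
}


def get_scheme(config):
    """Look up the scheme named in configuration (hypothetical key name)."""
    return REGISTRY[config["signature_algorithm"]]


config = {"signature_algorithm": "demo-sha256"}
scheme = get_scheme(config)
tag = scheme.sign(b"key", b"release.bin")
assert scheme.verify(b"key", b"release.bin", tag)
```

Switching to the other entry is a configuration edit, not a code change, and that is the property that keeps a future algorithm transition cheap.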

The Standards Timeline: Where Things Stand Right Now

| Standard | Algorithm | Status | Role |
|---|---|---|---|
| FIPS 203 | ML-KEM | Final (2024) | Key encapsulation |
| FIPS 204 | ML-DSA | Final (2024) | Digital signatures |
| FIPS 205 | SLH-DSA | Final (2024) | Digital signatures (hash-based) |
| FIPS 206 | FN-DSA (Falcon) | In progress | Digital signatures (compact) |
| TBD | HQC | Under evaluation | Backup KEM |

Where things stand: the three finalized standards can be implemented today. FN-DSA and HQC will follow, but planning should not wait for them.

Two External Resources Worth Bookmarking

Two sources I consider genuinely helpful for anyone building implementation plans or writing about this space:

- The official NIST PQC project page. This is the reference source for the FIPS documents, draft standards, and migration guidance. Bookmark the NIST Post-Quantum Cryptography project for algorithm specifications and official timelines.

- The NCCoE PQC migration project. The National Cybersecurity Center of Excellence publishes enterprise-level migration guidance. Their migration project page covers real-world testing, interoperability findings, and deployment.

What This All Means – My Take

NIST's 2024 finalization was not the end of the post-quantum story. It was the starting gun.

The algorithms are ready. The actual work (inventorying systems, testing performance on real hardware, updating certificate infrastructure, retraining teams) is only just getting underway. KEMs and signatures are different tools for different jobs, and treating them as the same will cause real integration problems.

The organizations that will make the transition smoothly are the ones already testing ML-KEM in their own TLS stacks, specifying crypto-agile architectures in new products, and reading the NCCoE lab documents.

In my experience, organizations that wait for a final migration guide before starting any work end up scrambling when a real deadline arrives. Start with a KEM. Test hybrid ML-KEM-768 in TLS. Measure the difference in handshake size. That one experiment will teach you more than any white paper.
