
Why Most Organizations Are Already Behind

I would argue that the biggest blocker in post-quantum cryptography (PQC) migration is not choosing the right algorithm. It is not budget, and it is not executive buy-in. It is that most organizations simply do not know where their cryptography is.

TNO, the Netherlands Organisation for Applied Scientific Research, puts it plainly in its PQC migration guidance: you cannot migrate what you do not know you have. This is not a theoretical problem. It is playing out today in enterprise IT departments, government agencies, and critical infrastructure operators around the world.

This is why the NCCoE (National Cybersecurity Center of Excellence) runs a dedicated cryptographic discovery workstream. Cryptography is embedded in applications, protocols, code, and third-party libraries, often undocumented, with no clear owner, and usually unnoticed by the rest of the team.

Canada's national PQC roadmap, and PQShield's analysis of various government migration plans, explicitly schedule inventory and planning work for 2026 to 2028. That is not a distant horizon; it is now. Organizations that have not yet started discovery are already behind.

What Is Cryptographic Inventory and Quantum-Vulnerability Diagnosis, Exactly?

The Inventory Side

A cryptographic inventory is a continuously maintained record of every cryptographic asset in an environment. Not just TLS certificates. Everything.

That means:

- Protocols: TLS (all versions in use), IPsec, SSH, S/MIME, and any custom or legacy protocols still in service.

- Libraries and stacks: OpenSSL, BoringSSL, libsodium, platform-native crypto, and crypto embedded in chips or in IoT device firmware.

- Hardware: hardware security modules (HSMs), smart cards, trusted platform modules (TPMs), and cryptographic accelerators in IoT devices.

- Certificates, keys, and PKI: the full lifecycle picture, including location, owner, expiry date, and algorithm.

- Applications and services: anything that performs authentication, encryption, signing, or key exchange using public-key cryptography.

Every entry should carry metadata: owning system, position in the stack, data classification, and lifecycle status.

The Cryptographic Bill of Materials (CBOM), developed by IBM and now incorporated into the CycloneDX v1.6 standard, provides a structured way to describe exactly this. Think of it as an SBOM for cryptographic assets.
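To make the idea concrete, here is a minimal sketch of a single CBOM entry built as a Python dict and serialized to JSON. The field names (`cryptographic-asset`, `cryptoProperties`, `algorithmProperties`, `nistQuantumSecurityLevel`) follow my reading of the CycloneDX 1.6 schema and should be validated against the official schema before use. The `inventory:*` property names, the `payments-api` owner, and the RSA-2048 asset are hypothetical.

```python
import json

def crypto_asset(name: str, primitive: str, parameter_set: str,
                 nist_quantum_level: int, owner: str, classification: str) -> dict:
    """Build one CBOM entry modelled on the CycloneDX 1.6 cryptographic-asset component."""
    return {
        "type": "cryptographic-asset",
        "name": name,
        "cryptoProperties": {
            "assetType": "algorithm",
            "algorithmProperties": {
                "primitive": primitive,                  # e.g. "pke", "signature", "key-agree"
                "parameterSetIdentifier": parameter_set,  # here: the key size in bits
                "nistQuantumSecurityLevel": nist_quantum_level,  # 0 = no quantum resistance
            },
        },
        # Ownership and data classification are not crypto properties, so this sketch
        # keeps them in CycloneDX's generic name/value "properties" list.
        # The "inventory:*" names are hypothetical, not part of the standard.
        "properties": [
            {"name": "inventory:owner", "value": owner},
            {"name": "inventory:dataClassification", "value": classification},
        ],
    }

cbom = {
    "bomFormat": "CycloneDX",
    "specVersion": "1.6",
    "version": 1,
    "components": [
        crypto_asset("RSA-2048", "pke", "2048", 0, "payments-api", "confidential"),
    ],
}

print(json.dumps(cbom, indent=2))
```

Because the output is plain CycloneDX JSON, the same file can travel through the SBOM tooling an organization may already run.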

The Diagnosis Side

Once the inventory exists, quantum-vulnerability diagnosis classifies each asset by its exposure to quantum attack. The main concerns are RSA, classical Diffie-Hellman, and elliptic curve cryptography (ECC): all three are broken by a sufficiently powerful quantum computer running Shor's algorithm.
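A first-pass diagnosis can be as simple as mapping algorithm names to exposure categories. The sketch below uses an ordered prefix table; the table itself is a hypothetical, non-exhaustive example, and a real diagnosis would work from parsed algorithm identifiers (such as OIDs) rather than name strings.

```python
# Ordered (prefix, verdict) rules: the first matching prefix wins, so the more
# specific "SHA-1"/"SHA1" entries must come before the broader "SHA" entry.
# The table is illustrative, not exhaustive.
RULES = [
    ("ML-KEM", "pqc-ready"),
    ("ML-DSA", "pqc-ready"),
    ("SLH-DSA", "pqc-ready"),
    ("MD5", "classically-broken"),
    ("SHA-1", "classically-broken"),
    ("SHA1", "classically-broken"),
    ("RSA", "quantum-vulnerable"),      # Shor's algorithm
    ("ECDSA", "quantum-vulnerable"),
    ("ECDH", "quantum-vulnerable"),
    ("ED25519", "quantum-vulnerable"),
    ("X25519", "quantum-vulnerable"),
    ("DSA", "quantum-vulnerable"),
    ("DH", "quantum-vulnerable"),
    ("AES", "symmetric-or-hash"),       # weakened only by Grover; larger sizes help
    ("CHACHA20", "symmetric-or-hash"),
    ("SHA", "symmetric-or-hash"),
]

def quantum_exposure(algorithm: str) -> str:
    """Classify an algorithm name by its exposure to quantum (and classical) attack."""
    name = algorithm.strip().upper()
    for prefix, verdict in RULES:
        if name.startswith(prefix):
            return verdict
    return "unknown"  # anything unrecognized goes to manual review

for alg in ["RSA-2048", "ecdsa-p256", "ML-KEM-768", "AES-256-GCM", "SHA-1", "Blowfish"]:
    print(f"{alg}: {quantum_exposure(alg)}")
```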

Diagnosis goes beyond identifying algorithm families. It also looks at:

- Key lengths below safe thresholds.

- Outdated hash algorithms (MD5, SHA-1).

- Mixed configurations that pair quantum-safe algorithm suites with vulnerable ones in ways that cancel out the protection.

In my experience reviewing enterprise crypto posture assessments, weak configurations, not just weak algorithms, are consistently underreported. A TLS 1.3 server with a strong cipher string can still be undermined if its certificate chain includes an intermediate with an RSA-1024 key.
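The certificate-chain point is easy to miss if a scan only looks at the leaf. Here is a minimal sketch that checks every certificate in a chain for undersized keys, outdated signature hashes, and quantum-vulnerable key algorithms. The `ChainCert` record, the example chain, and the thresholds in `MIN_KEY_BITS` are all hypothetical; set them from your own policy.

```python
from dataclasses import dataclass

@dataclass
class ChainCert:
    subject: str
    key_algorithm: str   # e.g. "RSA", "ECDSA"
    key_bits: int
    signature_hash: str  # e.g. "SHA-256"

# Illustrative thresholds for this sketch only.
MIN_KEY_BITS = {"RSA": 2048, "ECDSA": 256}
WEAK_HASHES = {"MD5", "SHA-1"}
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "DSA", "DH", "ECDH"}

def diagnose_chain(chain: list[ChainCert]) -> list[str]:
    """Return findings for every certificate in the chain, not just the leaf."""
    findings = []
    for cert in chain:
        minimum = MIN_KEY_BITS.get(cert.key_algorithm)
        if minimum is not None and cert.key_bits < minimum:
            findings.append(f"{cert.subject}: {cert.key_algorithm}-{cert.key_bits} "
                            f"below {minimum}-bit minimum")
        if cert.signature_hash.upper() in WEAK_HASHES:
            findings.append(f"{cert.subject}: outdated hash {cert.signature_hash}")
        if cert.key_algorithm in QUANTUM_VULNERABLE:
            findings.append(f"{cert.subject}: {cert.key_algorithm} is quantum-vulnerable")
    return findings

chain = [
    ChainCert("leaf: api.example.com", "ECDSA", 256, "SHA-256"),
    ChainCert("intermediate CA", "RSA", 1024, "SHA-1"),
    ChainCert("root CA", "RSA", 4096, "SHA-256"),
]
for finding in diagnose_chain(chain):
    print(finding)
```

The leaf looks fine on classical terms; the findings that matter most come from the intermediate.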

How Discovery Actually Works in Practice

The NCCoE’s Cryptographic Discovery Workstream

The NCCoE does not just recommend discovery in theory; it runs a migration project that breaks discovery into concrete technical workstreams. The approach combines several techniques, because no single technique finds everything.

Static code and binary analysis scans source code, compiled binaries, and build artifacts to identify cryptographic API calls, hard-coded keys, and algorithm references. Tools such as IBM's Quantum Safe Explorer support multiple languages and flag quantum-vulnerable algorithms within a codebase.
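Production scanners parse code and resolve API calls; the sketch below only greps it, which is enough to show the idea and to run a quick first pass. The regex patterns and the sample file are hypothetical and will produce both misses and false positives.

```python
import re

# Hypothetical pattern set: each regex flags a likely reference to a
# quantum-vulnerable or outdated algorithm. Grep-level only, no parsing.
PATTERNS = {
    "RSA": re.compile(r"\bRSA\b", re.IGNORECASE),
    "ECDSA/ECDH": re.compile(r"\bECD(SA|H)\b|\bsecp\d+r1\b|\bprime256v1\b", re.IGNORECASE),
    "DH": re.compile(r"\bDiffie[-_ ]?Hellman\b|\bDH_generate_key\b", re.IGNORECASE),
    "MD5/SHA-1": re.compile(r"\bMD5\b|\bSHA-?1\b", re.IGNORECASE),
}

def scan_source(path: str, text: str) -> list[tuple[str, int, str]]:
    """Return (path, line number, algorithm label) for every pattern hit."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((path, lineno, label))
    return hits

sample = """from cryptography.hazmat.primitives.asymmetric import rsa, ec
key = rsa.generate_private_key(public_exponent=65537, key_size=2048)
digest = hashlib.md5(data).hexdigest()
curve = ec.SECP256R1()
"""

for path, lineno, label in scan_source("billing.py", sample):
    print(f"{path}:{lineno}: {label}")
```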

Running similar static-analysis pipelines against medium-sized codebases, I have consistently been surprised by the number of undocumented crypto calls, particularly in modules that have not been touched in years.

Network traffic inspection takes a different angle. Tools that continuously monitor traffic show where public-key cryptography is actually in use across TLS connections, VPN tunnels, and email flows, including shadow IT applications and partner connections that never appear in any codebase. This is especially valuable for finding legacy endpoints that still negotiate TLS 1.0 or weak cipher suites.
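Once a passive monitor has logged handshakes, flagging legacy protocols and weak suites is straightforward. This sketch assumes a hypothetical log format of (server, protocol version, IANA cipher suite name). One caveat: TLS 1.3 suite names do not encode the key exchange, so a real monitor also needs to record the negotiated group (for example X25519 versus a hybrid ML-KEM group) to judge quantum exposure.

```python
from typing import NamedTuple

class Handshake(NamedTuple):
    """One observed TLS handshake in a hypothetical passive-monitor log format."""
    server: str
    version: str       # e.g. "TLSv1.0", "TLSv1.3"
    cipher_suite: str  # IANA name, e.g. "TLS_RSA_WITH_AES_128_CBC_SHA"

LEGACY_VERSIONS = {"SSLv3", "TLSv1", "TLSv1.0", "TLSv1.1"}
WEAK_MARKERS = ("RC4", "3DES", "NULL", "EXPORT")

def flag_handshake(h: Handshake) -> list[str]:
    flags = []
    if h.version in LEGACY_VERSIONS:
        flags.append("legacy protocol")
    if h.cipher_suite.startswith("TLS_RSA_WITH_"):
        # Static RSA key exchange: no forward secrecy, so one RSA key
        # protects every recorded session to that server.
        flags.append("static RSA key exchange")
    if any(marker in h.cipher_suite for marker in WEAK_MARKERS):
        flags.append("weak bulk cipher")
    return flags

observed = [
    Handshake("intranet-wiki", "TLSv1.0", "TLS_RSA_WITH_3DES_EDE_CBC_SHA"),
    Handshake("api-gateway", "TLSv1.3", "TLS_AES_256_GCM_SHA384"),
]
for h in observed:
    print(h.server, flag_handshake(h) or ["no legacy flags"])
```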

Endpoint and keystore scanning covers what network monitoring cannot: offline keys, code-signing material, stored credentials, and PKCS#12, JKS, and PEM files sitting on endpoints.

TYCHON's ACDI (Automated Cryptography Discovery and Inventory) work demonstrates how to locate keystores on both Windows and Linux systems using PowerShell and native tools, and then verify whether those keystores actually hold quantum-vulnerable algorithms.
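For a sense of what the first step of endpoint and keystore scanning involves, here is a minimal, cross-platform Python sketch; it is not TYCHON's tooling. It walks a directory tree, picks out likely key material by file extension, and reads PEM headers to guess the key type. The extension list and labels are assumptions, and binary PKCS#12/JKS containers are only reported, since opening them usually needs a password.

```python
from pathlib import Path

# File extensions and PEM markers this sketch looks for; extend for your estate.
KEYSTORE_SUFFIXES = {".p12", ".pfx", ".jks", ".pem", ".key", ".crt"}
TEXT_SUFFIXES = {".pem", ".key", ".crt"}
PEM_HINTS = {
    "-----BEGIN RSA PRIVATE KEY-----": "RSA private key",
    "-----BEGIN EC PRIVATE KEY-----": "EC private key",
    "-----BEGIN PRIVATE KEY-----": "PKCS#8 private key (algorithm needs parsing)",
    "-----BEGIN CERTIFICATE-----": "X.509 certificate (algorithm needs parsing)",
}

def scan_keystores(root: str) -> list[tuple[str, str]]:
    """Walk a directory tree and return sorted (path, kind) pairs for likely key material."""
    findings = []
    for path in Path(root).rglob("*"):
        suffix = path.suffix.lower()
        if not path.is_file() or suffix not in KEYSTORE_SUFFIXES:
            continue
        if suffix in TEXT_SUFFIXES:
            # Read only the first 4 KB: enough to see a PEM header, cheap on large files.
            with path.open("r", errors="ignore") as fh:
                head = fh.read(4096)
            kind = next((label for marker, label in PEM_HINTS.items() if marker in head),
                        "unrecognised text/DER file")
        else:
            # PKCS#12 and JKS are binary containers; only their presence is reported here.
            kind = "binary keystore (PKCS#12/JKS)"
        findings.append((str(path), kind))
    return sorted(findings)
```

Calling `scan_keystores("/home")` on a developer workstation is a quick way to surface undocumented key files before running a fuller tool.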

PKI discovery maps the complete certificate ecosystem: internal certificates, external certificates, certificate chains, and device certificates across IoT fleets. This feeds directly into PKI migration planning, one of the most complex parts of any PQC migration.
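One way PKI discovery output feeds migration planning is by showing how many certificates depend on each CA, since re-keying a CA affects everything issued beneath it. The sketch below counts direct and transitive dependents from hypothetical (subject, issuer, algorithm) records and assumes the issuer graph has no cycles.

```python
from collections import defaultdict

# Hypothetical inventory extract: (subject, issuer, key algorithm). The root is self-issued.
CERTS = [
    ("Root CA", "Root CA", "RSA-4096"),
    ("Issuing CA 1", "Root CA", "RSA-2048"),
    ("Device CA", "Root CA", "ECDSA-P256"),
    ("web01", "Issuing CA 1", "RSA-2048"),
    ("web02", "Issuing CA 1", "ECDSA-P256"),
    ("sensor-0001", "Device CA", "ECDSA-P256"),
]

def dependents_per_ca(certs) -> dict[str, int]:
    """Count how many certificates sit below each CA, directly or transitively."""
    children = defaultdict(list)
    for subject, issuer, _algorithm in certs:
        if subject != issuer:  # skip the self-signed root's self-edge
            children[issuer].append(subject)

    def count(node: str) -> int:
        # Each child counts once, plus everything beneath it (assumes no issuer cycles).
        return sum(1 + count(child) for child in children.get(node, []))

    return {ca: count(ca) for ca in list(children)}

for ca, n in sorted(dependents_per_ca(CERTS).items(), key=lambda kv: -kv[1]):
    print(f"{ca}: {n} dependent certificates")
```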

Why One Method Isn’t Enough

Every discovery method has documented blind spots. Code scanning cannot see runtime configuration or dynamically loaded libraries. Network monitoring misses dormant keys and data at rest. File-system scans produce false positives by surfacing crypto files that are no longer in active use.

Post-Quantum's practitioner guidance explicitly recommends a multi-method approach: running static analysis, network packet monitoring, certificate discovery, and CBOM correlation in parallel. The overlap should not be read as redundancy; it is validation.
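The correlation step can be sketched as a simple merge: for each asset, record which discovery methods reported it, then treat multi-source findings as corroborated and single-source findings as needing verification. This assumes asset identifiers have already been normalized to a shared key (the hypothetical `service:algorithm` strings below), which in practice is the hard part.

```python
from collections import defaultdict

def correlate(findings_by_method: dict[str, set[str]]) -> dict[str, set[str]]:
    """Map each asset ID to the set of discovery methods that reported it."""
    seen_by = defaultdict(set)
    for method, assets in findings_by_method.items():
        for asset in assets:
            seen_by[asset].add(method)
    return dict(seen_by)

# Hypothetical, already-normalized results from three discovery methods.
findings = {
    "static-analysis": {"billing-svc:RSA-2048", "auth-svc:ECDSA-P256"},
    "network": {"auth-svc:ECDSA-P256", "legacy-vpn:DH-1024"},
    "keystore": {"auth-svc:ECDSA-P256", "ci-runner:RSA-2048"},
}

for asset, methods in sorted(correlate(findings).items()):
    status = "corroborated" if len(methods) > 1 else "single-source: verify"
    print(f"{asset}: {status} ({', '.join(sorted(methods))})")
```

Single-source findings are not wrong by default; an asset seen only by the network monitor (like the legacy VPN here) is exactly the kind of thing code scanning would miss.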

My own work showed the same pattern: the weaknesses of one approach were the strengths of another. Only by running them together do you get a picture you can trust.

Classifying Urgency: Tagging for Quantum Risk

The Four Tags That Matter

Inventorying assets is half the battle. The other half is ranking them by how urgently they need to migrate. This is where quantum-vulnerability diagnosis becomes a decision-support tool.

Every asset in the inventory should be tagged with four attributes:

- Algorithm family: RSA/ECC/DH (vulnerable), symmetric (generally safe at larger key sizes), or already PQC-ready.

- Key size: even within vulnerable families, a 4096-bit RSA key buys more time than a 1024-bit key.

- Data sensitivity: public metadata carries far less risk than encrypted health records or confidential communications.

- Data lifetime: the most critical dimension, and the one most organizations underweight.

Data lifetime is where the "harvest now, decrypt later" threat becomes concrete. An adversary intercepting encrypted traffic today does not need a quantum computer today; they only need one before the captured data loses its value.

For national security information, medical records, or long-term financial records, that window might be 10 to 20 years. For a session token, it might be 30 minutes.
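One widely used way to formalize this is Mosca's inequality: if the time data must stay secret plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer (CRQC) exists, that data is already exposed to harvest-now, decrypt-later. The sketch below encodes that check; the 5-year migration and 15-year CRQC figures are placeholder assumptions to vary, not forecasts.

```python
def hndl_exposed(data_lifetime_years: float, migration_years: float,
                 years_until_crqc: float) -> bool:
    """Mosca-style check: True if data must stay secret beyond the point a CRQC
    could exist, once the time needed to finish migrating is included."""
    return data_lifetime_years + migration_years > years_until_crqc

# Placeholder assumptions: 5 years to migrate, 15 years until a CRQC.
THIRTY_MINUTES_IN_YEARS = 30 / (365 * 24 * 60)
for label, lifetime in [("medical records", 20), ("session token", THIRTY_MINUTES_IN_YEARS)]:
    print(label, hndl_exposed(lifetime, migration_years=5, years_until_crqc=15))
```

The point of the exercise is not the exact numbers but the shape of the result: long-lived data can be exposed even under optimistic quantum timelines, while short-lived secrets rarely are.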

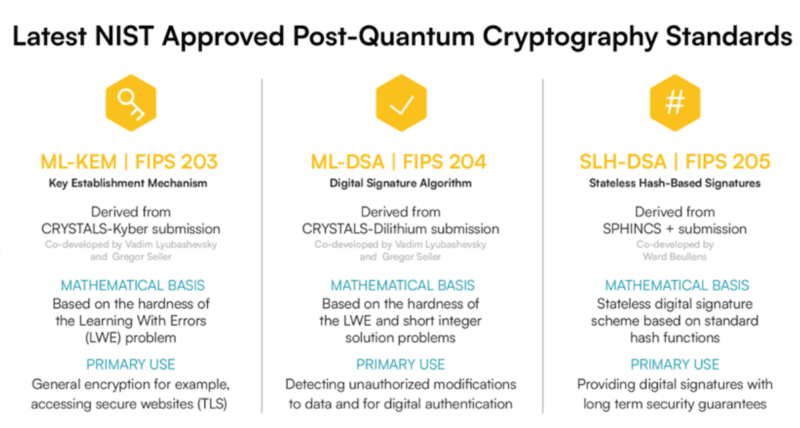

Combining algorithm vulnerability, data sensitivity, and data lifetime yields a risk tier. Both NIST's PQC migration guidance and TII's analysis of the initial PQC standards use this kind of tiering to identify urgent systems: those where long-lived or high-value data is protected by quantum-vulnerable algorithms, and which must be migrated first.
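As a rough illustration of that combination, here is a hypothetical three-tier rule. It is not NIST's or TII's scheme; the sensitivity labels and the 10-year lifetime cut-off are assumptions chosen only to show how the three attributes interact.

```python
# Hypothetical labels treated as high-value data in this sketch.
HIGH_VALUE = {"confidential", "restricted"}
LONG_LIVED_YEARS = 10  # illustrative cut-off, not a standard

def risk_tier(quantum_vulnerable: bool, sensitivity: str, lifetime_years: float) -> int:
    """Return 1 (migrate first), 2 (plan migration), or 3 (monitor)."""
    if not quantum_vulnerable:
        return 3
    high_value = sensitivity in HIGH_VALUE
    long_lived = lifetime_years >= LONG_LIVED_YEARS
    if high_value and long_lived:
        return 1
    if high_value or long_lived:
        return 2
    return 3

print(risk_tier(True, "restricted", 20))    # long-lived, high-value, RSA-protected
print(risk_tier(True, "public", 0.01))      # short-lived public data
```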

Connecting to Broader Security Frameworks

One under-appreciated aspect of this work is how easily it maps onto existing security programs. NIST has explicitly linked PQC migration tasks, such as crypto inventory and vulnerability diagnosis, to CSF practices (asset management, vulnerability identification) and SP 800-53 controls (configuration management, risk assessment).

This matters because it reframes inventory work. It is not a standalone PQC initiative sitting outside the security program; it is an extension of the asset management and risk governance that mature organizations should already be doing.

Teams that already run a vulnerability management program, supply-chain security assessments, or compliance audits are ahead of the curve; they only need to extend their scope to cover cryptographic assets.

This carry-over from supply-chain practice also ties naturally into The Future of Cyber Defense, where organizations are increasingly expected to maintain visibility into every dependency, cryptographic ones included, that could become an attack surface.

What’s Already Working and What’s Still Catching Up

The Mature Toolkit

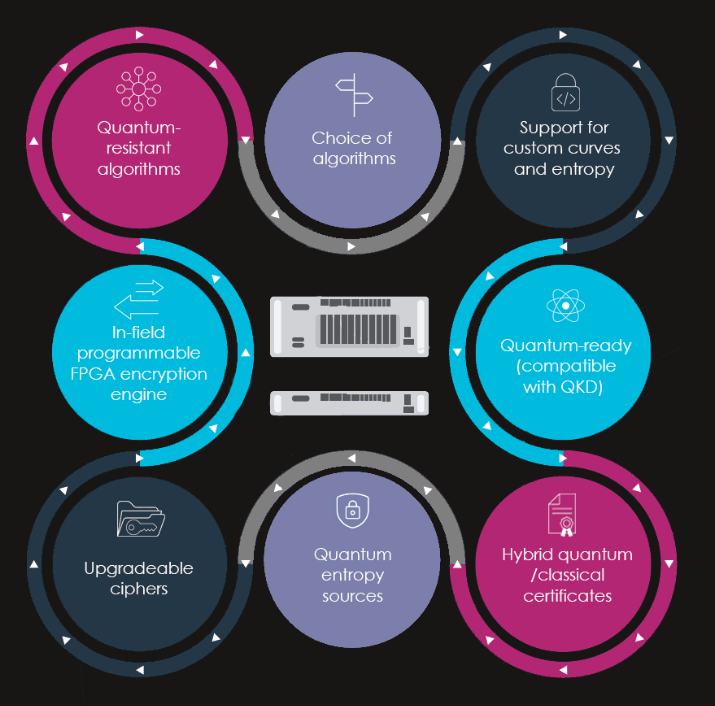

Several categories of tooling are now genuinely industrial-grade. Code and binary scanning is a mature, well-established category of analysis tooling. Network-based discovery platforms built on traffic inspection are deployed at scale in financial services and government environments. The CycloneDX-based CBOM standard provides an interoperability layer between tools and feeds into existing SBOM systems.

Vendors such as QryptoCyber, QuSecure, and IBM (with Quantum Safe Advisor) have built enterprise platforms that combine discovery, inventory management, and quantum-risk dashboards in tightly integrated systems. These are not proof-of-concept tools; they are already in operational use in live environments, supporting regulatory compliance and migration planning.

What’s Still Developing

It is equally important to know where the field is still catching up:

AI-driven adaptive inventory is emerging. Systems are beginning to turn static CBOMs into living remediation plans, using machine learning to correlate discovery-tool results and suggest migration sequencing. This is early, but moving fast.

Layered, infrastructure-wide visibility is still out of reach for most organizations. Inventory work tends to focus on the application and network layers, while HSMs, identity infrastructure, hardware roots of trust, and firmware-level cryptography remain poorly examined. If those layers contain vulnerable algorithms, the blast radius of an eventual compromise is far larger than that of a single TLS endpoint.

OT, IoT, and firmware scanning is one of the hardest open problems. Inspecting embedded cryptography often requires vendor cooperation, or specialized tooling just to see a device's CBOM data.

Ideas from The Rise and Types of Digital Twins are already appearing in advanced crypto inventory discussions: specifically, using digital-twin models of infrastructure to simulate where cryptographic dependencies are embedded before running live discovery against sensitive applications.

I Mapped My Own Stack – Here’s What That Looked Like

Getting started does not require a commercial platform. It requires a methodology.

These are the steps I followed when building my first inventory on a mid-complexity stack. They track the progression proposed by both NCCoE and Post-Quantum in their published guidance:

Start with the CBOM schema. Before running any tools, define the fields you will track: algorithm, key size, library version, owning service, data classification, certificate expiry, and data lifetime. QuSecure's published breakdown of what a crypto inventory should contain makes a useful starting checklist.
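Fixing the schema before scanning can be as light as a typed record with exactly those fields. The dataclass below is a hypothetical home-grown schema, not a CycloneDX structure; the example values are illustrative.

```python
from dataclasses import asdict, dataclass
from datetime import date
from typing import Optional

@dataclass
class InventoryRecord:
    """One row of a home-grown crypto inventory, using the fields listed above."""
    algorithm: str                       # e.g. "RSA"
    key_size: int                        # bits
    library_version: str                 # e.g. "OpenSSL 3.0.13"
    owning_service: str
    data_classification: str             # e.g. "public", "confidential"
    certificate_expiry: Optional[date]   # None when the asset is not a certificate
    data_lifetime_years: float

    def days_to_expiry(self, today: date) -> Optional[int]:
        """Days until the certificate expires, or None for non-certificate assets."""
        if self.certificate_expiry is None:
            return None
        return (self.certificate_expiry - today).days

record = InventoryRecord("RSA", 2048, "OpenSSL 3.0.13", "payments-api",
                         "confidential", date(2026, 3, 1), 7.0)
print(asdict(record))
```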

Run a first static-analysis pass. It is fast and non-invasive, and it surfaces the most surprising findings right away: hard-coded algorithm strings, forgotten library imports, and stray utility code in crypto modules.

Add network traffic visibility. Even a short capture window on internal traffic reveals protocols and cipher suites that never appear in the code.

Scan endpoints and keystores. This is where offline keys and code-signing material turn up. I found PKCS#12 files scattered across developer workstations that nobody on the team had documented.

Tag by risk tier. Once the inventory exists, even a simple tagging pass on algorithm family, data sensitivity, and data lifetime reveals which assets must be migrated first.

Feed it back into governance. An inventory is only useful as long as it is maintained. Adding CBOM generation to CI/CD pipelines and, more importantly, integrating the CBOM with your asset management system is what turns a one-time scan into an ongoing practice.
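As a sketch of the CI/CD step, the script below compares the CBOM from the previous build with the current one and fails the job if a new quantum-vulnerable asset appears. It assumes some earlier pipeline stage already writes CycloneDX-style CBOM JSON files and passes their paths as arguments; the prefix list is an illustrative policy, not a standard.

```python
import json
import sys

# Illustrative policy: asset names with these prefixes count as quantum-vulnerable.
VULNERABLE_PREFIXES = ("RSA", "ECDSA", "ECDH", "DH", "DSA")

def crypto_assets(cbom: dict) -> set[str]:
    """Names of cryptographic-asset components in a CycloneDX-style CBOM dict."""
    return {c["name"] for c in cbom.get("components", [])
            if c.get("type") == "cryptographic-asset"}

def new_vulnerable_assets(previous: dict, current: dict) -> set[str]:
    """Assets present now but not before, filtered to vulnerable families."""
    added = crypto_assets(current) - crypto_assets(previous)
    return {name for name in added if name.upper().startswith(VULNERABLE_PREFIXES)}

def main(prev_path: str, curr_path: str) -> int:
    with open(prev_path) as f_prev, open(curr_path) as f_curr:
        flagged = new_vulnerable_assets(json.load(f_prev), json.load(f_curr))
    for name in sorted(flagged):
        print(f"New quantum-vulnerable asset introduced: {name}", file=sys.stderr)
    return 1 if flagged else 0  # a non-zero exit code fails the CI job

if __name__ == "__main__" and len(sys.argv) == 3:
    sys.exit(main(sys.argv[1], sys.argv[2]))
```

Gating only on newly introduced assets keeps the check usable on day one, while the existing backlog is worked down through the risk tiers.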

The Road Ahead for Cryptographic Inventory and Quantum-Vulnerability Diagnosis

The pressure driving this work is not abstract. With government mandates, regulatory frameworks, and explicit timelines in national PQC roadmaps, crypto inventory is becoming a compliance artifact, not merely an engineering best practice. Organizations that treat it as a future project are already behind the realistic timelines set by national guidance.

The PQC migration roadmaps from TNO, NCCoE, PQCC, and Canada all share the same first step.

Before algorithm selection, before vendor evaluation, before migration planning: discovery and inventory. You cannot prioritize what you have not found.

The good news is that the tools, standards, and methodologies to do this well are available today.

Guidance from NIST, the CycloneDX CBOM standard, NCCoE's published workstreams, and practitioners such as Post-Quantum, Encryption Consulting, and TYCHON, among others, gives any organization a complete starting framework. Most of it is free.

The question is not whether cryptographic inventory matters. It is whether your organization starts mapping now, or waits until the window for an orderly migration has closed.

I’m a technology writer with a passion for AI and digital marketing. I create engaging, useful content that bridges the gap between complex technology concepts and everyday digital practice. My writing aims to make complex topics easy to follow, spark curiosity, and encourage participation. I continue to research innovation and technology. Let’s connect and talk technology!