
Why This Migration Can’t Wait And Why It’s Easy to Break Things

The cryptographic infrastructure most organizations rely on was designed to last decades. RSA, ECC, and AES have served as the foundations of secure communications for so long, and so reliably, that they have become almost invisible. They are embedded in TLS handshakes, VPN tunnels, code-signing pipelines, hardware security modules, and hundreds of enterprise applications that no one has touched in years.

Then quantum computing entered the picture.

The problem is not that quantum computers are breaking RSA today. They are not, at least not yet. The more pressing threat is a strategy often called “harvest now, decrypt later”: adversaries are already capturing encrypted traffic in the hope of decrypting it once sufficiently powerful quantum machines exist.

For data whose confidentiality must hold for many years (government communications, financial records, healthcare data, intellectual property), the clock started ticking years ago.

I have deployed many security audit frameworks in enterprise settings, and the pattern has been nearly uniform: everybody knows PQC is coming, but no one wants to touch the crypto layer, because it feels like operating on the heart of a running system. The hesitation is understandable. It is also dangerous.

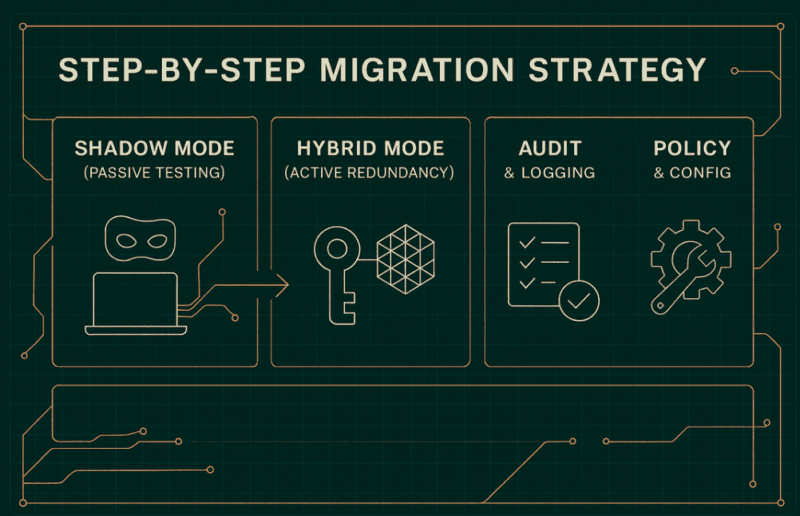

This is where hybrid and crypto-agile strategies come in, and in my view they are the most workable framework for migrating without breaking everything. They do not mean tearing out your current stack. They mean building a migration path that keeps everything running until the transition is complete.

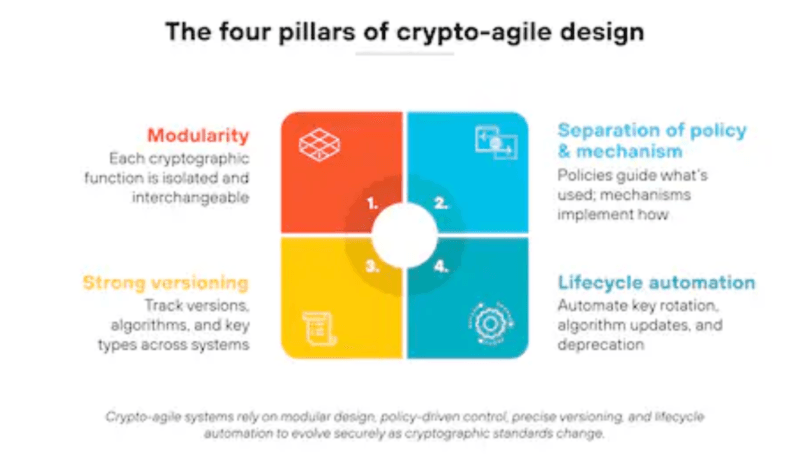

What Crypto-Agility Actually Means

The Core Idea

Crypto-agility is the ability to replace cryptographic algorithms (or introduce new ones) without rewriting all the software that depends on them. It sounds simple. In practice, most systems are built the other way around: algorithms are hard-wired into protocols, embedded software, firmware, libraries, and APIs, with no abstraction layer in between.

As NIST points out in Considerations for Achieving Cryptographic Agility, even the post-quantum algorithms now being standardized may change, be phased out, or be parameterized differently. Hard-coding ML-KEM into device firmware today carries the same kind of long-term risk as hard-coding RSA into firmware did two decades ago.

The goal of crypto-agility is not to pick the perfect algorithm on the first try. It is to engineer systems so that no algorithm choice is permanent.

Why Standards Bodies Are Insisting On It

The NIST IR 8547 transition plan, the NCCoE post-quantum migration practice guide, and ENISA's reports on the state of PQC all carry the same message: organizations must not treat PQC migration as a one-time upgrade. It is an ongoing capability. Algorithms will change. Threat models will evolve. Regulatory requirements will tighten.

I have encountered teams that assumed their first PQC pilot would also be their last. What makes a migration smooth rather than a crisis is agility designed into the architecture from day one.

Hybrid Deployments: Running Two Cryptosystems at Once

What Hybrid Actually Looks Like in Practice

In a hybrid deployment, classical and post-quantum cryptography run together during the transition period. The simplest form is dual key establishment: an ECDH key exchange and an ML-KEM key exchange are both performed, and the two resulting shared secrets are combined (typically concatenated) and passed through a key derivation function (KDF). The session stays secure as long as at least one of the two algorithms holds.
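To make the combining step concrete, here is a minimal sketch of the KDF stage using only Python's standard library. It assumes the two 32-byte shared secrets have already been produced by X25519 and ML-KEM-768 (random stand-in bytes below, since the standard library implements neither) and applies HKDF-SHA256 as defined in RFC 5869 to their concatenation. The helper names and the `info` label are hypothetical; real protocols such as the TLS hybrid drafts specify their own exact concatenation order and transcript binding.

```python
import hashlib
import hmac
import os


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """HKDF-Extract (RFC 5869) with HMAC-SHA256."""
    if not salt:
        salt = bytes(hashlib.sha256().digest_size)  # 32 zero bytes, per RFC 5869
    return hmac.new(salt, ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """HKDF-Expand (RFC 5869) with HMAC-SHA256."""
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def combine_hybrid_secrets(classical_ss: bytes, pq_ss: bytes, transcript: bytes) -> bytes:
    """Derive one session key from both shared secrets (hypothetical helper).

    Both secrets feed the same KDF, so an attacker must recover both to
    learn the session key: the result stays secret if either algorithm holds.
    """
    ikm = classical_ss + pq_ss  # fixed order; both peers must agree on it
    prk = hkdf_extract(salt=b"", ikm=ikm)
    return hkdf_expand(prk, info=b"hybrid-demo v1" + transcript, length=32)


# Stand-ins: in a real stack these come from X25519 and ML-KEM-768.
x25519_ss = os.urandom(32)
mlkem_ss = os.urandom(32)
session_key = combine_hybrid_secrets(x25519_ss, mlkem_ss, transcript=b"handshake-hash")
print(len(session_key))  # 32
```

Concatenating before the KDF, rather than XOR-ing or adding the secrets, is the usual choice because the output then depends on every bit of both inputs.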

Hybrid key establishment already shows up in:

- TLS 1.3 hybrid key exchange: Cloudflare has run X25519 combined with ML-KEM-768 in production for several years, offering it to browsers that support it alongside a classical fallback.

- IPsec hybrid tunnels: In March 2026, Cloudflare announced its deployment of post-quantum IPsec, one of the first large-scale hybrid IPsec deployments in a production network.

- Enterprise encryptors: Hardware from vendors such as Thales and ID Quantique includes configurable crypto modules that can run classical and PQC algorithms side by side.

Dual signatures follow the same principle: a document or code artifact carries both a classical ECDSA signature and an ML-DSA (formerly Dilithium) signature. Systems that do not yet support PQC can still verify the classical signature, while PQC-capable systems verify the ML-DSA signature as well.
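The verification rule behind dual signatures can be sketched as a small policy function. This is a hypothetical policy, not one taken from a specific standard: a verifier checks every signature whose algorithm it understands, requires all of those to pass, and rejects the artifact if it understands none. Python's standard library has neither ECDSA nor ML-DSA, so HMAC tags stand in for both signatures purely so the sketch runs; the algorithm labels and helper names are illustrative.

```python
import hashlib
import hmac
from typing import Callable, Dict

# Hypothetical verifier shape: (message, signature) -> bool
Verifier = Callable[[bytes, bytes], bool]


def make_standin(key: bytes):
    """Return (sign, verify) built on HMAC-SHA256.

    Stand-in only: HMAC is a symmetric MAC, not a public-key signature.
    """
    def sign(msg: bytes) -> bytes:
        return hmac.new(key, msg, hashlib.sha256).digest()

    def verify(msg: bytes, sig: bytes) -> bool:
        return hmac.compare_digest(sign(msg), sig)

    return sign, verify


def verify_dual(msg: bytes, sigs: Dict[str, bytes], verifiers: Dict[str, Verifier]) -> bool:
    """Accept only if every signature this verifier understands is valid
    and it understands at least one of them."""
    checked = 0
    for alg, sig in sigs.items():
        verify = verifiers.get(alg)
        if verify is None:
            continue  # e.g. a legacy verifier skipping the ML-DSA signature
        if not verify(msg, sig):
            return False
        checked += 1
    return checked > 0


ecdsa_sign, ecdsa_verify = make_standin(b"classical-demo-key")
mldsa_sign, mldsa_verify = make_standin(b"pq-demo-key")

artifact = b"release-1.4.2.tar.gz contents"
sigs = {"ecdsa-p256": ecdsa_sign(artifact), "ml-dsa-65": mldsa_sign(artifact)}

legacy = {"ecdsa-p256": ecdsa_verify}
pq_ready = {"ecdsa-p256": ecdsa_verify, "ml-dsa-65": mldsa_verify}
print(verify_dual(artifact, sigs, legacy), verify_dual(artifact, sigs, pq_ready))  # True True
```

Note that this lenient rule lets a PQC-capable verifier accept an artifact whose ML-DSA signature has been stripped; a stricter deployment would require the post-quantum signature once all verifiers can check it.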

The Performance and Protocol Reality

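Hybrid doesn’t come free. Running two key exchange mechanisms enlarges handshakes and adds computational cost. ML-KEM public keys are much larger than ECC keys: an ML-KEM-768 encapsulation key is 1,184 bytes, versus 32 bytes for an X25519 public key. ML-DSA signatures are likewise far larger than ECDSA signatures (3,309 bytes for ML-DSA-65 versus 64 bytes for a raw ECDSA P-256 signature).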
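The growth is easy to quantify from the parameter sets in FIPS 203 and FIPS 204. The sketch below totals the key-share bytes a hybrid X25519 + ML-KEM-768 exchange puts on the wire in a TLS 1.3 handshake and compares signature sizes. It counts raw cryptographic payload only; extension headers, certificate encoding, and record framing are ignored, so real handshakes grow somewhat more.

```python
# Byte sizes from FIPS 203 (ML-KEM), FIPS 204 (ML-DSA), RFC 7748 (X25519).
SIZES = {
    "x25519_public": 32,
    "mlkem768_encaps_key": 1184,
    "mlkem768_ciphertext": 1088,
    "ecdsa_p256_sig_raw": 64,  # r || s, before DER encoding
    "mldsa65_sig": 3309,
    "mldsa65_public": 1952,
}


def key_share_bytes(hybrid: bool) -> tuple[int, int]:
    """Return (client_share, server_share) sizes for the key exchange alone."""
    client = SIZES["x25519_public"]
    server = SIZES["x25519_public"]
    if hybrid:
        client += SIZES["mlkem768_encaps_key"]  # client sends an ML-KEM encapsulation key
        server += SIZES["mlkem768_ciphertext"]  # server answers with a ciphertext
    return client, server


classical = key_share_bytes(hybrid=False)
hybrid = key_share_bytes(hybrid=True)
print("classical key shares:", classical)  # (32, 32)
print("hybrid key shares:   ", hybrid)     # (1216, 1120)
print("signature growth: %.0fx" % (SIZES["mldsa65_sig"] / SIZES["ecdsa_p256_sig_raw"]))  # 52x
```

Roughly 2.2 KB of extra key-share data per handshake is negligible on a desktop link but can matter on constrained IoT radios, which matches the latency observations below.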

On constrained IoT endpoints, I found that hybrid TLS handshakes added measurable latency: not disastrous, but significant for latency-sensitive protocols. No team should roll out hybrid at scale without proper protocol and network testing in place.

Aspects to evaluate before going hybrid:

- MTU fragmentation: In VPN and tunneling setups, larger keys can push packets past the MTU limit and force fragmentation.

- Certificate chain size: Dual-certificate constructions bloat certificate chains.

- HSM firmware support: Most HSMs need firmware updates to support PQC key operations, and some older models cannot be updated at all.

- Load balancer TLS termination: If TLS terminates at a load balancer, that layer needs PQC support too, not just the backend.
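For the MTU question, a rough fragment count is simple arithmetic. This sketch assumes an IPv4 path, a handshake message carried in a single UDP datagram (as in IKEv2), a 20-byte IPv4 header without options, and an 8-byte UDP header. The 300 bytes of "other handshake fields" in the example are a hypothetical figure; substitute your own measured message sizes and tunnel encapsulation overhead.

```python
import math

IPV4_HEADER = 20  # bytes, assuming no IP options
UDP_HEADER = 8


def ipv4_fragments(message_bytes: int, mtu: int = 1500) -> int:
    """Number of IPv4 fragments needed to carry one UDP datagram.

    Every fragment repeats the IPv4 header, and fragment data (except the
    last) must be a multiple of 8 bytes, per the IPv4 fragmentation rules.
    """
    datagram = message_bytes + UDP_HEADER        # UDP header travels in fragment 1 only
    per_fragment = (mtu - IPV4_HEADER) // 8 * 8  # usable data bytes per fragment
    return math.ceil(datagram / per_fragment)


# Hypothetical message: the 1,216-byte hybrid client key share plus an assumed
# 300 bytes of other handshake fields.
hybrid_msg = 1216 + 300
print(ipv4_fragments(hybrid_msg))            # 2
print(ipv4_fragments(hybrid_msg, mtu=1400))  # 2 (e.g. inside another tunnel)
print(ipv4_fragments(32 + 300))              # 1 (classical X25519 share only)
```

Crossing from one packet to two is where problems tend to start, because some middleboxes drop IP fragments; IKEv2 works around this with its own message-level fragmentation (RFC 7383).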

Post-quantum migration planning documents such as NIST IR 8547 are practically useful here: they include assessment guidance that addresses exactly these protocol-layer questions.

Designing Agile Architectures: Patterns That Actually Work

Abstract Your Crypto APIs

The biggest structural change an organization can make is to add a clean abstraction layer between application code and cryptographic operations. Instead of calling functions such as RSA_sign or ECDH_compute_key directly from application logic, code goes through an internal crypto service or SDK that maps each operation to whichever algorithm is currently configured.

This pattern means:

- Algorithm changes happen in one place instead of across hundreds of source files.

- New PQC algorithms can be tested in staging, without ever having to touch production code.

- Cryptographic operation audit trails are centralized.

For organizations building new services, this should be a baseline requirement, not an optimization. Teams working with existing codebases should first build a cryptographic inventory, finding every place where algorithms are hard-coded, before embarking on any migration.
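Here is a minimal sketch of that abstraction layer in Python, using hashing as the example operation because the standard library can run it. The `CryptoService` class, its registry, and the `alg:hex` output format are hypothetical illustrations, not an existing SDK: application code asks for a digest, the service applies the currently configured algorithm, and every output is tagged with the algorithm that produced it so older values remain verifiable after a switch.

```python
import hashlib
import hmac
from typing import Callable, Dict

HashFn = Callable[[bytes], bytes]


class CryptoService:
    """Hypothetical internal crypto service: callers never name an algorithm."""

    def __init__(self, current: str):
        self._registry: Dict[str, HashFn] = {
            "sha256": lambda d: hashlib.sha256(d).digest(),
            "sha3-256": lambda d: hashlib.sha3_256(d).digest(),
            "sha512": lambda d: hashlib.sha512(d).digest(),
        }
        self.set_algorithm(current)

    def set_algorithm(self, name: str) -> None:
        """Switch the algorithm used for new operations (the one place to change)."""
        if name not in self._registry:
            raise ValueError(f"unsupported algorithm: {name}")
        self._current = name

    def digest(self, data: bytes) -> str:
        """Hash with the current algorithm and tag the result with its name."""
        return f"{self._current}:{self._registry[self._current](data).hex()}"

    def verify(self, data: bytes, tagged: str) -> bool:
        """Check a tagged digest using whichever algorithm produced it."""
        alg, _, hex_value = tagged.partition(":")
        fn = self._registry.get(alg)
        if fn is None:
            return False
        return hmac.compare_digest(fn(data).hex().encode(), hex_value.encode())


svc = CryptoService(current="sha256")
old = svc.digest(b"invoice-42")
svc.set_algorithm("sha3-256")  # migration: one call, no caller changes
new = svc.digest(b"invoice-42")
print(old.split(":")[0], new.split(":")[0])  # sha256 sha3-256
print(svc.verify(b"invoice-42", old))        # True: legacy values still verify
```

The same shape extends to key exchange or signing: adding a PQC implementation then touches the registry, not the callers.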

Configuration-Driven Algorithm Selection

The second pattern, complementing API abstraction, is to move algorithm selection out of code and into configuration. A centralized crypto policy engine, whether a home-grown internal service or a vendor offering such as Keyfactor or CyberArk, lets security teams push algorithm choices across the estate without rewriting client code.

Crypto policies can be versioned just like APIs. Policy v1: RSA-2048 + SHA-256. Policy v2 adds hybrid ML-KEM + X25519. Once the ecosystem has caught up, policy v3 removes the classical component. Each service declares which policy versions it supports, and the engine handles the rest.
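A versioned policy engine can be sketched in a few lines. The policy table mirrors the v1/v2/v3 progression just described; the data layout, field names, and the selection rule (assign the newest version a service supports, refuse retired versions) are illustrative assumptions, not how Keyfactor, CyberArk, or any other product works.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

# Hypothetical policy table mirroring the v1/v2/v3 progression.
POLICIES = {
    1: {"key_exchange": ("RSA-2048",), "hash": "SHA-256"},
    2: {"key_exchange": ("X25519", "ML-KEM-768"), "hash": "SHA-256"},  # hybrid
    3: {"key_exchange": ("ML-KEM-768",), "hash": "SHA-256"},           # classical removed
}


@dataclass(frozen=True)
class Service:
    name: str
    supported_policies: FrozenSet[int]


class PolicyEngine:
    def __init__(self, minimum_version: int):
        self.minimum_version = minimum_version  # versions below this are retired

    def assign(self, svc: Service) -> Tuple[int, dict]:
        """Pick the newest non-retired policy the service declares support for."""
        allowed = [v for v in svc.supported_policies
                   if v in POLICIES and v >= self.minimum_version]
        if not allowed:
            raise RuntimeError(f"{svc.name} supports no permitted policy; upgrade required")
        version = max(allowed)
        return version, POLICIES[version]


engine = PolicyEngine(minimum_version=2)  # security team retires v1 centrally
billing = Service("billing-api", frozenset({1, 2}))
gateway = Service("edge-gateway", frozenset({1, 2, 3}))
print(engine.assign(billing)[0], engine.assign(gateway)[0])  # 2 3
```

Raising `minimum_version` is the central lever: one configuration change retires a policy estate-wide and surfaces every service that cannot follow.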

Versioned crypto policies mirror how Digital Twin Development Tools manage the configuration of version-controlled environments: separating configuration from execution logic works just as well for cryptographic policy management. Organizations already experienced with that pattern will find crypto policy engines conceptually familiar.

Centralized Policy Engines vs. Scattered Decisions

The alternative, letting individual teams, services, or vendors make their own algorithm decisions, produces exactly the kind of fragmentation that makes migration agonizing. Crypto decisions end up scattered across codebases, firmware, network devices, and third-party integrations, with no clean inventory, no consistent upgrade path, and no way to react quickly when an algorithm has to change.

A centralized policy engine does not mean a single point of failure. It means a single answer to which algorithms are permitted, enforced at the service level.

The NCCoE post-quantum migration practice guide strongly recommends this approach in enterprise settings, especially for organizations with a large certificate inventory or heavy reliance on PKI.

Working With Vendors and Ecosystems

Ask the Right Questions Before You Sign Anything

Vendor readiness for PQC is wildly uneven at the moment. Some major platform vendors, including Microsoft, Google, Amazon, and Cloudflare, already run hybrid PQC in production. Others are still at the roadmap stage, which can mean anything from “we are actively working on it” to “we have one engineer assigned to research it at some point.”

Before renewing any contract or signing a new procurement agreement with a technology vendor, security and procurement teams should ask:

- Does your product support hybrid key establishment today? If not, when will it?

- Do you participate in the NCCoE Migration to Post-Quantum Cryptography project?

- Which PQC Coalition recommendations do you follow for your product category?

- When do you expect full ML-KEM and ML-DSA support?

- How will algorithm updates be delivered: firmware update, SDK update, or configuration update?

These questions belong in the contract, not just in the sales conversation. Binding vendors to PQC support timelines through procurement clauses is a sensible risk management measure, and a number of financial sector organizations are already doing so under the cryptographic agility framework developed by FS-ISAC.

The Ecosystem Interoperability Challenge

One of the least-documented challenges of hybrid deployment is that vendors implement hybrid patterns differently. No single finalized hybrid TLS standard exists yet, so different browser and server implementations may negotiate hybrid key exchange using slightly different drafts and algorithm combinations.

Here the Digital Twins vs Simulations distinction becomes unexpectedly relevant. Teams building cryptographic migration test environments often debate whether to build a full digital twin of production, modeling every network path, TLS endpoint, and certificate chain, or whether a lighter simulation of the critical paths is enough.

In my experience, interoperability edge cases surface only in full-environment tests, not in simplified simulations. The difference matters.

Procurement and Contract Clauses

The CSA Practitioner Guide to Post-Quantum Cryptography includes specific guidance on vendor assessment. The most important rule: treat PQC readiness like any other security control in supplier risk management. Demand it, review it, and make renewal contingent on demonstrated progress.

In regulated industries such as financial services, healthcare, and defense contracting, this is starting to become a compliance requirement rather than merely a best practice. ENISA's reporting on PQC adoption across EU member states shows that regulatory pressure moves vendor timelines noticeably faster than voluntary adoption does.

What’s Already Deployed and What’s Just Getting Started

Already in Production

The hybrid PQC ecosystem is further along than most organizations think:

- Cloudflare IPsec: Cloudflare is rolling out IPsec tunnels with X25519+ML-KEM-768 hybrid key exchange in production.

- Google Chrome: Hybrid PQC key exchange has been enabled by default for TLS connections since 2024.

- Signal Protocol: Signal added post-quantum protection (PQXDH) to its key agreement protocol in 2023.

- NIST standards: ML-KEM (FIPS 203), ML-DSA (FIPS 204), and SLH-DSA (FIPS 205) were finalized in August 2024 and are ready for implementation.

What’s Just Beginning

The gaps are real and worth naming:

- Automated cryptographic discovery tooling: Most organizations have not fully catalogued where classical cryptography is deployed across their infrastructure. Vendor tools, such as QuSecure's, are immature but improving.

- AI-assisted migration pipelines: Early experiments with ML-powered tools that scan codebases and identify hard-coded algorithm dependencies are promising, but not yet production-ready or widely available.

- PKI migration at scale: Migrating certificate authorities and certificate chains in large enterprises remains a slow, manual process.

- IoT and embedded systems: This is the longest tail of the migration challenge. Hardware is deployed with 10-15 year lifetimes and often cannot easily be updated with new firmware.

This is where Digital Twins in Product Design approaches are beginning to shape how security architects plan PQC migrations. Simulating a product's or system's cryptographic surface area before physical deployment, for example by testing hybrid configurations against simulated protocol stacks, reduces the risk of discovering compatibility issues after the hardware is already in the field.

My Take: Start Now If You Haven't Already

A Practical Starting Point

Guidance from NIST, ENISA, NCCoE, and FS-ISAC all points to the same first step: inventory. You cannot migrate what you cannot see. Everything builds on a cryptographic bill of materials (CBOM), a map of every protocol, key type, certificate, and algorithm in use across the environment.
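Even a crude source scan makes a useful first pass at that inventory. The sketch below walks a directory tree and flags lines that name classical, quantum-vulnerable algorithms. The regex and the file-extension list are assumptions to tune for your codebase, and a text search like this misses algorithms chosen at runtime, baked into binaries, or configured on network devices, which is where dedicated discovery tooling comes in.

```python
import os
import re
from dataclasses import dataclass
from typing import Iterator, List

# Assumed patterns for quantum-vulnerable primitives; extend for your stack.
PATTERN = re.compile(
    r"\b(RSA[-_]?\d{3,4}|RSA|ECDSA|ECDH|X25519|Ed25519|secp256r1|P-256|DSA)\b"
)
SOURCE_EXTENSIONS = {".py", ".go", ".java", ".c", ".h", ".ts", ".yaml", ".yml", ".conf"}


@dataclass
class Finding:
    path: str
    line_no: int
    algorithm: str
    line: str


def scan_text(path: str, text: str) -> List[Finding]:
    """Return one finding per algorithm mention in the given text."""
    findings = []
    for i, line in enumerate(text.splitlines(), start=1):
        for match in PATTERN.finditer(line):
            findings.append(Finding(path, i, match.group(1), line.strip()))
    return findings


def scan_tree(root: str) -> Iterator[Finding]:
    """Yield findings for every source-like file under root."""
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            if os.path.splitext(name)[1] not in SOURCE_EXTENSIONS:
                continue
            path = os.path.join(dirpath, name)
            with open(path, encoding="utf-8", errors="ignore") as fh:
                yield from scan_text(path, fh.read())


sample = 'key = rsa.generate_private_key(65537, 2048)  # RSA-2048\ncurve = "P-256"\n'
for f in scan_text("demo.py", sample):
    print(f.line_no, f.algorithm)  # 1 RSA-2048, then 2 P-256
```

Note the case-sensitive pattern skips the lowercase `rsa` module name but catches the `RSA-2048` comment; in practice you would feed findings like these into a CBOM record rather than print them.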

With an inventory in hand, a priority order recurs across migration roadmaps:

- Protect long-lived data first: anything encrypted today that must stay confidential for 5-10 years or longer is the top priority.

- Pilot hybrid key exchange on lower-risk services: internal services, dev/staging infrastructure, non-critical external endpoints.

- Renegotiate vendor contracts: use the next renewal to lock in PQC support commitments.

- Build the abstraction layer: start treating crypto as a service, not as code.

- Scale across critical paths: external APIs, authentication systems, certificate infrastructure.
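One common way to rank the “long-lived data first” item is Mosca's inequality: if the years data must stay confidential (x) plus the years your migration will take (y) exceed the years until a cryptographically relevant quantum computer exists (z), that data is already exposed to harvest now, decrypt later. The sketch below applies the rule to a hypothetical asset list; z is a parameter precisely because nobody knows it, and every number in the example is an assumption.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Asset:
    name: str
    shelf_life_years: float  # x: how long the data must stay confidential
    migration_years: float   # y: how long moving this system to PQC will take


def exposure(asset: Asset, years_to_crqc: float) -> float:
    """Mosca's inequality: the asset is at risk when x + y > z.

    Returns x + y - z; positive values mean harvested ciphertext could be
    decrypted while the data still matters.
    """
    return asset.shelf_life_years + asset.migration_years - years_to_crqc


def prioritize(assets: List[Asset], years_to_crqc: float) -> List[tuple]:
    """Rank assets from most to least exposed."""
    ranked = sorted(assets, key=lambda a: exposure(a, years_to_crqc), reverse=True)
    return [(a.name, exposure(a, years_to_crqc)) for a in ranked]


# Hypothetical inventory and an assumed z of 10 years; substitute your own estimates.
assets = [
    Asset("health-records-archive", shelf_life_years=25, migration_years=4),
    Asset("internal-chat", shelf_life_years=1, migration_years=2),
    Asset("payment-tokens", shelf_life_years=7, migration_years=3),
]
for name, score in prioritize(assets, years_to_crqc=10):
    status = "AT RISK" if score > 0 else "ok"
    print(f"{name:24s} {status:8s} margin={score:+.0f}y")
```

Re-running the ranking with several values of z is a cheap way to show which assets stay at the top no matter how the quantum timeline plays out.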

Trust Boosters: External Links Worth Bookmarking

Two external resources belong on any serious PQC reading list:

NIST IR 8547 (Initial Public Draft): Transition to Post-Quantum Cryptography Standards.


This is the authoritative source for NIST's algorithm deprecation schedules, transition planning, and PQC decision-making. Any migration plan that does not reference it is missing essential context.

This guide is aimed at financial sector organizations but applies more broadly, covering governance architecture, vendor evaluation, and practical considerations for hybrid implementation in a regulated setting.

Wrapping Up

“Hybrid and Crypto-Agile Strategies: Migrating Without Breaking Everything” is not a slogan. It is the most reasonable model for organizations that need to move to PQC without dismantling production systems in the process.

The core takeaways:

- Crypto-agility is not optional. PQC algorithms will keep evolving too. Build systems that can change.

- Hybrid deployment is the bridge. Run classical and PQC side by side, and measure performance differences early.

- Architecture patterns matter. Abstract APIs, versioned policies, and centralized policy engines are the difference between a manageable migration and multi-year chaos.

- Vendor accountability starts with procurement. No soft promises: put commitments in the contract.

- Start with inventory. You cannot migrate what you have not mapped.

The quantum timeline is genuinely unpredictable. The harvest now, decrypt later risk is not. Organizations that treat this migration as infrastructure work (planned, managed, and designed for change) will be far ahead of those that wait and see until a crisis forces their hand.

I’m a technology writer with a passion for AI and digital marketing. I create engaging, useful content that makes complex technology concepts accessible. My goal is to make these topics easy to follow, spark curiosity, and encourage participation, and I continue to research innovation and technology. Let’s connect and talk technology!