Last updated on March 13th, 2026 at 03:42 pm

The 2026 Machine Identity Crisis Nobody Saw Coming

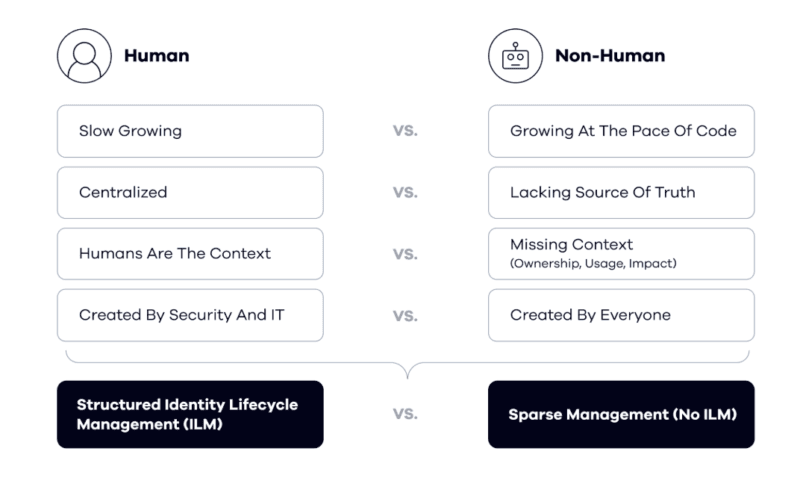

Not long ago, identity security meant that employees used strong passwords and IT could deprovision an account once someone left the firm. Clean, simple, human-shaped. That model has now failed, and it failed faster than many security teams could react.

AI agents changed the rules. They are no longer chatbots sitting idle behind a prompt window. Modern agentic systems act autonomously: they invoke APIs, query databases, trigger cloud executions, spin up sub-agents, and complete multi-step tasks without a human in the loop at each point. Every one of those actions requires a credential. Every one of those credentials is a non-human identity (NHI).

The statistics tell a story that should make any security architect uncomfortable. Machine identities already outnumber human identities by 45:1 to more than 100:1 in enterprise environments, and in practice most organisations manage fewer than half of them. In its research on complex cloud deployments, SailPoint puts the ratio at approximately 82:1. Meanwhile, one of the most frequently quoted claims from the NHI security community is that over 99 percent of agent and NHI credentials are currently uncontrolled.

The thesis is simple: unless you can secure the non-human identities your AI agents depend on, you cannot secure your AI agents. Full stop. The rest of this article unpacks why that is the case, what the risk landscape looks like in 2026, and what a realistic response actually looks like.

What Are Non-Human Identities in the Age of AI Agents?

Before going any further, let us be clear about what exactly an NHI is, because the term covers more ground than most people expect.

A non-human identity is any digital identity assigned to a system, service, or automated process rather than to a human being. In practice that includes:

- API keys and OAuth tokens that applications use to authenticate to third-party services.

- Cloud identities (AWS IAM roles, Azure Managed Identities, GCP service accounts).

- Certificates and encryption keys for workloads and containers.

- Bot accounts that operate within SaaS apps such as Slack, Salesforce, or Jira.

- IoT identities that connect sensors and edge devices to central infrastructure.

- Pipeline credentials in CI/CD systems such as GitHub Actions or Jenkins.

- AI agent identities, the most recent and fastest-growing type.

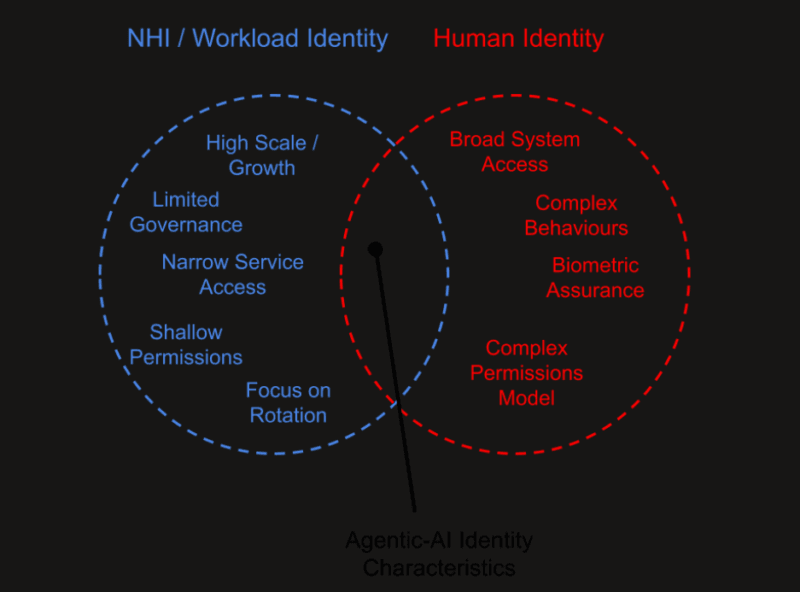

What distinguishes agentic AI from earlier automation is the density and autonomy of NHI usage. A single AI agent handling a moderately complex task may need 10 or more individual NHIs to complete a full session: one to authenticate against a knowledge base, another to write to a CRM, another to invoke a notification service, another to call a financial API. Every connection is a potential attack surface. Left unsecured, each long-lived, over-privileged, or unmonitored credential is an open door.

I saw this firsthand while reviewing a medium-sized SaaS setup that had adopted an agent-based customer support process. The agent was configured to use a single service account with read and write access to the entire customer database, because provisioning scoped credentials for every tool call seemed like too much of a burden at the time. It is the kind of shortcut that works fine until something goes wrong.

Why NHIs Became the New Perimeter – And Why Legacy IAM Is Failing

Conventional identity and access management was built on a very simple rhythm: someone logs in, works, and logs out. Permissions are tied to a role. Access is bounded by the working day. Behaviour is more or less predictable.

AI agents do not move to that rhythm. They are autonomous and scale on demand: they spawn sub-agents as needed, reuse credentials, and run at machine speed with no human supervision at each step. The assumptions built into legacy IAM systems no longer hold.

Identity as the Control Plane for AI Risk

Industry analysts and security researchers increasingly describe NHI governance, the identity layer, as the key control plane for AI risk. The framing is accurate. When an AI agent's identity is compromised, misconfigured, or over-privileged, an attacker does not need to exploit the model; they only need to abuse what the agent is already allowed to do.

Legacy IAM breaks down in agentic environments in a few specific ways:

- No concept of an agent lifecycle: IAM systems were not built to create and retire identities at the pace and volume at which agents are spun up and torn down.

- Static roles in a dynamic world: Role-based permissions work when job requirements are fixed. An agent's tasks change as it moves between tools and contexts, so fixed roles do not fit.

- Human approval gates are too slow: High-risk operations that would normally need approval can occur in milliseconds, faster than most human review.

- Embedded credentials: Developers routinely hard-code API keys and service account tokens in code and config files, bypassing any vault or rotation policy.

According to the World Economic Forum's 2026 report on agentic AI and cybersecurity, one of the most pressing governance failures associated with the rise of AI agents is cryptographic blind spots, i.e. credentials and certificates that no one knows exist. My experience reviewing enterprise deployments bears this out: the credentials that security teams know about tend to be reasonably well controlled. The problem is the ones nobody knows about.

Risk Landscape: Over-Privileged, Invisible, and Long-Lived Machine Identities

The core risk patterns have been crystallised by the OWASP Agentic AI Top 10 and the OWASP NHI Top 10, both published within the past 18 months. The following patterns are consistent across those frameworks and recent vendor research:

Over-Privileged Tokens and Service Accounts

Agents are often granted excessive permissions because scoping them properly is time-consuming and requires a detailed understanding of exactly what the agent does. The result is service accounts with broad administrative privileges that sit idle between tasks, a gift to anyone who manages to compromise them.

Secret Exposure and Credential Sprawl

API keys and tokens end up in source code, build logs, Slack messages, and spreadsheets. A credential leaked outside its intended vault may go undetected for months or even years. Automated scanning tools discover exposed secrets fairly often; however, reactive detection is no substitute for never leaking them in the first place.
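To make the idea of automated scanning concrete, here is a minimal sketch of a pattern-based secret scanner. The rule names, the `scan_text` helper, and the sample input are all hypothetical; the AWS rule relies on the common convention that access key IDs start with `AKIA`, and real scanners ship far larger and more carefully tuned rule sets.

```python
import re

# Illustrative patterns only (assumption): real scanners use many more rules.
SECRET_PATTERNS = {
    # AWS access key IDs conventionally start with "AKIA" plus 16 characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Hypothetical catch-all for assignments such as api_key = "....".
    "generic_assignment": re.compile(
        r"(?i)\b(api[_-]?key|secret|token)\b\s*[:=]\s*['\"]([A-Za-z0-9_\-]{16,})['\"]"
    ),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line that matches a rule."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


# Made-up sample content; neither value is a real credential.
sample = (
    'region = "eu-west-1"\n'
    'api_key = "abcd1234abcd1234abcd"\n'
    "key_id = AKIAABCDEFGHIJKLMNOP\n"
)
findings = scan_text(sample)
```

A scanner like this only catches leaks after they happen, which is why it complements a vault rather than replacing one.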

Shared Credentials Across Agents

Multi-agent teams frequently share a single service account to minimise set-up overhead. If one agent is compromised, every agent using that credential is compromised. Shared credentials also make forensic investigation practically impossible: you cannot attribute an action to a specific agent when the credentials are shared.
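One rough way to surface shared credentials is to group access records by credential and look for more than one agent behind each. The sketch below assumes a simplified log of `(agent_id, credential_id)` pairs; the function name and the sample identifiers are hypothetical, and a real log would need parsing first.

```python
from collections import defaultdict


def find_shared_credentials(access_log):
    """Return {credential_id: [agent_ids]} for credentials used by 2+ agents."""
    agents_by_credential = defaultdict(set)
    for agent_id, credential_id in access_log:
        agents_by_credential[credential_id].add(agent_id)
    return {
        credential_id: sorted(agents)
        for credential_id, agents in agents_by_credential.items()
        if len(agents) > 1
    }


# Hypothetical access records: (agent_id, credential_id)
access_log = [
    ("support-agent", "svc-crm"),
    ("billing-agent", "svc-crm"),
    ("billing-agent", "svc-ledger"),
    ("support-agent", "svc-crm"),
]
shared = find_shared_credentials(access_log)
```

Here `svc-crm` is reported because two different agents used it, while `svc-ledger` is not.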

Long-Lived Credentials With No Expiry

A fundamental principle of zero-trust architecture is short-lived tokens that rotate automatically. In practice, many production deployments still use credentials with no expiry date because rotation seemed too complicated to implement at the time.

I have seen this in almost every cloud environment I have reviewed. Long-lived credentials are not merely a risk; they are a quiet guarantee that, given enough time, a compromise will be large-scale.
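As an illustration of what "short-lived" means mechanically, the sketch below issues an HMAC-signed token with an embedded expiry and rejects it once that time has passed. It is a toy under stated assumptions: the token format, `SIGNING_KEY`, and both function names are invented for this example, and a production system would use an established token standard with keys held in a vault.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Assumption: a demo key. In practice this would be fetched from a vault.
SIGNING_KEY = b"demo-key-do-not-use"


def issue_token(agent_id: str, ttl_seconds: int, now: Optional[float] = None) -> str:
    """Issue a signed token that expires ttl_seconds after `now`."""
    issued_at = time.time() if now is None else now
    payload = json.dumps({"sub": agent_id, "exp": issued_at + ttl_seconds}).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    return (
        base64.urlsafe_b64encode(payload).decode()
        + "."
        + base64.urlsafe_b64encode(signature).decode()
    )


def verify_token(token: str, now: Optional[float] = None) -> Optional[str]:
    """Return the agent ID if the signature is valid and the token is unexpired."""
    current = time.time() if now is None else now
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:
        return None  # malformed token
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return None  # payload was altered or signed with another key
    claims = json.loads(payload)
    if current >= claims["exp"]:
        return None  # expired
    return claims["sub"]


token = issue_token("support-agent", ttl_seconds=300, now=1000.0)
```

With a five-minute lifetime, a stolen token is useful to an attacker for minutes rather than months, which is the practical difference between this and a credential with no expiry.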

Cascading Failures and Lateral Movement Without a Human Attacker

The most disturbing pattern in the OWASP NHI Top 10 is the rogue agent pattern: a compromised agent credential allows lateral movement across cloud and SaaS environments, while high-volume automated activity hides the intrusion in logs designed to monitor human behaviour. No phishing email. No person at a keyboard attacking. Just a cog in the machine doing exactly what its credentials permit, in the wrong hands.

Important stat: By some estimates, in a complex multi-cloud setup a single AI agent may use 10 or more individual NHIs across SaaS, cloud, and internal systems. Without a complete map of those identities, incident response becomes nearly impossible.

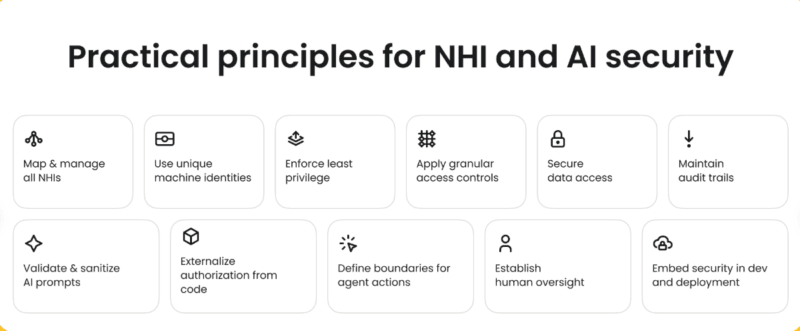

Three Pillars of Securing Non-Human Identities for AI Agents

Across the research, vendor guidance, and framework documentation prevailing in 2026, three pillars consistently appear as what organisations need in order to get NHI security right. These are not optional extras; they are the minimum foundation.

Pillar 1 – Visibility and Inventory: You Cannot Protect What You Cannot See

The first and most fundamental step is knowing which NHIs exist. This may sound obvious, but the vast majority of organisations are largely blind. Shadow agents, AI automations deployed by individual teams without central oversight, are common. Forgotten service accounts created long ago for deprecated integrations still hold fully valid credentials. Certificates expire unnoticed.

Continuous scanning of cloud environments, SaaS platforms, CI/CD pipelines, and agent frameworks is now a baseline expectation. Vendors such as Astrix, Aembit, and SailPoint have developed NHI-specific discovery tooling that uses machine learning to surface unmanaged credentials and flag abnormal access patterns. The goal is a living inventory: one that updates automatically and assigns an explicit owner to each NHI.

For a systematic approach to building this foundation, the Discovery and Inventory: Gaining Visibility into AI Agents deep-dive details the tooling, processes, and governance models that make up continuous NHI visibility in production settings.
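A living inventory can be pictured as a set of records that each carry an owner and a last-used timestamp, reviewed on a schedule. The sketch below is a minimal illustration of that idea; the `NHIRecord` fields, the `review_inventory` helper, the 90-day staleness threshold, and the sample identities are all assumptions made for the example, not a vendor schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class NHIRecord:
    identity_id: str
    kind: str              # e.g. "api_key", "service_account", "agent"
    owner: Optional[str]   # accountable person or team; None means unowned
    last_used: datetime


def review_inventory(records, now, stale_after=timedelta(days=90)):
    """Group identity IDs by the hygiene problem they exhibit."""
    report = {"unowned": [], "stale": []}
    for record in records:
        if not record.owner:
            report["unowned"].append(record.identity_id)
        if now - record.last_used > stale_after:
            report["stale"].append(record.identity_id)
    return report


# Hypothetical inventory entries.
now = datetime(2026, 3, 1)
records = [
    NHIRecord("svc-crm-writer", "service_account", "support-platform", datetime(2026, 2, 27)),
    NHIRecord("legacy-export-key", "api_key", None, datetime(2025, 6, 1)),
    NHIRecord("notify-bot", "agent", None, datetime(2026, 2, 20)),
]
report = review_inventory(records, now)
```

In this sample, `legacy-export-key` shows up as both unowned and stale, which is the typical signature of a forgotten integration.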

Pillar 2 – Least Privilege and Lifecycle Management

Every AI agent must run with the minimum permissions needed to perform its particular task, nothing more. Credentials must be time-limited and rotated, much like session cookies, and a single credential must never be shared across a group of agents.

In practice this means: scoping identities to agent-role needs rather than team or system needs; enforcing finite expiry on credentials; requiring human approval or step-up controls for high-risk operations such as bulk data access or infrastructure modification; and building automated rotation into the deployment pipeline from day one rather than retrofitting it.

The Least Privilege for AI Agents guide goes further into scoping permissions correctly, designing time-bound credentials, and deploying approval gates without creating a bottleneck for legitimate agent access.
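The checks described above (scope, expiry, and an approval gate for high-risk operations) can be sketched as a single authorisation function. Everything named here is hypothetical: the `ScopedCredential` type, the action names, and the contents of `HIGH_RISK_ACTIONS` are placeholders that a real deployment would define from its own policy.

```python
from dataclasses import dataclass

# Assumption: an example set of operations that need human sign-off.
HIGH_RISK_ACTIONS = {"bulk_export", "modify_infrastructure"}


@dataclass(frozen=True)
class ScopedCredential:
    agent_role: str
    allowed_actions: frozenset
    expires_at: float  # Unix timestamp


def authorize(credential, action, now, human_approved=False):
    """Return (allowed, reason) for a single requested action."""
    if now >= credential.expires_at:
        return False, "credential expired"
    if action not in credential.allowed_actions:
        return False, "action outside credential scope"
    if action in HIGH_RISK_ACTIONS and not human_approved:
        return False, "human approval required"
    return True, "ok"


cred = ScopedCredential(
    agent_role="support-agent",
    allowed_actions=frozenset({"read_ticket", "bulk_export"}),
    expires_at=2000.0,
)
```

For example, `authorize(cred, "read_ticket", now=1000.0)` is allowed, while `authorize(cred, "bulk_export", now=1000.0)` is refused until a human approves it. Returning a reason alongside the decision keeps the denial useful for audit trails.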

Pillar 3 – Monitoring, Governance, and Identity Threat Detection

Least privilege and inventory make the situation much safer, though not risk-free. Monitoring closes the gap. Identity Threat Detection and Response (ITDR), extended to cover machine identities, means baselining normal agent behaviour, alerting on anomalous behaviour, and keeping detailed audit trails that tie each agent action to a particular NHI and, where feasible, a human owner.

The challenge is volume. Agents generate far more activity than people do, and traditional SIEM tools were not designed to analyse and contextualise activity at that volume. New tooling, such as AI-assisted anomaly detection, is starting to fill this need, but the governance layer (ownership, review cadence, escalation policies) must still be carefully designed by humans.

A framework-based model for ongoing NHI governance, including alignment to NIST CSF, ISO 27001 controls, and OWASP NHI Top 10 remediation patterns, can be found in the Governance for Agentic Identities deep-dive.
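Baselining can be as simple as comparing current activity with the historical mean and standard deviation for one identity. The sketch below flags a request count that sits well above the baseline; the `is_anomalous` helper, the threshold of three standard deviations, and the sample counts are assumptions for illustration, and production ITDR tooling uses much richer signals than a single counter.

```python
from statistics import mean, pstdev


def is_anomalous(baseline_counts, observed, threshold=3.0):
    """Flag `observed` if it exceeds the baseline mean by more than
    `threshold` standard deviations."""
    mu = mean(baseline_counts)
    sigma = pstdev(baseline_counts)
    if sigma == 0:
        # A perfectly flat baseline: any increase counts as anomalous.
        return observed > mu
    return (observed - mu) / sigma > threshold


# Hypothetical hourly API call counts for one agent identity.
baseline = [100, 110, 95, 105, 90]
normal_hour = is_anomalous(baseline, 105)
spike_hour = is_anomalous(baseline, 400)
```

A check like this is only meaningful per identity, which is one more reason each agent needs its own credential.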

How NHI Security Fits Into Zero-Trust and AI Governance

Zero trust as an architecture is nothing new; however, applying it to non-human identities is still in its infancy. The core zero-trust principles map directly onto the NHI problem:

- Verify explicitly: Every NHI request must be authenticated and authorised regardless of network location. Implicit trust based on IP ranges or internal network status is not adequate.

- Least-privilege access: As described above, scope, time-bound, and rotate. Apply this to all credentials, not only the obvious ones.

- Assume breach: Design NHI governance on the assumption that credentials will be compromised. The goal is to limit the blast radius, not to eliminate every form of compromise.

Microsoft's guidance on managing non-human identities explicitly frames NHIs as a zero-trust issue, emphasising centralised IAM integration, short-lived credentials, and continuous monitoring rather than the ad hoc approach most organisations currently take.

Regulations Are Catching Up

Regulations are becoming more explicit about machine identity hygiene. US Executive Order 14028 and NSM-10 both contain requirements on cryptographic inventory and automated key management that involve non-human identities. The transparency and auditability requirements of the EU AI Act implicitly demand knowing and documenting what AI agents are doing, which is only possible when there is a clearly identifiable identity layer beneath them.

Post-quantum cryptography (PQC) is another regulatory pressure point. All certificates and keys, not only those used by humans, will need to move to NIST-standardised post-quantum algorithms. Organisations that do not maintain a real-time cryptographic inventory will struggle to prove compliance or even to migrate in time.

My Take on the IAM + PAM Stack

I have worked with legacy PAM platforms as well as newer NHI-specific tooling, and my honest assessment is that they address different requirements that are moving toward convergence. Privileged human accounts and high-value service accounts still need PAM. NHI-specific platforms add the discovery, agent-specific governance, and machine-speed automation that legacy PAM was not built to support.

The IAM + PAM for Non-Human Identities guide discusses how to combine these capabilities into a coherent stack, including tool selection, architectural patterns, and the organisational model needed to support it.

Where to Go Deeper – My Recommended External Reading

Two external resources are particularly worth noting for anyone building or reviewing NHI security programmes.

The World Economic Forum's article on non-human identities as the new cybersecurity frontier of agentic AI offers excellent high-level framing of how agentic AI is driving NHI growth, how cryptographic blind spots are forming, and which policy-level responses are starting to emerge. It is written for a general audience and does not require a deep technical background.

For something more technical, the OWASP NHI Top 10 is the community-driven framework that serves as a reference baseline for NHI risk categories. It is worth reading alongside the Governance for Agentic Identities guide, as it provides a good basis for building a remediation roadmap.

The Roadmap: Deep Dives Into Every Layer of NHI Security

This article has outlined the problem and the three pillars at a strategic level. To be truly actionable, each pillar and the knowledge it rests on deserves its own deep-dive. The individual child articles are organised as follows:

NHI Fundamentals and Types

Whether you are new to the subject or briefing a non-technical stakeholder, the fundamentals article covers each type of NHI: how it is created, how it authenticates, and what kind of governance it requires. It is the place to start before anything else.

Discovery and Inventory: Gaining Visibility into AI Agents

Continuous discovery is the major gap where most programmes fall short. The guide covers tooling options, scanning strategies for cloud and SaaS systems, how to find shadow agents, and how to build an inventory that stays current. The Discovery and Inventory: Gaining Visibility into AI Agents article is the logical next read after this piece.

Least Privilege for AI Agents

Scoping permissions for an agent is harder than for a human because the boundaries of an agent's tasks are less predictable. The Least Privilege for AI Agents guide walks through the design patterns, including just-in-time access, time-bound credentials, and human-in-the-loop approval gates for high-risk operations.

IAM + PAM for Non-Human Identities

The IAM + PAM for Non-Human Identities guide addresses the technology stack: which platforms handle NHI discovery, governance, and vaulting; how legacy PAM compares with newer NHI-specific tooling; and what an integrated architecture actually looks like in a mid-to-large enterprise.

Governance for Agentic Identities

Governance is where the human and technical layers meet. The Governance for Agentic Identities article covers ownership models, review cadences, NIST and ISO alignment, OWASP NHI remediation mapping, and how to build a governance programme that scales with the agent estate.

Secrets Management and Cryptographic Hygiene: The Unglamorous Work That Actually Matters

Secrets management is a category of NHI security work that rarely earns a featured slot on conference agendas, yet it accounts for a disproportionate share of real-world incidents. For practitioners, the term covers everything from where API keys are stored, to how certificates are rotated, to whether your build pipeline is silently printing tokens to standard output.

In my experience, the gap between an organisation's secrets management policy and its secrets management reality is often large. Policy says every secret lives in a vault. Reality shows secrets in environment variables, in .env files committed to private repositories that were not as secure as they should have been, and in Slack messages that seemed easier than setting up a vault at the time.

What Good Secrets Hygiene Looks Like

The foundation is centralised secrets storage in a dedicated vault (HashiCorp Vault, AWS Secrets Manager, Azure Key Vault, or an equivalent). On top of that, the main practices are:

- Automated rotation on a schedule or in response to an event, rather than manual rotation when somebody remembers.

- Expiry enforcement: credentials that are not renewed are not merely flagged, they are revoked.

- Audit logging of every secret access event, tied to the individual NHI or agent that made the request.

- No secret sharing between agents: each agent gets its own scoped credential.

- Automated scanning of code repositories, build artifacts, and container images for accidentally exposed secrets.

Automated key rotation sounds easy. In practice, it requires every system that consumes a secret to handle a credential change without downtime, and that needs to be tested in advance, not discovered in production.

Post-Quantum Cryptography: A Longer-Horizon but Urgent Preparation

NIST finalised its first post-quantum cryptography standards in 2024. Migration is a long process, and planning the migration of machine identity credentials takes longer than most teams expect, because it requires knowing which cryptographic algorithms every certificate and key in the environment currently uses. Without a live inventory, even that audit can take months.

Even though migration may be two or three years away, organisations deploying AI agents in 2026 need to be recording the cryptographic posture of their NHI estate now. The agents being provisioned today will very likely still be running when a mandate to migrate to PQC arrives.
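One way to start the cryptographic inventory that post-quantum planning depends on is to record the algorithm family behind each machine identity and sort the entries into coarse buckets. The sketch below is a planning aid under stated assumptions: the inventory format, the `classify` and `migration_backlog` helpers, and the sample identities are hypothetical. The family lists reflect that RSA and elliptic-curve schemes are breakable by Shor's algorithm on a large enough quantum computer, and that ML-KEM, ML-DSA, and SLH-DSA are the algorithms NIST standardised in FIPS 203, 204, and 205.

```python
# Coarse classification for planning purposes only (assumption).
QUANTUM_VULNERABLE = {"RSA", "DSA", "ECDSA", "ECDH", "Ed25519"}
POST_QUANTUM = {"ML-KEM", "ML-DSA", "SLH-DSA"}


def classify(family: str) -> str:
    """Bucket an algorithm family as 'post-quantum', 'migrate', or 'review'."""
    if family in POST_QUANTUM:
        return "post-quantum"
    if family in QUANTUM_VULNERABLE:
        return "migrate"
    return "review"  # symmetric or unrecognised: needs a human look


def migration_backlog(inventory):
    """Return identity IDs whose keys use a quantum-vulnerable family."""
    return [
        item["identity_id"]
        for item in inventory
        if classify(item["family"]) == "migrate"
    ]


# Hypothetical inventory entries.
inventory = [
    {"identity_id": "svc-crm-writer", "family": "RSA"},
    {"identity_id": "edge-gateway", "family": "ECDSA"},
    {"identity_id": "pilot-agent", "family": "ML-DSA"},
    {"identity_id": "cache-encryptor", "family": "AES"},
]
backlog = migration_backlog(inventory)
```

Even a rough backlog like this turns "we will migrate eventually" into a list of named identities with owners who can be asked for a date.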

What the 2026 NHI Challenge Means in Practice

The machine identity crisis is not a future problem. The proliferation of AI agents has already happened, and their credentials are already moving through cloud environments, SaaS applications, and internal systems, mostly without the oversight, control, and hygiene that human identity programmes take for granted.

Reviewing these environments, I have seen a pattern: the teams that are ahead of the problem are not necessarily the ones with the most advanced tooling. They are the ones who treated NHI governance as a first-class security concern from the start of their AI agent deployments, rather than retrofitting it. They ran discovery before going live. They scoped credentials to agent roles. They built rotation into the pipeline.

The three-pillar model of visibility, least privilege, and monitoring is not conceptually complicated. The difficulty is implementing it at the speed and scale that agentic AI demands. That is why each pillar gets dedicated attention in the child articles.

To security architects, platform engineers, and anyone responsible for AI agent deployments: now is the time to start the conversation about securing non-human identities for AI agents, because mismanaged agent identities will become an urgent problem very quickly. The credentials are as good as gold. The question is whether you can see them.

I’m a technology writer with a passion for AI and digital marketing. I create engaging and useful content that bridges the gap between complex technology concepts and the people who need to use them. My writing aims to make learning easy and spark curiosity, and to encourage participation. I continue to research innovation and technology. Let’s connect and talk technology!