
Why the AI Supply Chain Is Now a Security Problem Everyone Has to Care About

Not so long ago, "supply chain security" was largely a conversation among infrastructure teams: something you heard about after a major compromise and then put back in the closet. Then came the SolarWinds incident, Log4Shell, and the gradual realization that everything we build sits on top of parts we did not write and do not always fully understand.

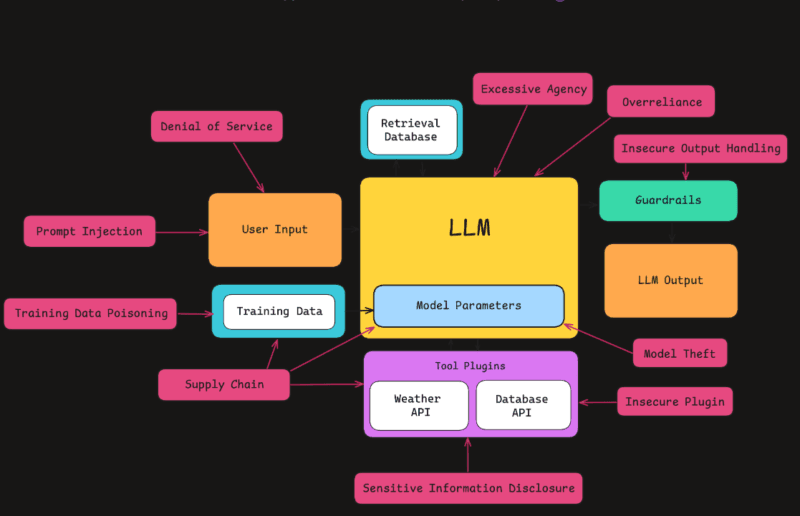

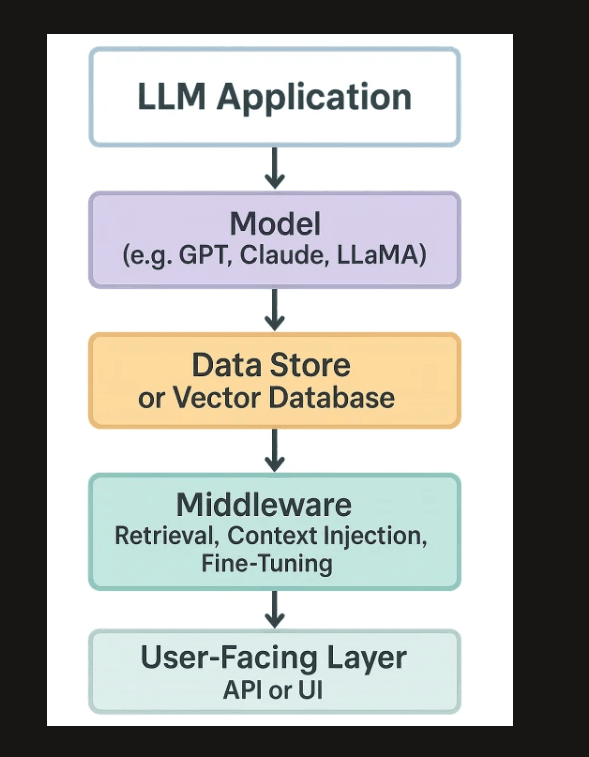

Now that large language models are embedded in products, workflows, and enterprise systems, the same issue shows up in a different form. The LLM supply chain is no longer just a matter of code dependencies. It includes pre-trained model weights obtained from open-source or third-party vendors, open-source datasets, fine-tuning pipelines, an orchestration layer, and an expanding ecosystem of open-source plugins that integrate with live tools, databases, and APIs.

Over the past year I have worked with a variety of LLM platforms, both internal tooling and customer-facing applications, and one thing came up repeatedly: the weakest element is often not the model itself. It is usually something around it. Poorly vetted permissions on a plugin. A dataset that wasn't audited. A sensitive model checkpoint pulled from a public source without any verification process.

This article breaks down LLM supply chain security for a mixed readership: a developer building with LLMs, a security professional assessing risk, or simply someone who wants to know what AI supply chain risk actually entails in practice. The idea is simple: describe what has already matured, what is still taking shape, and where the field is clearly heading.

Understanding the Three Layers: Models, Data, and Plugins

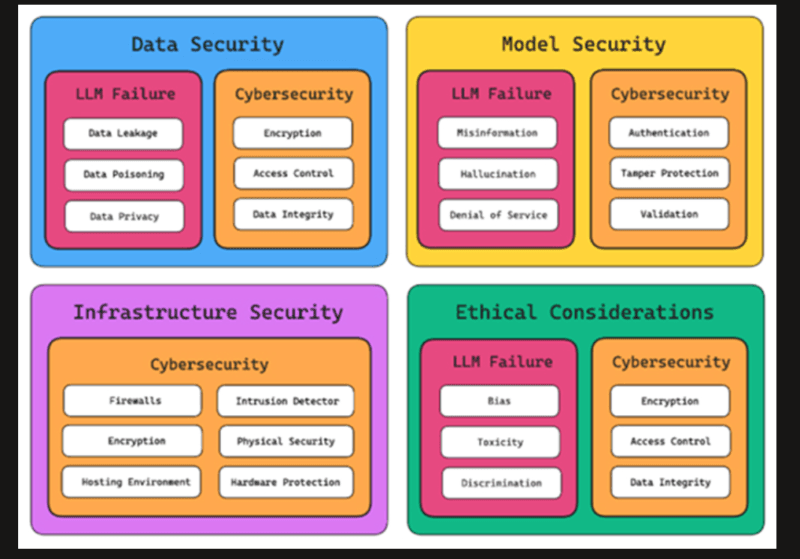

It is better to map the terrain before getting into what is and is not working. LLM supply chain security involves three layers that are distinct yet interconnected, and a vulnerability in any one of them can compromise the entire stack.

The Model Layer

This is the foundation. Most organizations do not train LLMs from scratch. They typically obtain their models from providers such as OpenAI, Anthropic, Mistral, Meta (via Llama), or Hugging Face. All of these raise questions of trust:

- Who trained this model?

- What data was it trained on?

- Was the model checkpoint altered after release?

- Is what you are downloading actually what the provider released?

These are not theoretical concerns. There are hundreds of thousands of models on Hugging Face alone, and not all of them are meaningfully vetted before being published, as described in LLM Supply Chain 101. Malicious actors have been observed publishing models that contain backdoors or that are subtly tuned to behave in a certain way under specific conditions, a technique known as a trojan attack.

The practice developing here is Model Provenance and the Model SBOM: maintaining a software bill of materials for AI models in the same way that traditional software teams track package dependencies. An AI-SBOM records training data lineage, fine-tuning predecessors, hashes of model weights, and the third-party components used. Adoption is still at an early stage, but it is spreading rapidly.

The Data Layer

LLMs are built on data, which makes data a high-value attack surface. The risks here fall into two groups: pre-training data risks and fine-tuning data risks.

Pre-training datasets such as Common Crawl, The Pile, and others are massive and typically not audited in full. Inserting even a small amount of contaminated data into these datasets, meaning data crafted to make the model behave in a particular, attacker-intended way, is an established attack pattern that OWASP explicitly mentions in LLM03:2025.

The more immediate issue for most organizations is fine-tuning. When a firm adapts a base model to its own proprietary documents, that data is often not examined as carefully as it should be. When the fine-tuning data is compromised in some way, either through the addition of adversarial examples or through a broken data pipeline, the resulting model can produce subtly dangerous outputs that are hard to identify without red-teaming.

For every developer or auditor of LLM systems, the topic of Securing Training and Fine-Tuning Data Against Poisoning is no longer optional. It is one of the most clearly emerging areas of practice in the field.
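
One way to make the "added adversarial example or broken pipeline" scenario concrete is to fingerprint every record in an audited fine-tuning snapshot and compare later versions of the dataset against it. The sketch below is only an illustration under stated assumptions: records are plain dictionaries (for example, parsed from JSONL), and the function names and sample records are hypothetical rather than taken from any specific tool. It flags records that were never reviewed; it does not judge whether they are malicious.

```python
import hashlib
import json


def record_fingerprint(record: dict) -> str:
    """Hash a record in canonical JSON form so key order does not matter."""
    canonical = json.dumps(record, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_manifest(records: list[dict]) -> set[str]:
    """Fingerprints of every record in an audited dataset snapshot."""
    return {record_fingerprint(r) for r in records}


def diff_against_manifest(records: list[dict], audited: set[str]) -> dict:
    """List records absent from the audited snapshot, and audited ones now missing."""
    current = {record_fingerprint(r): r for r in records}
    unreviewed = [r for fp, r in current.items() if fp not in audited]
    missing = sorted(audited - set(current))
    return {"unreviewed": unreviewed, "missing_fingerprints": missing}


# Hypothetical example data: one audited record, then one record added later.
audited_records = [
    {"prompt": "How do I reset my password?", "completion": "Use the self-service portal."},
]
manifest = build_manifest(audited_records)

incoming = audited_records + [
    {"prompt": "How do I reset my password?", "completion": "Email it to ops@example.test."},
]
report = diff_against_manifest(incoming, manifest)
print(len(report["unreviewed"]), "record(s) need review before fine-tuning")
```

A check like this fits naturally as a gate in the data pipeline: anything in `unreviewed` goes to a human or an automated screening step before a training job is allowed to start.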

The Plugin and Tooling Layer

This is where things get particularly complicated in 2025 and 2026. LLMs increasingly act rather than just answer questions. Through plugins and tool interfaces, they call APIs, run code, search the web, send email, update records, and call out to external services.

Every plugin is effectively a new trust boundary. A vulnerability in a plugin that reads and writes to a database, sends messages, or communicates with authenticated services can lead to privilege escalation, data leakage, or prompt injection if the plugin is not carefully designed.

In most advanced agentic AI deployments, compromises do not stem from flaws in the underlying model. They stem from the same software supply chain failures seen elsewhere, because AI applications are still built on top of the same collection of programming languages, CI/CD pipelines, and open-source dependencies (Straiker).

What’s Already in Place: The Mature Foundations

OWASP Top 10 for LLM Applications (2025 Update)

The OWASP Top 10 for LLM Applications is a community project aimed at identifying the most significant vulnerabilities in LLM applications and other systems based on generative AI, and at providing mitigations for them. The idea is to inform developers, architects, and organizations about the potential dangers of deploying such models.

The 2025 update refined some of the entries that bear directly on the supply chain. LLM03:2025, Supply Chain Vulnerabilities, specifically addresses vulnerabilities in third-party model components, training data, and the plugin ecosystem. For a breakdown, Plugin and Tooling Security: LLM03 Supply Chain Risks in Practice walks through the entry with real-life examples and mitigation approaches.

Some practical lessons from this OWASP category:

- Verify the hash before deploying a model.

- Treat every plugin as an unknown third party.

- Apply the principle of least privilege to all tool-calling interfaces.

- Audit plugin permissions at the API level, not only through their descriptions.
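
The first item in the list above, checking a hash before deployment, can be sketched with nothing but the Python standard library. This is a minimal illustration, assuming the provider publishes a SHA-256 digest for the weights file through a channel you trust. The function names are hypothetical, and a hash check only proves the file matches the published digest, not that the published model is itself safe.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Stream the file in chunks so large weight files do not have to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model_file(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file's digest matches the published one."""
    actual = sha256_of_file(path)
    # Normalise case and whitespace, then compare in constant time.
    return hmac.compare_digest(actual, expected_sha256.strip().lower())
```

In a deployment script, a failed check would typically abort the rollout rather than log a warning, since a mismatch means the artifact is not the one that was reviewed.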

NIST AI Risk Management Framework

NIST's AI RMF, which had reached a reasonably mature state by the end of 2024 and was supplemented with guidance specific to generative AI, offers a governance framework for thinking about AI risk at scale. It splits risk management into four functions (Govern, Map, Measure, Manage) and gives teams a way to connect technical supply chain controls to organizational accountability.

In my experience, companies that had already implemented the NIST RMF handled the AI wave much better. They had the vocabulary, the stakeholder alignment, and the documentation culture that LLM supply chain security requires. Teams new to it face a steeper curve.

For free learning material, the NIST AI RMF Generative AI Profile, covered in the OpenLoop research PDF, is among the more accessible open documents on applying the framework to LLMs specifically, and the profile is publicly available.

Snyk and Dependency Scanning for AI

Traditional software composition analysis (SCA) tools such as Snyk have started to expand their coverage to model registries and AI-specific package risks. Snyk's free learning resource on LLM supply chain vulnerabilities covers how known CVEs in Python packages, CUDA libraries, and AI frameworks like PyTorch or Hugging Face Transformers cascade into risk for teams that are focused only on the behavior of the AI system.

This is a practical area that development teams can act on immediately, because it does not require a complete rethink of existing security processes, only a layer on top of existing tooling.

What’s Just Beginning: The Emerging Frontier

Model SBOMs and Provenance Standards

The principle of a Software Bill of Materials (SBOM) is well established in conventional software. For AI models, the counterpart is still being established. Initiatives around Model Provenance and the "Model SBOM" are now emerging in the industry, such as Hugging Face's model card conventions and new interoperability discussions at organizations like CISA or the AI Safety Institute.

An AI-SBOM goes beyond a list of dependencies. Ideally it captures:

- Training data sources, along with audits of known quality and bias issues.

- Fine-tuning data lineage and components.

- A model weight integrity hash for each checkpoint.

- The system prompt version and the inference configuration version.

- Pinned registry versions of the deployed plugins.

This remains more of an aspiration than a reality in most companies, but the tooling is starting to catch up. Frameworks such as Sigstore (originally built for code signing) are being extended to model signing, so that end users can cryptographically verify that a model checkpoint has not been modified.
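
To make the AI-SBOM fields listed above more tangible, here is a minimal sketch of how they might be held in a single record. The schema is an assumption made for illustration and does not follow CycloneDX, SPDX, or any other published SBOM format. Every field name and example value is hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class AISBOM:
    """Illustrative AI-SBOM record; field names are hypothetical, not a standard."""
    model_name: str
    model_version: str
    training_data_sources: list[str]
    fine_tuning_lineage: list[str]
    checkpoint_sha256: dict[str, str]  # checkpoint file name -> SHA-256 digest
    system_prompt_version: str
    inference_config_version: str
    plugin_versions: dict[str, str] = field(default_factory=dict)  # plugin -> pinned version

    def missing_fields(self) -> list[str]:
        """Names of fields that are still empty and need to be documented."""
        return [name for name, value in asdict(self).items() if not value]

    def to_json(self) -> str:
        """Serialise deterministically so the record can be diffed or hashed."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder values only; none of these refer to a real model or dataset.
sbom = AISBOM(
    model_name="support-assistant",
    model_version="2025.03",
    training_data_sources=["vendor base model (sources per model card)"],
    fine_tuning_lineage=["support-tickets-snapshot-01"],
    checkpoint_sha256={"model.safetensors": "0" * 64},
    system_prompt_version="v7",
    inference_config_version="v3",
)
print(sbom.missing_fields())
```

Even a record this small is useful in review: an empty `plugin_versions` entry is an immediate prompt to ask which plugins are deployed and whether their versions are pinned.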

Runtime Plugin Monitoring and Agentic Security

The most dynamic part of LLM supply chain risk right now is arguably the plugin ecosystem. As organizations move away from chatbots confined to single-turn exchanges and toward agentic systems, the number of plugins grows, and so do the attack paths.

The main risk that OWASP highlights is indirect prompt injection through a data plugin: an attacker who can manipulate the content a plugin retrieves (such as a web search result or a document in a connected file store) can inject instructions into the LLM's context through that content. The model may then follow those instructions, potentially with a high level of privilege.

Emerging mitigations here include:

- Tool call sandboxing: Isolating plugin execution environments from the main system's resources.

- Runtime permission scoping: Verifying not only that a given permission has been granted, but also that the task at hand warrants using it.

- Output filtering: Post-processing plugin output before it is fed back into the model's context.

- Anomaly detection on tool use patterns: Flagging requests that involve unusual API call sequences as potentially injected.
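
The permission scoping idea in the list above can be sketched as a two-part check: is the permission granted to the plugin at all, and does the current task actually justify using it? The example below is a simplified illustration. The plugin names, action names, task types, and both policy tables are hypothetical, and a real system would load them from configuration and enforce them at the gateway rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    plugin: str
    action: str


# Hypothetical policy: permissions each plugin has been granted overall.
GRANTED = {
    "crm": {"read_record", "update_record"},
    "mailer": {"send_email"},
}

# Hypothetical policy: the (plugin, action) pairs each task type legitimately needs.
TASK_SCOPE = {
    "summarize_account": {("crm", "read_record")},
    "send_followup": {("crm", "read_record"), ("mailer", "send_email")},
}


def is_allowed(call: ToolCall, task: str) -> bool:
    """Allow a call only if it is both granted and in scope for the current task."""
    granted = call.action in GRANTED.get(call.plugin, set())
    in_scope = (call.plugin, call.action) in TASK_SCOPE.get(task, set())
    return granted and in_scope


# An injected instruction asks the model to send email during a summarization task.
print(is_allowed(ToolCall("mailer", "send_email"), "summarize_account"))
```

The point of the second check is that a granted permission is not a blanket license: the mailer plugin is allowed to send email in general, but not while the agent is supposed to be summarizing an account. Unknown tasks and unknown plugins fall through to a denial by default.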

Plugin and Tooling Security: LLM03 Supply Chain Risks in Practice discusses how to implement some of these controls within existing API gateway architectures.

Monitoring, Version Control, and Incident Response

What happens after the model is deployed is the part that is usually neglected. The domain of Monitoring, Version Control, and Incident Response to LLM Supply Chains barely existed as a formal discipline two years ago, and it is now growing very quickly.

The major practices taking shape include:

Model version pinning: Just as software teams pin dependency versions, AI teams are learning to pin model versions to a known-good state and to treat updates as deliberate deployments, not silent background changes.

Behavioral drift: API-accessible models can change underneath you. I experienced this with one third-party provider, where the distribution of model outputs shifted within two weeks without any entry in the changelog. The primary defense is behavioral regression testing run against a fixed evaluation set.

LLM-specific incident response playbooks: What does a supply chain compromise in an LLM system look like? It's not always obvious. Signals can include an abnormal spike in specific categories of output, unexpected calls to a plugin, or sudden refusal behavior. Teams are beginning to codify these into runbooks.

Audit logging at the prompt and response level: In regulated industries in particular, the ability to reconstruct what a model received, what it called, and what it returned is an emerging compliance requirement.
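
The behavioral regression testing mentioned above can start very small: replay a fixed set of prompts and measure how many answers differ from a recorded baseline. The sketch below is an illustration under strong assumptions. It compares outputs by exact string match, which only makes sense with deterministic decoding settings, and a real harness would more likely score answers with task-specific checks. The `model` argument is any callable you supply that wraps your provider's client. No provider API is assumed here.

```python
from typing import Callable


def drift_report(
    model: Callable[[str], str],
    baseline: dict[str, str],
    max_changed_fraction: float = 0.1,
) -> dict:
    """Replay baseline prompts and report which outputs no longer match."""
    changed = [
        prompt
        for prompt, expected in baseline.items()
        if model(prompt).strip() != expected.strip()
    ]
    fraction = len(changed) / len(baseline) if baseline else 0.0
    return {
        "changed_prompts": changed,
        "changed_fraction": fraction,
        "passed": fraction <= max_changed_fraction,
    }


# Hypothetical baseline recorded when the model version was last approved.
baseline = {
    "Classify: 'refund not received'": "billing",
    "Classify: 'app crashes on login'": "technical",
}


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call; simulates one changed answer."""
    return "billing" if "refund" in prompt else "account"


print(drift_report(stub_model, baseline))
```

Run on a schedule against the production endpoint, a report like this turns a silent provider-side change into an alert, which is exactly the situation a changelog will not always catch.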

The Horizon: What Is Coming Next

Post-Quantum Cryptography and Model Signing

This is an easy one to overlook in conversations about LLM security, but it matters. The integrity of model weight signing, the cryptographic scheme that lets you check whether a model is the one shipped by its source, depends on the security of the underlying cryptographic algorithms.

As quantum computing power increases, the signature algorithms currently in service (RSA, ECDSA) become vulnerable to attack. This is directly relevant here, as covered in the Post Quantum Cryptography Migration – Step-by-Step Guide for 2026: migrating model signing to NIST-approved post-quantum algorithms, such as CRYSTALS-Dilithium (standardized as ML-DSA) or SPHINCS+ (standardized as SLH-DSA), should be on the roadmap of any organization that takes long-term integrity in AI supply chains seriously.

It may sound futuristic, yet migration planning has to begin now: the window between signing models today and the quantum threat becoming practical is shorter than it seems.

Federated and Private Training with Verifiable Provenance

Privacy-preserving machine learning (differential privacy, federated learning, homomorphic encryption) is leaving the research laboratories. The direction the field is moving in is a combination of these techniques with cryptographic proofs of training integrity. In essence, a model provider might one day be able to offer a zero-knowledge proof that a model was trained on a particular, audited dataset, without disclosing the dataset itself.

This would transform the trust model behind the data layer of LLM supply chains. It remains mostly experimental, yet a number of research organizations are taking it very seriously, and its overlap with regulation (GDPR, the EU AI Act) is generating real business motivation to make it happen.

Regulatory Pressure Creating Minimum Standards

Supply chain concerns map almost directly onto the EU AI Act's requirements for high-risk AI systems, which include documentation of training data, model cards, and continuous monitoring, among other things. In the United States, the Biden administration's executive order on AI and the NIST work that followed set baseline expectations for AI. Even though the order itself was rescinded in January 2025, those expectations are unlikely to be fully undone by the present administration, particularly where national security concerns are involved.

The practical impact: organizations investing today in AI-SBOM efforts, model provenance tracking, and plugin security controls are building toward regulatory compliance, not just security hygiene. The two are increasingly becoming the same thing.

How to Actually Use This: A Practical Starting Point

For a mixed audience, this is most useful when translated into role-specific starting points.

If you are a developer building on LLMs: Begin with the OWASP LLM Top 10 2025 document. It is free, well maintained, and can be put into practice directly. Focus on LLM03 (Supply Chain) and LLM01 (Prompt Injection). Verify the hash of any model pulled from a public registry. Treat every plugin you add as a third-party dependency that requires security review.

If you are on a security or AppSec team: Map LLM usage in your organization to a threat model. What data does each model touch? Which plugins have which permissions? Begin with an inventory, because you cannot protect what you have not discovered. Add behavioral monitoring afterwards.

If you lead a business or product: The key question to ask your AI vendors is: what is your model update and change management policy? If they cannot answer clearly, that is a red flag. Push for contractual commitments on change notification and incident response.

If you are learning the space: The freely available materials used in researching this piece (the official OWASP documentation at genai.owasp.org, Snyk's LLM supply chain lesson, and NIST's free AI RMF documentation) provide a no-cost foundation. Pair them with LLM Supply Chain 101 before moving on to the more technical material.

Monitoring and Version Control: The Operational Gap Most Teams Miss

Security teams spend considerable time on pre-deployment diligence (model selection, data audits, plugin vetting) and then, not infrequently, hand the system over to operations teams who were not part of those discussions. The result is a visibility gap in production.

This is dealt with directly in Monitoring, Version Control, and Incident Response to LLM Supply Chains. The essence of the advice: apply the same operational rigor to an LLM as to any other production service, including basic versioning, change management, and change detection tooling.

Something I observed when reviewing production LLM setups across several teams: most had logging at the application tier, but almost none ran behavioral regression testing against a fixed evaluation harness. That is like operating a web application without uptime monitoring. You will learn that something has gone wrong only when it is too late.

The field is converging on a set of practices similar to DevSecOps in traditional software: continuous behavioral testing, automated anomaly alerting, and a documented rollback process for model versions. None of these are exotic. They are simply being applied to a new type of component.
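
A documented rollback process for model versions can be as modest as a record of which version is pinned, a rule that promotions require a passing regression run, and a way to step back. The sketch below is an illustration only: the class name, method names, and version strings are hypothetical, and in practice this state would live in deployment configuration or a model registry rather than in memory.

```python
from dataclasses import dataclass, field


@dataclass
class ModelPin:
    """Tracks the pinned model version for a service, with rollback history."""
    current: str
    history: list[str] = field(default_factory=list)

    def promote(self, new_version: str, regression_passed: bool) -> bool:
        """Move to a new version only if behavioral regression tests passed."""
        if not regression_passed:
            return False
        self.history.append(self.current)
        self.current = new_version
        return True

    def rollback(self) -> str:
        """Return to the most recent previously pinned version."""
        if not self.history:
            raise RuntimeError("no earlier pinned version to roll back to")
        self.current = self.history.pop()
        return self.current


pin = ModelPin(current="model-2025-01")        # hypothetical version identifiers
pin.promote("model-2025-03", regression_passed=True)
pin.rollback()                                 # an incident triggers a rollback
print(pin.current)
```

The design choice worth noting is that promotion is an explicit, gated action: a provider shipping a new version does not change what the service calls until someone, or some pipeline, promotes it.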

Two External Resources Worth Bookmarking

For further reading from authoritative, vendor-neutral sources:

- OWASP GenAI LLM Top 10 (Official) – The OWASP Top 10 for LLM Applications project is the most widely cited public resource on LLM application security. The 2025 update provides comprehensive supply chain risk coverage and community-maintained mitigation guidance.

- NIST AI Risk Management Framework – The NIST AI RMF offers the governance and documentation structure that connects supply chain controls to organizational accountability. The most relevant document for LLM guidance is the Generative AI Profile.

Wrapping Up: Where Things Actually Stand

LLM supply chain security is not a solved problem, but it is no longer a blank space either. Established frameworks and governance guidelines such as OWASP 2025 and NIST, along with tooling from providers such as Snyk, give teams real, practical starting points. Provenance tracking and model SBOMs are becoming a reality rather than a theoretical idea. The most active and fastest-moving area at the moment is plugin security, where both the risk landscape and the tooling are changing rapidly.

What becomes evident both in the research and in the real world: the organizations doing this well are not treating AI security as a separate field to bolt on after the fact. They are adding model provenance review, data pipeline audits, and plugin security controls to the processes they already use to manage the software supply chain, and extending those processes to AI-specific risk.

The gap between teams that practice this and those that do not keeps widening. Given the pace of LLM adoption, that gap will eventually show up in the form of a breach report. Any team can get ahead of it by beginning with the OWASP LLM Top 10, working through Securing Training and Fine-Tuning Data Against Poisoning, and acting early on the cryptographic implications discussed in the Post Quantum Cryptography Migration – Step-by-Step Guide for 2026.

The AI supply chain is now everyone's problem. Fortunately, the means to deal with it are keeping pace.

I'm a technology writer with a passion for AI and digital marketing. I create engaging and useful content that bridges the gap between complex technology concepts and digital technologies. My writing aims to make the learning process easy, spark curiosity, and encourage participation. I continue to research innovation and technology. Let's connect and talk technology!