Table of Contents

The Risk Nobody Talks About Until It’s Too Late

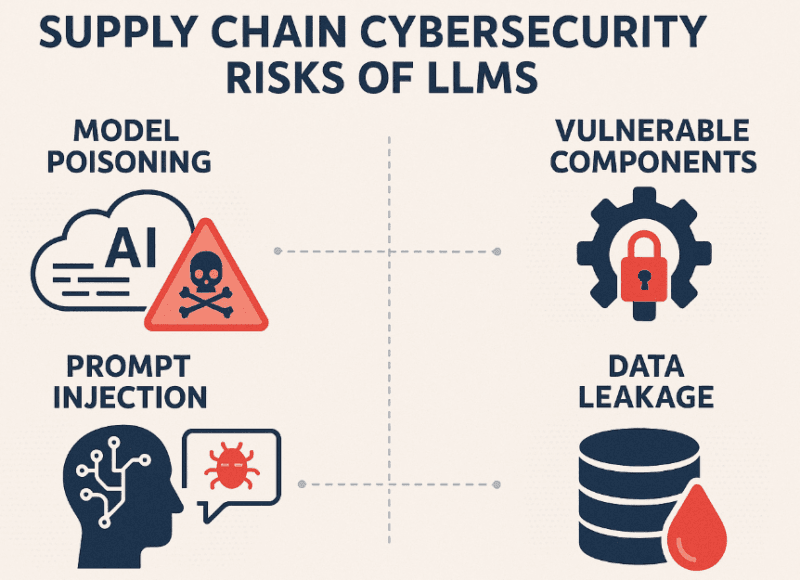

Everybody is fixated on the quick drug delivery and jail escapes. Ok then – those are headlines. Seldom mentioned in the background, the LLM Supply Chain Security issue is becoming one of the worst weaknesses of AI systems in the present day.

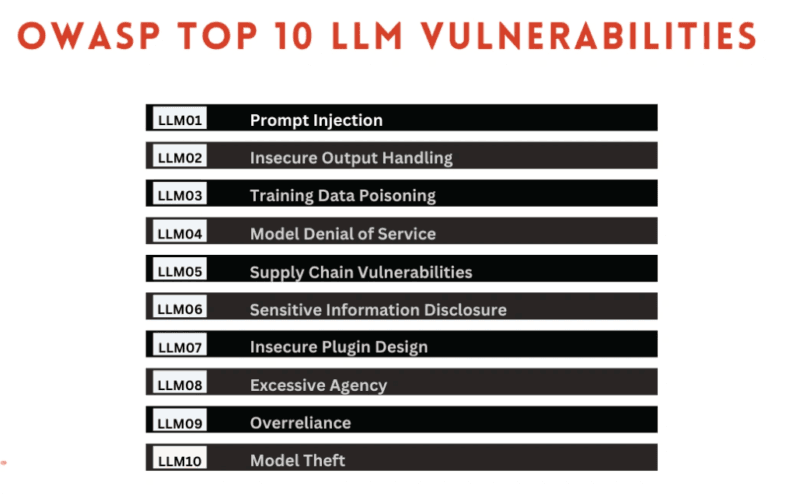

The LLM03 Supply Chain Vulnerabilities is the name of the list of the top 10 risk in OWASP. And truly speaking the name is a misnomer of how harmful it is.

The following is the idea of the fundamental issue: huge language models are no longer isolated. They are attached to plugins, invoked to external tools, they are executed within IDE integrations, and they invoke third-party API during the conversation. All such connection points are potential entry points of attackers. Not logically abstract ones, but real ones, having CVEs attached.

It dissects whatLLM03 means in practice, where the life forces of risk lie in the reality (some risks you may already be working with as of this very moment), and what are some of the patterns of such secure use when executed properly by teams.

What OWASP LLM03 Actually Covers

The Supply Chain Problem Is Bigger Than You Think

By definingLLM03, OWASP was not referring to your weights or training data in a model. The supply chain of a modern application powered by an LLC is:

- Third party pre-trained models.

- Fine-tuning datasets that have been fetched off the publicly available or semi-public sources.

- Tool extensions in the form of Plugins and tools that the model does not do.

- Artificial intelligence coders integrated straight into developer programs.

- Context Feeding context into prompts via system of vector databases and retrieval systems.

- Automatic deployment pipelines of model changes.

All these can be undermined. And due to the speedy nature of LLM applications (in practice, startups run business, developers integrate whatever works), security considerations are not considered prior to creating new features, and, in fact, are rarely considered at all.

According to the OWASP LLM Top 10 project, the notion of the supply chain risk is vaguely defined: any compromised components, insecure integrations as well as lack of proper vetting of tools trusted by your LLM is considered a threat. The latter one is the last one, the aspect of trust, where things become the most tricky.

Insecure Plugins: How LLMs Get Exploited Through Extensions

Plugins Operate With Inherited Trust

One such thing has not been clearly stated: connecting a plugin to an LLM can tend to be associated with the access level of the model. So in case your LLM can access a database, file system or an API, and there is a plugin that can execute operations in that LLM context, then the action of the plug will be capable of doing the same.

I have tried a number of the LLM frameworks with a plug-in, and the difference that is obvious is that there is not much friction between the model and the environment where the plug is executed. An ill-intentioned or bad-written plug-in may:

- Exfiltrate conversation context

- make unaddressed API calls.

- Alter outputs prior to being passed down to the user.

- Vitalize prompted content in the future.

This risk was early revealed through the ChatGPT plug-in ecosystem – through models All ChatGPT Models where the tool can be used. Researchers demonstrated that indirect prompt injections could be conducted through the use of plugins where a content delivered in a particular model on the part of a plugin included instructions on how to redirect the model behavior. The model did not have a native mechanism of making the distinction between data and a plug-in versus a new instruction.

Real Exploits, Real CVEs

This is no hypothetical ground. The appearance of code assistants in IDE provided by GitHub Copilot and other AI code assistants has revealed weaknesses that relate to their ability to parsify local environment context.

In the actual sense, the risk presents itself in the following form:

- An AI extension into a coding assistant called VS Code is installed by a developer.

- The extension brings context to the open open project – files, configs, even.env variables.

- In case of the extension being compromised or when phoning home to a server that is controlled by an attacker, then that local context is leaked.

Apparently, there are instances that VS Code extensions (not necessarily AI-specific) have been spotted to transfer data to third-party endpoints. In line with the increasing number of AI-powered extensions, this attack surface expands too.

As my experience demonstrated, the majority of developers who use AI coding assistants do not look at the network permissions of the extension, requested scopes, or update history and install it. The tool is good, it serves its purpose, that is the only point of vetting that occurs.

Unvetted Tools and Third-Party Model Risk

When the Tool Is the Threat

In addition to the use of plugins, computer applications based on LLM are becoming more and more dependent on external tools via frameworks such as LangChain or AutoGPT-style agents, or custom levels of orchestration. These tools might:

- Execute code

- Browse the web

- Write and read files.

- Make external API calls to the user.

Whenever one of these tools is a part of a third-party package – a PyPI library, an npm node, a third-party provided integration, it becomes a part of the supply chain. And community-contributed does not imply being security-reviewed.

Python and JavaScript ecosystems have a long history of malicious packages pretending to be other legitimate packages (typosquatting). That trend is currently spreading into AI tooling. Anything that identifies itself as a LangChain memory extension or a vector DB connector could have been denoting stuff as the harvesting of an API key or a malicious instruction being installed into the tool call outputs of the LLM.

Pre-trained Models From Unverified Sources

There are hundreds of thousands of models hosted on Hugging Face. Most are legitimate. Some aren’t.

Scholars have shown that the serialize exploits can be present in models deployed in community repositories, e.g. Pickle silences that the python language uses to store model weights. A load command which loads a model based on an unverified source may load an arbitrary code onto the host machine even without having trained or inferred anything yet.

I found that on several developer tutorials, the users are being taught to load models without much evaluation of the source account, the number of downloads, or the community flags. The ease of from pretrained causes an illusion of the security, as it appears like a regular import, rather than like a probable remote code execution exploit.

AI Coding Assistants: The Trusted Insider Risk

Why IDE Integrations Are Uniquely Dangerous

AI coding assistants, Copilot, Cursor, Codeium, Tabnine, etc., are in an advantaged situation. They can have access to:

- Open source files in the entire project.

- Some of these configurations use terminal and shell context.

- Commit messages and history of git.

- Environment variables when not in isolation.

This is because of their high value targets. An insider attacker may also read not only your current file but also whatever secrets you have, what architecture choices you have made, what API endpoints you have.

The AI Influencers of the developer demographic the one of the most vocal engineers and tech teachers with huge followings have begun to bring flags on this. When they do, the discussion is generally a question of centrally there is the core-question: who is actually reading the code that is being sent to these models and what is being stored server-side?

Majority of the assistant providers have their privacy policies stating what is held. Majority of the developers have not read them.

The Update Problem

This is a supply chain peculiarity point that can be readily missed: automatic updates.

When an AI coding assistant has been updated without any notification (this is the default configuration of most extensions in IDEs):

- Request new permissions

- Alter the data to be transmitted to the model provider.

- Add a dependency of known CVE.

In the absence of version transparency and managed updates there developers are releasing blindly into an ever-changing target. The extension which they had vetted in January is not necessarily the one that runs in July.

Secure Patterns That Actually Work

Allow-Lists: Don’t Trust, Verify

Strict allow-lists are one of the best mitigations to use against the risk of the use of plug-ins and tools. We do not ask ourselves what we should block, but what have we given an express sanction to.

Concretely, this is to be:

- Approved plugin registries – the LLM can only interface with plugins on a list of curated internally reviewed list.

- Tool call restrictions The LLM may only call tools which belong to some specific set of authorized functions.

- There are API endpoint allow-lists – outgoing calls by the execution environment of the LLM are confined to authorised domains.

The result is that this strategy minimizes the blast radius in case any of the things are compromised. The potential of damaging is lower than it is with an open network access because a plugin can only call three authorized endpoints and read one source of data.

Sandboxing LLM Tool Execution

Isolation is brought about by the technical layer referred to as sandboxing. In a case where the tools of an LLM are executed in a sandboxed environment:

- The access to file system is restricted to particular directories.

- The accessibility of the network is screened by its allow-list.

- Implementation of processes is managed or put off.

- The access to the memory cannot leak in the host environment.

Such tools as the gVisor, microVMs based on Firecracker, and container-level isolation (with appropriately scoped network policies) can be useful in this case. It is aimed at such that in case of a tool misbehavior – or a plug-in being exploited – the environment the application is running in contains the harm.

Infrastructure-level Threat Containment, which is automated, goes well with this. A system with anomalous tool behavior detection (unusual amount of outbound calls, outbound amount of data, repeated unsuccessful API calls etc.) can then automatically place the system in isolation before even a human is notified to look at the alert. This comes in handy particularly in agentic LLM systems which operate with a small human supervision.

Reviewing Plugin Code Before Deployment

This one seems to be self-evident. It rarely happens.

A practical assessment of the security of the plug in should consist of:

Static analysis:

- What are the external requirements of the plugin?

- Are those requirements pegged to particular versions?

- Have any known CVEs on those dependencies?

Behavioral review:

- And what is the actual functionality of the plugin?

- Does it generate any uninvestigated calls on any network?

- Is there any input /output data that is stored or logged?

Permission audit:

- What is the requested scope of the plugin?

- Is it so much the better requiring all of them to its declared purpose?

- What then happens in case those scopes are annulled?

I have tested a couple of open source LangChain applications and I have realized that dependency pinning is not reliable. Most of them stipulate minimum versions containing >= instead of precise versions, indicating that a transitive dependency attack on the supply chain has the potential to drift unnoticed to the subsequent install.

Version Transparency and Controlled Updates

Since the risk of update highlighted above is real, the teams that work with LLM tooling should define:

- Production LLM All major packages and plugins are pinned.

- Review of Changelog as one of the approval procedures of updates.

- Dependency scanning of each build Dependency scanning Every dependency version Code that depends on another can encounter hidden vulnerabilities before it gets to production.

- Plugus rollouts Stage model or plugus updates, which are staged with behavior checks between phases.

Version transparency is also the knowledge of the version of model you are a calling. Other hosting providers of LLM do not have versioned URLs to update their model endpoints, so it is not necessarily the case that gpt-4 is the same today as it used to be last month.

Such is important to security-sensitive applications, and locking to certain model snapshot versions where they are available is the correct solution.

LLM03 in the Context of a Broader Security Strategy

Plugin and Tooling Security: LLM03 Supply Chain Risk in Practice Fall Within a Bigger Attack Surface.

LLM03 does not work on its own. Other risks in the OWASP LLM Top 10 will be interacting with supply chain vulnerabilities:

- LLM01 (prompt injection) is potentially transmitted through a compromised plugin.

- The tools with permissions that are too high and insecure allow LLM06 (sensitive information disclosure).

- Unvetted models can enhance LLM02 (insecure output handling) when the model has been trained to give malicious outputs.

LLM applications security Security teams should not consider the security of the tools and application as a disjointed and unrelated checkbox in their risk model.

LLM Supply Chain Security finally demands the same amount of discipline as for the traditional software supply chains – only more so due to the behavior of the components (models, plugins, tools) usually being non-deterministic and behavior testing being progressively more difficult than in the traditional code.

What Good Looks Like: A Quick Reference

| Area | Risky Practice | Secure Practice |

|---|---|---|

| Plugin sourcing | Install any community plugin | Approved registry with code review |

| Tool permissions | Broad API and file access | Scoped allow-lists per tool |

| Model loading | Load from any Hugging Face account | Verified sources, hash checking |

| Updates | Auto-update all extensions | Pinned versions, staged rollouts |

| Execution environment | Tools run in app process space | Sandboxed, isolated environments |

| Monitoring | Log errors only | Behavioral anomaly detection |

Wrapping Up

As it is the case with LLM systems, its supply chain risk is not novel, but the problem of the old software supply chain with a fresh countenance. However, due to AI tooling being some of the most rapidly-adopted technology, and the privileged entry that AI code assistants and code loading typically comes with, it is becoming more of an urgent issue than many teams are currently making it.

The many surface solution is to apply to all plugin, tool, and third-party model components some combination of approach that a security-sensitive team will apply to any third-party dependency: explicit vetting, scoped permissions, version management and behavioural monitoring.

The creation of such habits today, in allow-lists, sandboxing, code review, controlled updates, etc. will prove much more helpful as LLM applications become increasingly more complex and, as will inevitably happen, as the attacks against them become more clever.

I’m a technology writer with a passion for AI and digital marketing. I create engaging and useful content that bridges the gap between complex technology concepts and digital technologies. My writing makes the process easy and curious. and encourage participation I continue to research innovation and technology. Let’s connect and talk technology!