Whenever you stream a video, send money online, or ask an AI assistant a question, a server somewhere spends at least a few milliseconds processing that request. That server sits in a data center. Most people never think about these facilities because they are invisible infrastructure, but when one of them fails, everyone feels it.

This article explains what data centers actually are, why their importance keeps growing, how they are used today, and where the technology is heading. Whether you are a curious beginner, a tech enthusiast, or someone who makes infrastructure decisions, you should find something useful here.


What Is the Importance of Data Centers? A Closer Look at the Foundation

A data center is a purpose-built facility that houses servers, storage systems, and networking equipment running 24/7. Beyond the hardware, it contains engineered power supplies, cooling systems, and layers of physical security that keep everything running without interruption.

A common misconception is that the cloud exists somewhere abstract. It doesn't. The cloud is simply a way of consuming computing resources that physically sit in data centers run by companies such as Amazon, Microsoft, and Google, or by specialist colocation providers. Every SaaS product, every AI model response, and every financial transaction passes through one.

What separates a data center from a basic server room is not just size but the engineering redundancy built into it. Proper UPS systems, backup generators, redundant network feeds, and designed-in failover mechanisms mean that services stay up even when something goes wrong. That assurance is what businesses pay for.
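To see why redundancy pays off, here is a minimal sketch of the textbook parallel-availability calculation. It assumes component failures are independent, which is a simplification (real failures such as a shared utility outage are often correlated), and the 99% figure for a single power path is a hypothetical input rather than a measured value.

```python
def parallel_availability(component_availability: float, count: int) -> float:
    """Availability of `count` identical redundant components, where the
    system stays up as long as at least one component is up.

    Assumes failures are independent, which real facilities only approximate.
    """
    if not 0.0 <= component_availability <= 1.0:
        raise ValueError("availability must be between 0 and 1")
    if count < 1:
        raise ValueError("need at least one component")
    # The system is down only if every component is down at the same time.
    all_down = (1.0 - component_availability) ** count
    return 1.0 - all_down


# Hypothetical example: one power path at 99% vs. two independent paths.
single = parallel_availability(0.99, 1)
dual = parallel_availability(0.99, 2)
print(f"single path: {single:.4%}, dual path: {dual:.4%}")
```

Under these assumptions, adding a second independent path moves availability from 99% to 99.99%, which is why duplicated power and network feeds are standard design.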

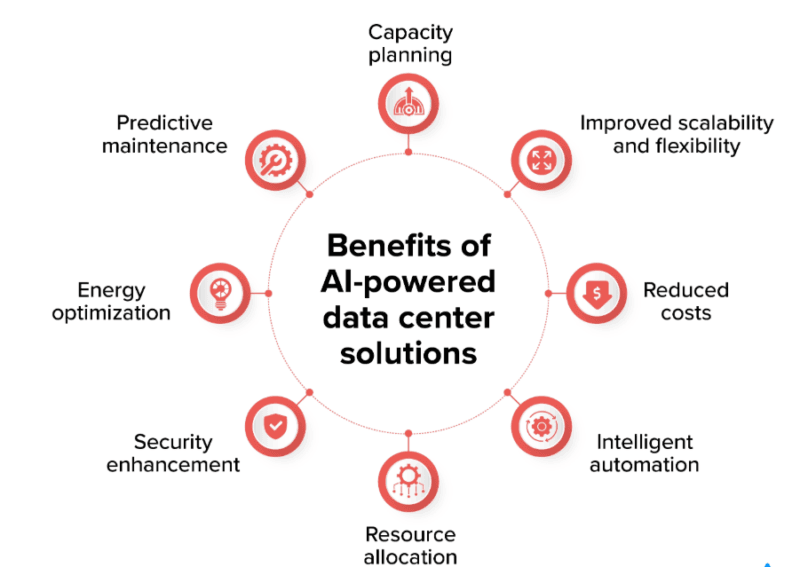

Key Benefits for Businesses and Society

Centralized Data Management and Security

Centralizing infrastructure in a data center makes governance much easier. Instead of managing on-premise servers scattered across departments, IT teams can enforce consistent security measures, access controls, and compliance frameworks in one place. In colocation environments I have worked with, this shift alone significantly cut the time spent preparing for audits.

Disaster recovery and built-in redundancy mitigate hardware failures and ransomware incidents. Because data can be replicated to facilities in geographically separate locations, a flood, fire, or cyberattack at one site does not mean the data is lost forever.
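One simple way to reason about geographic replication is to check whether any dataset has all of its copies in a single region. The sketch below is illustrative only: the site names, region names, and the "at least two regions" rule are hypothetical assumptions, not a standard or any specific vendor's policy.

```python
from collections.abc import Iterable, Mapping


def datasets_at_risk(
    placement: Mapping[str, Iterable[str]],
    site_region: Mapping[str, str],
) -> list[str]:
    """List datasets whose replicas all sit in a single region.

    placement:   dataset name -> sites holding a replica of it
    site_region: site name -> geographic region of that site
    A regional event (flood, fire, grid failure) could take out every
    replica of such a dataset at once.
    """
    at_risk = []
    for dataset, sites in placement.items():
        regions = {site_region[site] for site in sites}
        if len(regions) < 2:
            at_risk.append(dataset)
    return sorted(at_risk)


# Hypothetical sites and regions, for illustration only.
site_region = {"dc-a": "west", "dc-b": "west", "dc-c": "east"}
placement = {
    "billing": ["dc-a", "dc-c"],    # spans two regions
    "analytics": ["dc-a", "dc-b"],  # two sites, but both in "west"
}
print(datasets_at_risk(placement, site_region))
```

Note that "analytics" is flagged even though it has two copies: two sites in the same region do not protect against a regional disaster.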

Performance, Uptime, and Scalability

Many modern data centers are designed to meet 99.999% ("five nines") uptime targets, which allows under 6 minutes of downtime per year. Fast backbone networks, low-latency storage arrays, and modular server architectures let organizations scale horizontally as demand grows, without major changes to their infrastructure.
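The downtime budget behind an availability target is simple arithmetic. This short sketch assumes an average year of 365.25 days; it computes the maximum allowed downtime and is not a model of how any particular facility measures uptime.

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60  # averages in leap years


def annual_downtime_minutes(availability_percent: float) -> float:
    """Maximum downtime per year allowed by an availability target."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * MINUTES_PER_YEAR


for target in (99.9, 99.99, 99.999):
    print(f"{target}% -> {annual_downtime_minutes(target):.2f} min/year")
```

For five nines this works out to about 5.26 minutes per year, consistent with the "under 6 minutes" figure. Each extra nine cuts the budget by a factor of ten, which is why each one is much harder to achieve than the last.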

For AI workloads in particular, performance is non-negotiable. Training large language models requires powerful GPU clusters and is expensive, and real-time inference at scale demands high-bandwidth interconnects and cooling systems that typical office infrastructure cannot accommodate.

Cost Efficiency Through Shared Infrastructure

Building and maintaining a private data center is expensive: land, power infrastructure, air conditioning, physical security, and specialized staff all add up. Colocation and cloud services give companies access to enterprise-grade infrastructure at a fraction of the cost, through economies of scale that a small operator cannot achieve.

PwC estimated that between 2017 and 2021 the data center industry contributed approximately $2.1 trillion to the U.S. economy, not only through IT cost savings but also through construction, operations, and local employment.

Main Uses of Data Centers Today

Enterprise IT and Cloud Platforms

Low-latency, always-on architecture supports core business systems such as ERP, CRM, billing, and logistics. Cloud vendors deliver IaaS, PaaS, and SaaS from hyperscale data centers located in regions around the world, letting organizations consume compute on demand without owning any hardware.

AI, Analytics, and High-Performance Computing

This is where the most dramatic infrastructure investment is happening right now. Training AI models and running inference at scale require GPU-dense server designs, very high-bandwidth storage, and cooling infrastructure capable of supporting rack densities that were almost unheard of five years ago.

My review of data center capacity planning documentation showed that AI workloads can push rack power requirements beyond the historical 5-10 kW range to 40-100 kW or more.
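A quick way to feel the impact of that jump is to ask how many racks a fixed power budget can feed. The 2 MW IT budget below is a hypothetical figure, and the sketch deliberately ignores cooling overhead and electrical derating, which real capacity planning must include.

```python
import math


def racks_supported(it_capacity_kw: float, kw_per_rack: float) -> int:
    """Number of full racks a given IT power budget can feed at a given density.

    Simplified: ignores cooling overhead, redundancy margins, and derating.
    """
    if kw_per_rack <= 0:
        raise ValueError("kw_per_rack must be positive")
    return math.floor(it_capacity_kw / kw_per_rack)


# Hypothetical 2 MW (2,000 kW) IT power budget.
budget_kw = 2000
for density in (5, 10, 40, 100):
    print(f"{density:>3} kW/rack -> {racks_supported(budget_kw, density)} racks")
```

The same budget that feeds 200-400 legacy racks feeds only 20-50 AI racks, so power delivery and heat removal per rack, rather than floor space, tend to become the limiting factors.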

Interestingly, tracking the physical location of infrastructure, such as the geographic positioning of edge data centers, overlaps in practice with geotagging, which is used in infrastructure mapping to locate assets around the world.

Content Delivery, Streaming, and IoT

Content delivery networks (CDNs) use regional data center nodes to deliver video, web content, and game updates with minimal latency. Meanwhile, IoT applications such as smart factories and connected vehicles generate continuous streams of data that are processed in edge and micro data centers and then synchronized with central facilities.
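The core idea behind serving from the nearest node can be sketched as "pick the lowest-latency node that fits the latency budget, otherwise fall back to a central region." Real CDNs use far more sophisticated request routing (DNS, anycast, cache and load state), so treat this as an illustration only; the node names and latency numbers are hypothetical.

```python
from typing import Optional


def pick_node(latencies_ms: dict[str, float], budget_ms: float) -> Optional[str]:
    """Return the lowest-latency node within the latency budget, or None.

    latencies_ms: node name -> measured round-trip time from the user, in ms
    """
    candidates = {node: rtt for node, rtt in latencies_ms.items() if rtt <= budget_ms}
    if not candidates:
        return None  # nothing close enough; caller falls back to a central region
    return min(candidates, key=candidates.get)


# Hypothetical round-trip times from one user to three nodes.
latencies = {"edge-mumbai": 8.0, "regional-singapore": 45.0, "central-frankfurt": 130.0}
print(pick_node(latencies, budget_ms=20))
print(pick_node(latencies, budget_ms=5))
```

With a 20 ms budget the edge node wins; with a 5 ms budget nothing qualifies, which is the case where an operator would either add capacity closer to users or relax the requirement.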

What’s Already Here vs. What’s Just Beginning

Mature Capabilities – The Baseline Today

Containerization and virtualization are standard practice. Multi-tenant colocation, N+1 power redundancy, hot/cold aisle layouts for cooling efficiency, and DCIM (Data Center Infrastructure Management) monitoring are no longer differentiators; they are table stakes that any serious operator already has in place.
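N+1 means installing at least one more unit than the N units needed to carry the load, so the loss of any single unit is survivable. A minimal check is sketched below, assuming identical units (for example UPS modules or cooling units); the 900 kW load and 500 kW module size are hypothetical.

```python
import math


def meets_n_plus_one(load_kw: float, unit_capacity_kw: float, units_installed: int) -> bool:
    """Check whether the load can still be carried after losing any one unit.

    N is the number of identical units needed to carry the load; N+1 means
    at least one extra unit beyond that is installed.
    """
    if unit_capacity_kw <= 0:
        raise ValueError("unit capacity must be positive")
    units_needed = math.ceil(load_kw / unit_capacity_kw)  # this is N
    return units_installed >= units_needed + 1


# Hypothetical: 900 kW of load on 500 kW UPS modules needs N = 2.
print(meets_n_plus_one(900, 500, 3))  # two modules carry the load, one spare
print(meets_n_plus_one(900, 500, 2))  # no spare: losing a module drops load
```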

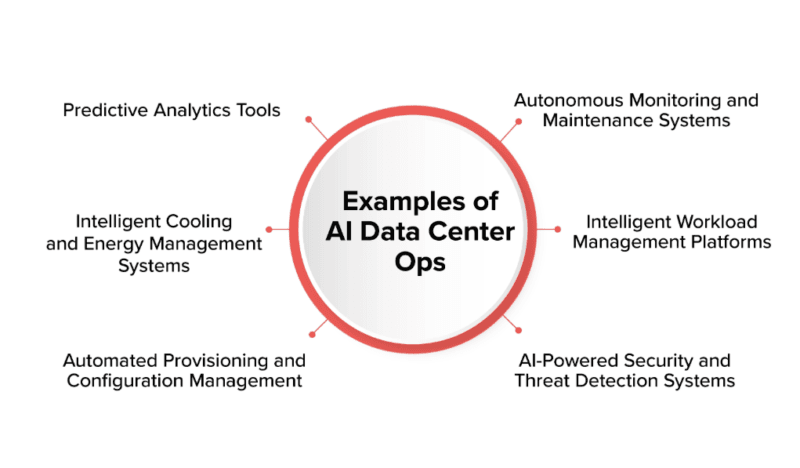

Evolving Fast – What’s Scaling Right Now

- AI-driven operations: Using AI to predict equipment failures before they occur and to adjust cooling automatically based on real-time thermal data is now a reality. Case studies report 15-40% cooling energy savings from ML-based management.

- Edge and micro data centers: Smaller facilities bring services closer to end users to support 5G, autonomous systems, and real-time industrial applications. The global edge market is expected to grow substantially over the coming decade.

- Liquid and hybrid cooling: For GPU-dense AI racks, where conventional air cooling may not be sufficient, operators are adopting direct-to-chip liquid cooling and full immersion cooling. My initial review of vendor specifications indicated that immersion cooling can achieve a PUE (Power Usage Effectiveness) close to 1.0, the theoretical ideal.

- Prefabricated build-and-assemble construction: Data center modules built off-site and assembled on-site shorten deployment times and allow capacity to be added incrementally, which is especially attractive in markets such as India, where demand is growing rapidly.
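PUE, mentioned in the cooling item above, is defined as total facility energy divided by the energy delivered to IT equipment. The sketch below computes it from two hypothetical annual energy figures; it only illustrates the ratio and is not a substitute for a formal measurement methodology.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT energy.

    1.0 is the theoretical ideal (every kWh goes to IT equipment); higher
    values mean more overhead for cooling, power conversion, lighting, etc.
    """
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical annual figures for two facilities with the same IT load.
print(pue(1_500_000, 1_000_000))  # 0.5 kWh of overhead per IT kWh
print(pue(1_050_000, 1_000_000))  # close to the 1.0 ideal
```

Going from 1.5 to 1.05 removes 90% of the non-IT overhead for the same IT load, which is why cooling technology has such a large effect on a facility's total energy use.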

Frontier Directions – What’s Just Beginning

- Self-optimizing data centers: AI control loops, rather than human operators, autonomously balance workload placement, cooling, and power sourcing against SLA requirements, cost targets, and sustainability metrics.

- AI-native campus design: Facilities designed from the ground up to run GPU clusters, with ultra-high-density power distribution and physical security tailored to AI applications.

- Deeper grid integration: Data centers are increasingly connecting with smart grids, renewable generation facilities, and battery storage so they can participate in demand response programs and help stabilize the grid.

- Non-conventional sites: Operators are also exploring undersea deployments, rural sites near renewable energy sources, and facilities paired with waste-heat reuse programs, aiming for both cheaper power and a smaller carbon footprint.
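To make the self-optimizing idea concrete, here is a deliberately simple placement heuristic: filter sites by an SLA latency limit, then pick the one with the best weighted mix of power cost and grid carbon intensity. This is a hand-written scoring rule standing in for the AI control loops described above, not an AI system; the `Site` fields, weights, and all numbers are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Site:
    name: str
    latency_ms: float        # expected latency to the workload's users
    cost_per_kwh: float      # electricity price, in any consistent currency
    carbon_g_per_kwh: float  # grid carbon intensity


def choose_site(sites, max_latency_ms, cost_weight=0.5, carbon_weight=0.5) -> Optional[Site]:
    """Pick the SLA-compliant site with the lowest weighted cost/carbon score."""
    eligible = [s for s in sites if s.latency_ms <= max_latency_ms]
    if not eligible:
        return None
    # Normalize each metric by its maximum among eligible sites so both
    # terms sit on comparable 0-1 scales before weighting (guard against 0).
    max_cost = max(s.cost_per_kwh for s in eligible) or 1.0
    max_carbon = max(s.carbon_g_per_kwh for s in eligible) or 1.0

    def score(s: Site) -> float:
        return (cost_weight * s.cost_per_kwh / max_cost
                + carbon_weight * s.carbon_g_per_kwh / max_carbon)

    return min(eligible, key=score)


# Hypothetical candidate sites.
sites = [
    Site("A", latency_ms=20, cost_per_kwh=0.10, carbon_g_per_kwh=600),
    Site("B", latency_ms=35, cost_per_kwh=0.12, carbon_g_per_kwh=100),
    Site("C", latency_ms=120, cost_per_kwh=0.05, carbon_g_per_kwh=50),
]
print(choose_site(sites, max_latency_ms=50).name)
print(choose_site(sites, max_latency_ms=50, cost_weight=1.0, carbon_weight=0.0).name)
```

Site C is cheapest and cleanest but fails the 50 ms SLA, so it is never chosen. With equal weights the low-carbon site B wins; weighting cost alone flips the choice to A. A real self-optimizing system would recompute such decisions continuously as prices and grid conditions change.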

Major Challenges – What’s Holding the Industry Back

My Take on Energy and Sustainability Pressure

Data centers currently consume roughly 1-2 percent of the world's electricity. As AI workloads grow faster than other demand, that share could rise sharply unless efficiency gains and renewable energy adoption keep pace. Water consumption for cooling is a related concern, especially in water-stressed regions.

Reading the sustainability reports of major players, I noticed that the tension between growing AI compute and ESG commitments is increasingly hard to brush aside. Investors, regulators, and enterprise customers are putting real pressure on operators to show tangible progress toward their energy and water intensity targets.

Power Density, Grid Constraints, and Physical Bottlenecks

GPU-dense AI racks may need 40-100 kW or more, well beyond what most existing facilities can support. Retrofitting older data centers for these densities is technically complex and expensive. In some markets, grid capacity constraints and slow permitting have created real deployment backlogs.

Security, Compliance, and Data Sovereignty

Because data centers hold sensitive and critical information, they are prime targets for both physical attacks and cyberattacks. Multi-layered security, including zero-trust network access, physical access control, encryption at rest and in transit, and continuous monitoring, is essential rather than optional.

Data protection and localization laws such as India's DPDPA (Digital Personal Data Protection Act) and similar regulations worldwide influence where data centers are built and how data flows across borders. For people whose careers involve running compliance-sensitive infrastructure, this is a field with real career potential, particularly where it connects to broader technology certifications. For example, professionals working at the intersection of secure software development and infrastructure may find that a certification such as CSSLP (Certified Secure Software Lifecycle Professional) is useful in roles that sit on the boundary between security policy and data center governance.

The Skills Gap – A Real Operational Risk

According to industry surveys, most data center operators still struggle to recruit critical on-site staff: electricians, HVAC specialists, network engineers, cybersecurity experts, and, most recently, AI infrastructure specialists who know how to deploy high-density power infrastructure and liquid cooling systems. This is not a future problem; it is a current constraint on growth.

Cost and Community Acceptance

Rising land, equipment, and construction costs are increasing the total cost of ownership. Communities and local regulators are paying closer attention to noise, power consumption, and environmental footprint, sometimes delaying or blocking projects. ESG policies and careful site selection are no longer optional PR exercises; they are business necessities.

Frequently Asked Questions

Q: Is a data center the same as a server room?

No. A server room is usually a small, informal space inside an office building. A data center is engineered with dedicated power, cooling, physical security, and redundancy to sustain large, mission-critical workloads at enterprise or hyperscale level.

Q: What impact do data centers have on the environment?

They are among the most power-intensive building types, driving high electricity consumption, carbon emissions, and water use for cooling. The problem is intensifying as AI workloads demand more power, which makes renewable energy procurement and efficient cooling increasingly important.

Q: Why are GPUs and AI workloads so demanding for data centers?

AI training and inference require massive compute, high-speed networking, and fast storage, all packed densely enough to generate extreme heat and require specialized power delivery. Supporting these workloads imposes cooling (usually liquid) and facility design requirements that existing infrastructure was often not built for.

Q: Are edge data centers replacing hyperscale facilities?

No, they are complementary. Latency-sensitive applications (such as real-time industrial control or AR apps) run at the edge, while heavy compute, storage, and other cloud services run in large regional or hyperscale data centers. The two models are evolving side by side.

Q: What is the biggest operational risk for data centers today?

Power and cooling constraints, together with skills shortages, are consistently ranked as the top operational risks, especially in AI-heavy facilities whose demands exceed what current staff and infrastructure can handle without significant investment.

Q: How can I learn data center basics for free?

Start with industry blogs and vendor explainers from Flexential, Enconnex, and Data Center Frontier. Then move on to the cooling and sustainability deep dives from SubZero Engineering and DataCenter-Asia. For regional context, particularly India, look for articles on power challenges, regulatory environments, and labor issues in emerging markets.

Final Thoughts

Data centers are not just IT infrastructure; they are economic and social infrastructure too. Industries from healthcare to finance to entertainment depend on the reliability, performance, and security that modern facilities provide, and growing AI workloads will keep increasing the pressure on them.

For professionals, the direction is clear: roles at the intersection of AI infrastructure, energy management, security, and compliance are scarce and valuable. For organizations, the decisions made today about facility design, cooling strategy, and sustainability positioning will determine competitiveness over the coming decade.

The gap between what already exists and what is just beginning is closing faster than many people realize. Understanding that gap, and preparing for it, is what separates reactive operators from those truly ready to get ahead.

I'm a technology writer with a passion for AI and digital marketing. I create engaging, useful content that makes complex technology concepts accessible. My goal is to make learning easy, spark curiosity, and encourage participation, and I continue to research innovation and technology. Let's connect and talk technology!