
Cloud Computing in 2026 Isn’t a Strategy. It’s Table Stakes

In 2024, most business discussions still treated cloud as a decision to be made. By 2026, that frame has largely fallen apart. More than 94% of enterprises already use cloud services. Roughly half of all workloads now run in a cloud environment. Organizations are devoting almost 45 percent of IT budgets to cloud infrastructure.

The question businesses are grappling with now is not whether to use cloud, but how to modernize faster, integrate AI in the right way, and govern it all well enough to avoid expensive mistakes.

This report breaks down the top benefits of cloud computing for business in 2026, with a particular focus on what is already mature, what is still taking shape, and where the friction actually lies. It also covers how to use these trends in practice, both for businesses and for people building cloud careers themselves.

What Cloud Actually Delivers for Businesses Right Now

Cost control that works – when you run it properly

The shift from capital expenditure to operational expenditure is real and important. Businesses no longer need to buy servers sized for a peak that is reached only a few times a year; they pay only for what they consume. Auto-scaling handles traffic spikes. Right-sizing and usage-based pricing eliminate over-provisioning.
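To make the over-provisioning point concrete, here is a small arithmetic sketch. The demand profile and the price per server-hour are made-up numbers, and the sketch assumes the same hourly rate applies in both cases, which real pricing will not match exactly.

```python
def peak_provisioned_cost(hourly_demand, cents_per_server_hour):
    """Cost when capacity is fixed at the busiest hour for the whole period."""
    peak = max(hourly_demand)
    return peak * len(hourly_demand) * cents_per_server_hour


def usage_based_cost(hourly_demand, cents_per_server_hour):
    """Cost when you pay only for the servers needed in each hour."""
    return sum(hourly_demand) * cents_per_server_hour


# Hypothetical day: 4 servers for 22 hours, 20 servers during a 2-hour spike.
demand = [4] * 22 + [20] * 2
RATE_CENTS = 10  # assumed price per server-hour, for illustration only

fixed = peak_provisioned_cost(demand, RATE_CENTS)
elastic = usage_based_cost(demand, RATE_CENTS)
print(f"peak-provisioned: {fixed} cents, usage-based: {elastic} cents")
```

The gap between the two numbers grows with how spiky the demand is; a flat workload sees little difference.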

The catch is governance. Cloud bills do not manage themselves. AI and ML workloads (GPU clusters, high-performance storage) get expensive quickly unless they are closely monitored. The companies that are truly winning the cost battle run FinOps as a discipline: mandatory resource tagging, budget alerts, scheduled shutdowns of non-production environments, and routine cost reviews by team. It is a matter of process, not technology.
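Two of the practices above, mandatory tagging and budget alerts, can be sketched in a few lines. This is only an illustration: the required tag keys, the resource records, and the alert thresholds are assumptions, and a real setup would read them from a provider's billing and inventory exports.

```python
REQUIRED_TAGS = {"team", "environment", "cost-center"}  # assumed tagging policy


def missing_tags(resource, required=REQUIRED_TAGS):
    """Return the required tag keys that a resource lacks, sorted."""
    return sorted(required - set(resource.get("tags", {})))


def crossed_thresholds(spend, budget, thresholds=(0.5, 0.8, 1.0)):
    """Return the budget fractions that current spend has already reached."""
    return [t for t in thresholds if spend >= t * budget]


# Hypothetical inventory records.
resources = [
    {"id": "vm-1", "tags": {"team": "search", "environment": "prod", "cost-center": "42"}},
    {"id": "gpu-7", "tags": {"team": "ml"}},
]

untagged = {r["id"]: missing_tags(r) for r in resources if missing_tags(r)}
alerts = crossed_thresholds(spend=850, budget=1000)
```

Alerting at 50 and 80 percent, rather than only at 100, is what gives a team time to react before the budget is gone.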

Elastic scale on demand – including for AI workloads

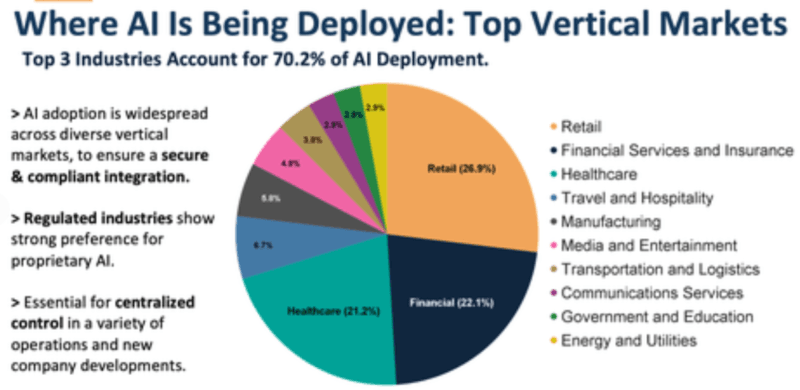

Elasticity has long been a selling point of the cloud. What is new in 2026 is that this elasticity now applies to AI training and inference. Spinning up a GPU cluster for a single model training run and releasing it afterward is the kind of flexible workload that has fundamentally changed the economics of AI development.

Retail businesses handling peak season, healthcare services absorbing appointment volume, fintech applications absorbing viral traffic – these are the time-tested use cases for elasticity, and they are all mature. The new frontier is AI-native scale: burst compute for LLMs, high-bandwidth storage for vector databases, and inference infrastructure capable of making real-time decisions at the edge.
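Under the hood, elastic scaling often comes down to a proportional rule: scale the replica count by the ratio of the observed metric to its target. The sketch below has the same shape as the rule documented for the Kubernetes Horizontal Pod Autoscaler, but the function name, the bounds, and the sample numbers are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=50):
    """Proportional scaling: resize so the per-replica metric moves back
    toward its target, clamped to [min_replicas, max_replicas]."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Traffic spike: 4 replicas at 90% average CPU, target 60%.
scale_out = desired_replicas(4, 90, 60)
# Quiet period: 10 replicas at 15% average CPU, target 60%.
scale_in = desired_replicas(10, 15, 60)
```

The upper bound matters as much as the rule itself: without it, a runaway metric can scale the bill along with the workload.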

Faster shipping is the real competitive advantage

Dev/test environments that previously took weeks to deploy can now be created within minutes. CI/CD pipelines, serverless functions, managed databases – all available on demand. Teams that adopt genuinely cloud-native patterns, rather than merely migrating their existing stack to virtual machines, shorten their release cycles.

Hyperscalers are also bundling AI services directly into their platforms: MLOps tooling, vector databases, LLM APIs. Product teams can add sophisticated AI features without building the infrastructure themselves. That changes what a small team can actually ship.

Personally, I have used serverless architecture on AWS for side projects, and the difference in setup time between serverless and EC2 instances is hard to overstate. What used to be a weekend of setup now takes around an hour.

Security baselines – strong at the provider level, harder across systems

Most small organizations cannot afford to spend on security the way large cloud providers do. Standard services include encryption, IAM, logging, and a wide range of compliance certifications (ISO, SOC, PCI, HIPAA). This is a real, material upgrade for smaller businesses that could not have built that infrastructure on-prem.

Consistency is where it gets harder, particularly across multi-cloud and hybrid environments. Security and compliance are the most commonly cited barriers to cloud adoption: approximately 61 percent of organizations name them as their main obstacles. This is not because providers are incompetent, but because coordinating a security posture across different clouds, each with its own IAM model and network configuration, is genuinely difficult.

Poor identity management and misconfigurations on the customer side, rather than provider-level failures, are the largest contributors to cloud incidents. Security is a shared responsibility. Providers manage the infrastructure; businesses are responsible for how they configure and use it.
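The customer-side risks named above (misconfiguration and weak identity practices) lend themselves to automated checks. The following is a minimal sketch over a hypothetical account snapshot; the field names (`public`, `encrypted`, `mfa`, `actions`) are invented for illustration and do not correspond to any provider's API.

```python
def audit(config):
    """Return human-readable findings for one account snapshot."""
    findings = []
    for bucket in config.get("buckets", []):
        if bucket.get("public", False):
            findings.append(f"bucket {bucket['name']} is publicly readable")
        if not bucket.get("encrypted", False):
            findings.append(f"bucket {bucket['name']} is not encrypted at rest")
    for user in config.get("users", []):
        if not user.get("mfa", False):
            findings.append(f"user {user['name']} has no MFA")
        if "*" in user.get("actions", []):
            findings.append(f"user {user['name']} has wildcard permissions")
    return findings


# Hypothetical snapshot of storage and identity settings.
snapshot = {
    "buckets": [
        {"name": "logs", "public": True, "encrypted": True},
        {"name": "backups", "public": False, "encrypted": False},
    ],
    "users": [
        {"name": "alice", "mfa": True, "actions": ["storage:read"]},
        {"name": "bob", "mfa": False, "actions": ["*"]},
    ],
}

for finding in audit(snapshot):
    print(finding)
```

In a multi-cloud setup, the hard part is not the checks themselves but normalizing each provider's settings into one snapshot format like this.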

The Maturity Map – What’s Settled vs What’s Still Developing

Not all of these cloud benefits are at the same level of maturity. Knowing where things really stand helps set realistic expectations.

Well developed and mature:

- Core compute, storage, and global region availability.

- Container orchestration, serverless, and managed databases.

- Cloud collaboration tools as the default for hybrid and remote teams.

- Simple pay-as-you-go pricing models.

- Provider compliance certifications.

Coming out and not a sheen:

- AI-based cost anomaly detention with Finops.

- Single security posture control in multi-cloud and hybrid.

- Governance scale governance policy.

- The AI agent in the productivity and developer processes.

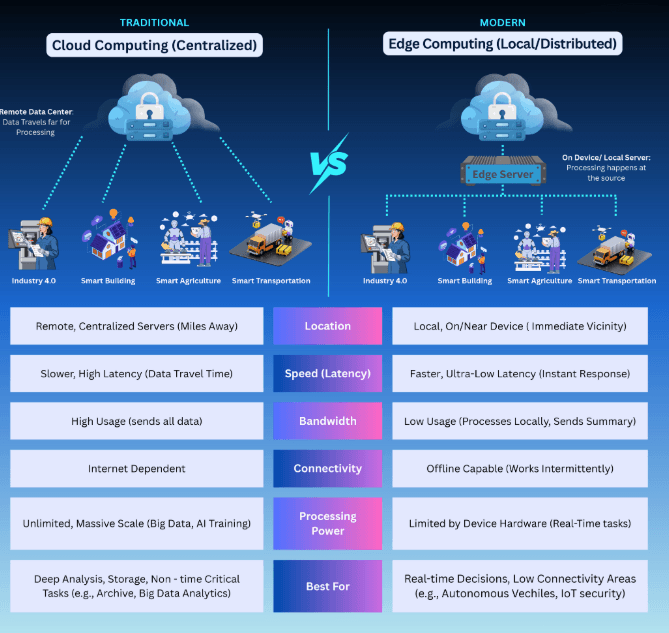

- Edge computing connected to the central AI devices to make real-time decisions.

Early-stage:

- Sovereign cloud as a mainstream requirement (today mostly in EU-regulated sectors).

- AI factories: purpose-built infrastructure designed primarily to support AI workloads.

In my experience, the majority of businesses sit in the intermediate stage: operating multi-cloud systems without the level of governance needed to run them cleanly. It is in that gap between infrastructure adoption and operational discipline that most cloud problems arise.

Where the Honest Challenges Live

The skills gap is bigger than most job postings suggest

About three-fourths (76 percent) of organizations say they lack sufficient cloud security expertise or cloud skills overall, and 95 percent are at least moderately concerned about the overall cybersecurity talent shortage. In practice, the most significant gaps are in architecture, security engineering, DevSecOps, and FinOps.

These shortages slow migrations, add operational risk, and push organizations toward managed services that cost more than building the capability in-house would. This is the biggest leverage point for individuals: cloud skills are genuinely under-supplied relative to demand, and security and AI-related skills are especially scarce.

Multi-cloud complexity doesn’t manage itself

More than three-quarters of companies use two or more cloud providers. Each has its own console, IAM model, network model, and service APIs. Orchestrating governance, security policy, and cost visibility across such a landscape takes deliberate platform engineering, not merely buying the right tools.

Sovereign cloud requirements add further constraints. As more countries legislate on data residency and AI governance, architecture choices become regulatory rather than purely technical. This is turning a niche EU concern into a mainstream consideration for regulated industries worldwide.

If you are working out how cloud and edge combine in distributed deployments, a good starting point before going multi-cloud is Testing Edge Computing for Your Small Business.

AI workloads break the old cost assumptions

AI and ML workloads sharpen the cost management challenge. GPU clusters and high-performance storage are costly at scale. Without tight governance of AI infrastructure, such as resource tagging, scheduled shutdowns, and anomaly detection, costs can spiral at a pace previously unknown in standard compute.
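Cost anomaly detection does not have to start with anything sophisticated. A minimal sketch, assuming daily cost totals are already available as a list: flag any day that exceeds the trailing window's mean by more than `k` standard deviations. The window size, the multiplier, the 5 percent floor on the deviation, and the sample figures are all illustrative assumptions, not recommended values.

```python
from statistics import mean, stdev


def cost_anomalies(daily_costs, window=7, k=3.0):
    """Return indexes of days whose cost exceeds the trailing window's
    mean by more than k standard deviations."""
    if window < 2:
        raise ValueError("window must be at least 2")
    flagged = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Floor sigma at 5% of the mean so a very flat history
        # does not turn ordinary noise into alerts.
        threshold = mu + k * max(sigma, 0.05 * mu)
        if daily_costs[i] > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily spend; day 8 is a forgotten GPU cluster.
costs = [100, 102, 98, 101, 99, 100, 103, 104, 480, 105]
spikes = cost_anomalies(costs)
```

One limitation of this simple approach: a spike stays in the trailing window for several days and inflates the threshold, so a second anomaly shortly after the first may go unnoticed.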

The organizations that handle this best treat governance as a design constraint from the start, not as an optimization step after something has gone wrong. Disciplined FinOps built into the development workflow beats after-the-fact cost reviews.

How to Actually Get Value from These Trends

For businesses

Start with outcomes, not migration plans. State the business outcome you want to achieve (faster feature velocity, lower infrastructure costs, AI features, entry into new markets) and then work backward to the architecture. Vague cloud transformation language yields unfocused cloud strategy.

Go cloud-native where it counts. Velocity and resilience come from redesigning core workloads to use managed databases, serverless, containers, and event-driven patterns. Lift-and-shift migration will save you some cost. The speed comes from cloud-native architecture.

Adopt FinOps from the start. Tag all resources. Set budget alerts that fire before limits are breached. Right-size regularly. Shut down non-production environments outside business hours. Review costs by team, not only in aggregate. This is primarily a management discipline, not a technical one.
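Two items from that list, off-hours shutdowns and per-team cost reviews, are easy to prototype. The sketch below is illustrative only: the business hours, the environment names, and the billing line items are assumptions, and the schedule check uses naive local datetimes rather than handling time zones.

```python
from collections import defaultdict
from datetime import datetime


def should_run(environment, now, start_hour=8, end_hour=19):
    """Production always runs; other environments run only on
    weekdays between start_hour (inclusive) and end_hour (exclusive)."""
    if environment == "prod":
        return True
    is_weekday = now.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and start_hour <= now.hour < end_hour


def cost_by_team(line_items):
    """Sum cost per 'team' tag; untagged spend is grouped as 'untagged'."""
    totals = defaultdict(float)
    for item in line_items:
        team = item.get("tags", {}).get("team", "untagged")
        totals[team] += item["cost"]
    return dict(totals)


# Hypothetical billing line items.
items = [
    {"cost": 120.0, "tags": {"team": "ml"}},
    {"cost": 30.0, "tags": {"team": "web"}},
    {"cost": 50.0, "tags": {"team": "ml"}},
    {"cost": 15.0},
]
report = cost_by_team(items)
```

The `untagged` bucket is worth watching on its own: if it keeps growing, the tagging policy is not being enforced.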

Be deliberate about multi-cloud. Use more than one provider when it delivers a specific resilience, latency, or regulatory benefit, not by default. Hide provider differences behind a platform layer (Kubernetes, service mesh, an internal developer platform) to keep operational complexity under control.
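At the application level, hiding provider differences usually means coding against a small interface and keeping vendor-specific calls in adapters. Here is a minimal sketch of that idea; `ObjectStore`, `InMemoryStore`, and `archive_report` are hypothetical names, and the in-memory class merely stands in for adapters that would wrap real vendor SDKs.

```python
from typing import Protocol


class ObjectStore(Protocol):
    """The only storage surface application code may depend on."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """Stand-in for a provider adapter; a real one would wrap a vendor SDK."""

    def __init__(self, provider: str) -> None:
        self.provider = provider
        self._objects = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def archive_report(store: ObjectStore, name: str, body: str) -> str:
    """Application logic: knows nothing about which provider is behind store."""
    key = f"reports/{name}.txt"
    store.put(key, body.encode("utf-8"))
    return key


# The same application code runs against either "provider".
primary = InMemoryStore("provider-a")
secondary = InMemoryStore("provider-b")
for store in (primary, secondary):
    archive_report(store, "q1", "quarterly numbers")
```

The trade-off is that a narrow interface gives up provider-specific features, which is one more reason to add a second provider only when there is a clear case for it.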

For individuals building cloud careers

Lay the groundwork, then specialize. A foundational certification from one vendor (AWS Cloud Practitioner, Azure Fundamentals, or GCP Digital Leader) gives you the vocabulary to go deeper. You can then pick a specialization in architecture, security, data/ML, or FinOps based on what interests you and what the local job market rewards.

Build a real-world portfolio using free tiers: a serverless API, a CI/CD pipeline, an application that calls a managed LLM API. Working projects are more convincing than courses completed in isolation.

Invest early in AI and security literacy. IAM, zero trust basics, and rudimentary MLOps knowledge are table stakes for cloud roles in 2026, not specializations. As more cloud infrastructure is used to run LLM and agent workloads, understanding them is becoming less an option and more a necessity.

Understanding what cloud and edge each do best deserves a clear comparison. Edge Computing vs Cloud Computing is a helpful resource for deciding which architecture to assign to which workload before a design is chosen.

I have observed that a candidate who knows both security and AI infrastructure is disproportionately valuable compared with one who knows only one of the two. The overlap is smaller than the size of either field would suggest.

Top Benefits of Cloud Computing for Business in 2026 – Free Resources to Learn Them

Want to go deeper at no upfront cost? Start here:

Vendor training and free-tier labs: AWS Training and Certification, Microsoft Learn, and Google Cloud Skills Boost all offer free, regularly updated training on fundamentals, security, AI services, and cost management. Free tiers on all three let you test serverless, managed databases, and LLM APIs at little or no cost.

Market and security reports: The 2025 State of Cloud Security Report by Fortinet and Cybersecurity Insiders covers multi-cloud risk, skills shortages, and top security concerns – a must-read if you are making a business case for investing in cloud security.

Free open courses and communities: Coursera and edX audit tracks offer free courses on cloud computing, security, DevOps, and Kubernetes. Content from the Linux Foundation and CNCF covers cloud-native patterns that are now standard in hybrid and multi-cloud operations.

Real-world troubleshooting help for billing, performance, and security settings is available through Stack Overflow, r/devops, and vendor Q&A communities.

For a more concrete look at the hardware that enables distributed and edge-cloud hybrid models, The Best Edge Computing Devices Ive Seen in 2025 discusses the physical infrastructure side, which is frequently overlooked in cloud discussions.

FAQs

Is cloud still worth it in 2026, or is on-prem making a comeback?

Cloud is a necessity for the majority of enterprises, especially those that develop digital products or AI. On-prem still makes sense for ultra-low-latency needs, some legacy systems, or workloads under strict sovereignty constraints. Even these scenarios are increasingly designed as hybrid architectures rather than being wholly independent of cloud infrastructure.

How do businesses prevent cloud cost overruns?

Enforce resource tagging. Set budget alerts that fire before thresholds are exceeded, not afterwards. Right-size resources periodically. Shut down non-production environments outside business hours. Hold monthly team reviews. Regular cross-team reviews and dashboards are more effective than ad hoc cost optimization projects.

Is cloud actually more secure than on-prem?

Provider infrastructure security is robust – often more robust than most organizations could build themselves. The risk in 2026 lies in misconfigurations, weak identity practices, and poor monitoring on the customer side. Security is a shared responsibility: businesses handle configuration, access, and monitoring; providers maintain the infrastructure itself.

Should a new project start multi-cloud from the beginning?

Usually not. Going multi-cloud from the beginning introduces a lot of operational complexity without obvious short-term value. A more pragmatic approach: begin with a single provider, establish a good platform and security culture, and add other providers to meet specific resilience, cost, or regulatory needs where the case is clear.

How is AI changing cloud strategy?

Cloud growth is now primarily driven by AI workloads. Providers' main offerings are GPU-dense nodes, optimized infrastructure, and vertical AI platforms. Cloud strategy in 2026 must also explicitly account for AI data pipelines, AI inference costs, governance, and sovereignty needs. AI is no longer an add-on but a design consideration from the very beginning.

I’m a technology writer with a passion for AI and digital marketing. I create engaging and useful content that bridges the gap between complex technology concepts and digital technologies. My writing makes the process easy, sparks curiosity, and encourages participation. I continue to research innovation and technology. Let’s connect and talk technology!