Your provider can see your data. Not necessarily because they want – but because they can. Encrypted disks protect data at rest. TLS protects data in transit. However, once a model begins handling your request, the data is sitting in memory, and anyone who can read (system) memory can read it – the hypervisor, the OS kernel, the privileged root user.

That’s where confidential computing comes in. And by 2026, it’s ready to go. It’s real and in production on public clouds, on NVIDIA Hopper and Blackwell GPUs, and in reference designs from companies such as Anthropic. If you’re designing or procuring any system that’s part of an AI workflow that works with sensitive data (medical, finance, law, multi-tenant SaaS solutions) then it’s a platform you should know about.

This article explains it without the tech-speak. What TEEs are, how blind inference can be achieved, what’s ready to go, and what isn’t.

Table of Contents

The Memory Problem Nobody Talks About Enough

The security talk often focuses on the perimeter: TLS and encrypted databases and firewalls. And data has to be unencrypted to use it. If you’re running a language model using your medical record or financial transactions, that data is in plaintext, in system memory – and even system memory is pretty easy to get to, as an attacker.

This is known as the data-in-use problem. Disk encryption? Terminated at the service boundary. TLS? Stops at the service border. If the host operating system or hypervisor is compromised – or if the cloud admin is looking over your shoulder, or if a cross-tenant side channel is successful – the sensitive data is compromised.

Hardware isolation is confidential computing’s solution. The answer is to offload the confidential computation to an isolated area where “no one” can see it.

What a TEE Actually Is And What It Isn’t

A Trusted Execution Environment (TEE) is a secure region of a CPU or GPU. Memory is encrypted, code runs, and – crucially – even raw ring-0 code can’t access this memory. The host OS is just a passthrough for blobs.

The most common TEE types are:

Process-level enclaves

The prime example is Intel SGX. It’s a small, encrypted memory region (enclave) that can run one process. Very strong isolation. The downside: it requires special processes, is limited in memory (originally megabytes), and is hard to debug. Not feasible without partitioning for frontiers LLMs.

VM-level TEEs

Intel TDX, AMD SEV-SNP and Arm CCA are different – they protect a guest virtual machine (VM). You can run unmodified apps in a confidential VM. They have a bigger Trusted Computing Base (TCB – code that needs to be trusted), and less fine-grained isolation. This is where things start most commonly for enterprises.

GPU TEEs

Here’s where confidential AI starts to get interesting. NVIDIA’s Hopper and Blackwell GPUs have an area of GPU memory (High Bandwidth Memory, or HBM) that is encrypted and has attestable access controls, called a Compute Protected Region (CPR). LLM weights and LLM activations are in that region.

Activation data is just forwarded by the CPU. My own experience with the Anthropic confidential inference architecture was illustrative of this: the host operating system is not trusted, and only the GPU TEE gets to see weights.

Remote Attestation The Trust Handshake That Makes This Work

A TEE isn’t enough. You also need to be able to attest, or verify, that the code is what you think it is, and the hardware is real. Remote attestation is how.

When the TEE boots up, it takes a hash of its code and configuration. The hash is signed by a root key in the hardware. A remote client (your app, your key manager) can check that signature and decide whether to release keys. If it doesn’t match, no keys.

This is a huge win for blind inference. The flow looks like:

- Client requests attestation from the TEE

- TEE provides a signed report of its measurements

- Client checks validity of report

- Client sets up a secure connection based on attestation

- Encrypted data come in; encrypted data come out

TLS and attestation have to be bound together. A USENIX 2015 paper demonstrated how naive solutions can be attacked using relay attacks – where an imposter transparently passes attestation nonces to a legitimate TEE. It’s not entirely obvious how to properly associate the TLS session with the attestation of the TEE, and it’s part of an ongoing engineering endeavour.

Blind Inference Isn’t Just “Encrypted API Calls”

This is the crux of information that’s usually wrong. Blind inference isn’t encryption of the HTTP request. That’s TLS, and with TLS alone the inference service can see your prompt when it has it in-hand.

Confidential Computing 101: TEEs, Blind Inference and Core Architecture is all about timing.

With normal TLS inference:

- Your data is encrypted in transit

- It’s decrypted and processed by the model provider’s system

- System admins and hypervisors, logs etc can see it

With inference inside a TEE:

- Your data is deciphered in an attested application

- The data is never decrypted for cloud provider stack (OS, hypervisor, storage)

- Even the model weights are encrypted in the vault

If you’d like more of a primer on how confidential computing relates to the larger issue of trust in AI, check out Confidential AI: How Confidential Computing Protects, which goes into threats in LLM deployments.

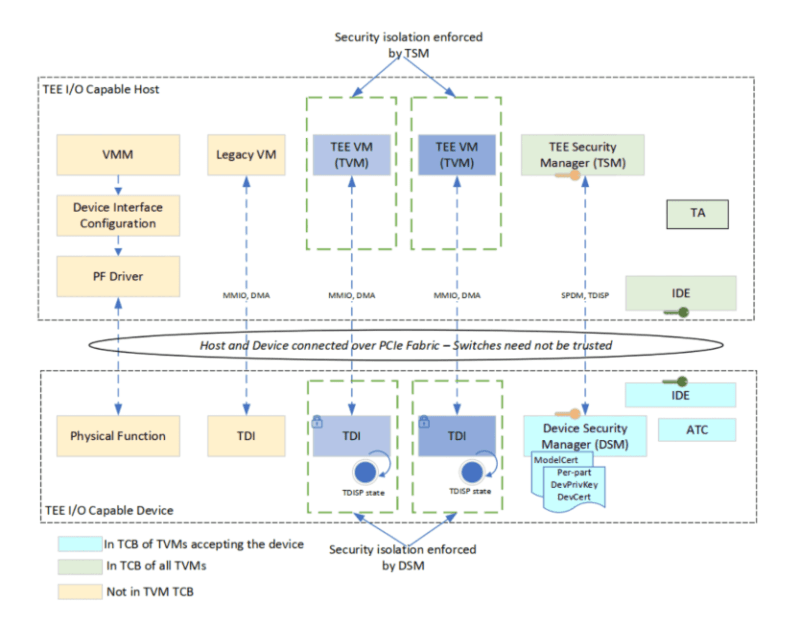

The Architecture in Practice What the Stack Looks Like

Here’s what a typical confidential inference system looks like in production, following best practices from Anthropic’s reference design and cloud stack today:

The enclave contains the model weight, the inference system and cryptographic keys. No one else can access this region.

The host OS is untrusted. It manages input and output, scheduling, and routing – but only of encrypted blobbiness. It can’t see or alter what goes into or comes out of the model.

The client is responsible for: verifying attestation to a TEE image, setting up a secure connection bound to attestation, and providing encrypted inputs. It receives encrypted outputs back.

Key management is outsourced – a hardware security module or cloud key broker – but keys are only provided on a positive attestation verification against policies. If you replace model weights the measurement will change, attestation will fail, and the keys won’t be released.

I realized one of the challenges in practice is this attestation policy management. Determining what constitutes a “good” measurement, managing policies across model version changes, and making this work with existing authentication and access management tools – that’s what takes time.

What’s Actually in Production vs. What’s Still Being Built

What’s ready and shipping today (2016):

- Confidential virtual machines (VMs) on all three clouds (TDX on Azure, SEV-SNP on AWS and GCP) – ready for general purposes

- NVIDIA confidential GPU functions on Hopper, with Blackwell starting to roll out

- Simple confidential inference pipelines for some AI workloads, especially those which fit on single GPUs

- Infrastructure for attesting to cloud providers, usually integrated into their key management tools

What’s still evolving:

- Partitioned inference – performing some inference layers on a CPU TEE and some in a GPU data center, with differential privacy, so they don’t learn as much CMIF-type systems investigate this but network performance is a challenge

- Cross-cloud trust chains – projects like CCxTrust use TEEs and TPMs to establish cross-cloud attestations, which can be used for multi-party computations

- Confidential serverless computing – enclaves with fast start up that can parallelise inference with confidentiality Still not solved: the cold-start problem

- Side-channel resistant – the core isolation is great, but the ability to attack via micro-architectural means is still a threat

The Challenges Nobody Puts in the Marketing Copy

Here’s the truth. Confidential computing helps but it is a hindrance.

Side-channel attacks are real. There have been Spectre-style attacks on SGX, TrustZone and other platforms, despite memory isolation. Recent work from Lou et al. successfully stole sealed model weights and architectures from AI models obfuscated using a TEE. Protocols such as Aegis defend against the attacks, but it’s only an arms race.

Large-scale models can’t fit in the enclave. To run GPT-4-scale models, you need partitioned TEEs on CPU and GPU, increasing the attack surface and attestation difficulty. The more boundaries, the more trust to manage.

Programming experience is poor. For example, only partial visibility into what’s happening in enclaves, limited memory on process-based TEEs and non-trivial build systems mean that moving to confidential computing for complex workloads requires engineering effort. I know teams have been three times shy of this.

Attestation fragility. If the firmware or its attestation logic within the TCB is flawed, then it can be faked. This is where TCB minimisation – reducing the amount of secured code – is important, and difficult in practice given the size of the ML codebases.

For those considering edge deployment, this links directly to issues with edge infrastructure – the same types of issues explored in Why Your Edge Computing Project Will Fail, and good food for thought if you are prompted to design an architecture that relies on trustworthy hardware roots of trust at the edge.

My Take on the Practical Adoption Path

Here’s how a pragmatic team should go about this in reality (not theory):

Start with threat modeling. Refer to the Confidential Computing Consortium’s Technical Analysis whitepaper to determine what threats will be applied to data-in-use for your workload (malicious cloud admins, malicious hypervisors, leakage between tenants, exfiltration of AI model IP). Threats map to different architecture.

Use confidential VMs. Confidential VMs with SEV-SNP or TDX provide you with protection without require upgrades. This is a good starting point for experimenting with the technology without having to construct enclave-centric applications.

Add key management for attestation. Secrets and weights should only be delivered after attestation. Most teams neglect this and it’s where the security is really added.

Play with open interfaces. BlindAI (from Mithril Security) provides a hands-on guide to inferencing with SGX, and a security audit. Watching their example of [encrypted request from user] → [SGX enclave] → [run model] → [encrypted response] makes the architecture more intuitive than whitepapers.

Expect to re-align to PQC. Confidential computing’s attestation and key exchange protocols will require post-quantum cryptography upgrades. It’s worth thinking now about these choices as time becomes more limited to avoid lock-in to vulnerable algorithms.

Teams focused on deploying AI inference (the last step where the AI model is evaluated) closer to the user face different performance versus confidentiality tradeoffs – see AI at the Advertising Edge for thoughts on how these tradeoffs apply to low latency, data sensitive settings that are starting to be addressed by confidential computing.

Best Free Resources The Ones Worth Your Time

There’s a lot of content on confidential computing. The majority of it is gushing about the vendors. Here’s what you should read:

“A Technical Analysis of Confidential Computing” (CCC v1.2 / v1.3) – vendor agnostic, includes threat models, side channels, attestation and TCB evolution. Start here.

Awesome Confidential Computing (GitHub) – talk list, research papers, SDKs and vendor literature. Saves hours of searching.

Anthropic’s Confidential Inference Paper – reference architecture, threat model, and host vs. GPU trust models. One of the best practical publications on this subject.

BlindAI Webinar (Mithril Security) – great demonstration of remote secure inference using SGX (and an audit!). Better than many vendor’s material.

“Machine Learning with Confidential Computing: A Systematization of Knowledge” – academic SoK survey of TEE-based ML systems, confidentiality/integrity guarantees, and issues. Dense but comprehensive.

Looking for an external source of the current state of the hardware, the Confidential Computing Consortium and NVIDIA confidential computing documents are two of the best non-vendor-neutral sources.

FAQs – The Questions That Actually Come Up

Does confidential computing replace zero-trust or IAM?

No. It provides a hardware root of trust, but it can’t function without SSO, IAM and network security. It’s an innovation, not a substitute.

Can GPU TEEs fully protect model IP?

Yes, they greatly increase security – encrypted HBM, attestation, encrypted weights. But side-channels and bugs remain. Watermarking and monitoring are still needed, even with GPU TEEs.

What skills does a team actually need?

Containerization, cloud, secure software engineering, basic applied cryptography (certificates, keys, attestation flows) and hardware isolation fundamentals. It’s not necessary to understand CPU microarchitecture to begin with, but you will need someone at some point.

Is this only for AI?

Not at all. TEEs fit into any type of data-in-use case: key management, multi-tenant analytics, risk management, and so on. AI is now the main use case because sensitive data and use of managed infrastructure is inevitable with LLMs.

How does this interact with post-quantum crypto?

Confidential computing is about data in use. PQC defends confidential data at rest and in transit from future quantum attacks. The attestation and key exchange protocols in the confidential stacks will require PQC-secure algorithms – these need to be migrated now not later.

Data-in-use is the missing piece, we have the hardware to fill it and we have the tools being developed to provide it. The people who build with confidential computing now are laying the foundation for trusted AI infrastructure in 36 months. Knowing the architecture – even if just broadly – must be part of the thinking of anyone building AI infrastructure.

I’m a technology writer with a passion for AI and digital marketing. I create engaging and useful content that bridges the gap between complex technology concepts and digital technologies. My writing makes the process easy and curious. and encourage participation I continue to research innovation and technology. Let’s connect and talk technology!