Most users open GPT Image 2, type something vague like “cool tech background,” get a generic result, roll their eyes, and go back to Canva. That is not a problem with the tool; it is a problem with the workflow.

Used well, GPT Image 2 can replace hours of weekly design work. It is not magic, but once you build the right prompt system around it, generating on-brand visuals becomes an almost mechanical process. This guide breaks down how.

You can start whether you run your own brand, manage content at a startup, or are simply fed up with how inconsistent your feed looks.

What GPT Image 2 Actually Does (That Older Models Couldn’t)

GPT Image 2 is OpenAI's current image generation model, and it is a considerable upgrade over its predecessor, particularly for social media work.

Here is what changes in practice:

Text in images finally works. Older AI image models were notoriously bad at rendering legible text inside the image. GPT Image 2 is good enough that creators are already producing YouTube thumbnails and hero banners entirely from prompts, without overlaying text in Canva afterwards.

Aspect ratios are native, not cropped. You can ask for a 9:16 vertical or a 16:9 banner in your prompt and the composition actually respects that frame. Earlier models tended to produce a square and leave you to fix it in editing. Subject placement, negative space, and visual weight are now built around the ratio you choose.

Structured layouts hold together. Social cards, multi-panel carousel frames, UI-style mockups: GPT Image 2 can handle these without the layout falling apart. That is a big deal for LinkedIn carousels or comparison posts.

Editing is built in. You can use the edits endpoint to change the background of an existing image, replace elements, or otherwise modify it. This enables iterative workflows that were previously impractical.

The biggest change I have seen is in text-in-thumbnail formats. Where I used to need a post-processing step every time, GPT Image 2 often gets close enough on the first or second attempt, which saves me a lot of editing time.

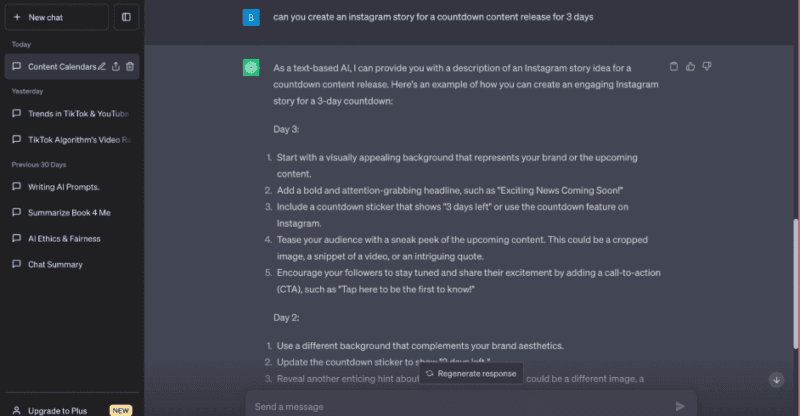

The Prompt System Most Creators Skip And Why It Matters

What separates people who get good results from those who do not is a reusable prompt structure.

One-off prompts produce one-off images. If you want a consistent feed that actually looks like a brand, you need prompt templates, not occasional ideas typed out on the spot.

Start With Content Pillars

Before touching the tool, outline 3-6 content pillars. These are the recurring topics your brand publishes on. For a tech/AI site, that might be:

- How-to guides

- Tool comparisons

- Security tips

- News takes

- Product breakdowns

Every pillar gets its own base prompt. That prompt stays the same. Only the subject changes from post to post.

Anatomy of a Good Base Prompt

A good base prompt consists of four parts:

- Image style – “minimalist flat illustration” / “film photography” / “editorial collage”

- Color scheme and mood – “deep navy and electric cyan, confident and modern”

- Lighting and composition – “soft studio lighting, middle-ground subject, plenty of negative space for text overlay”

- Frame shape by platform – “1:1 square” / “9:16 vertical Pinterest pin” / “16:9 LinkedIn banner”

An example for a wellness brand's morning-routine pillar: “Lifestyle scene, natural soft lighting, earth tones, minimalist composition, cozy and relaxing atmosphere, 4:5 portrait, subject centered with space for text at the top.”

For an iPhone-focused brand's how-to pillar: “Clean isometric illustration, dark background, neon accent detailing, UI-based layout, 16:9 landscape, minimal and crisp.”

With that foundation in place, you simply swap the subject. One week it is a “cybersecurity checklist,” the next it is an “AI tool comparison.” The visual language stays the same, which is what makes the feed look cohesive.
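The idea of a fixed base prompt with a swappable subject can be sketched in a few lines of Python. This is only an illustration: the `BasePrompt` class, its field names, and the `HOW_TO` pillar are hypothetical names, and the wording is adapted from the how-to example above rather than taken from any official template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BasePrompt:
    """The four fixed parts of a pillar's base prompt (hypothetical structure)."""
    style: str
    palette_mood: str
    lighting_composition: str
    frame: str

    def render(self, subject: str) -> str:
        # Only the subject changes from post to post; everything else is fixed.
        parts = [subject, self.style, self.palette_mood,
                 self.lighting_composition, self.frame]
        return ", ".join(parts)


# Hypothetical pillar definition, adapted from the how-to example in the text.
HOW_TO = BasePrompt(
    style="clean isometric illustration",
    palette_mood="dark background with neon accent detailing, minimal and crisp",
    lighting_composition="UI-based layout with negative space for text overlay",
    frame="16:9 landscape",
)

for subject in ("cybersecurity checklist", "AI tool comparison"):
    print(HOW_TO.render(subject))
```

Freezing the dataclass is a small design choice that mirrors the rule in the text: the base prompt should not drift by accident, so changing it requires defining a new pillar on purpose.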

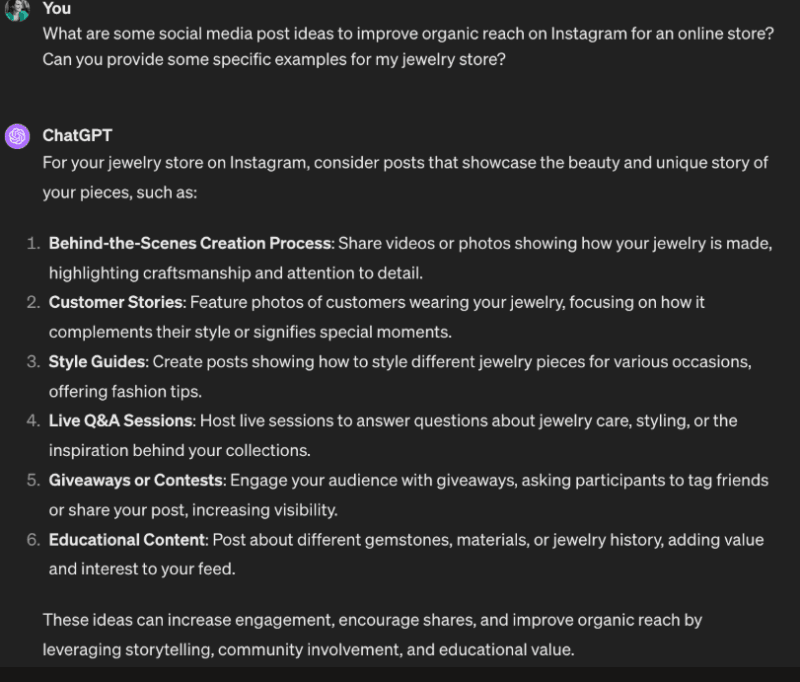

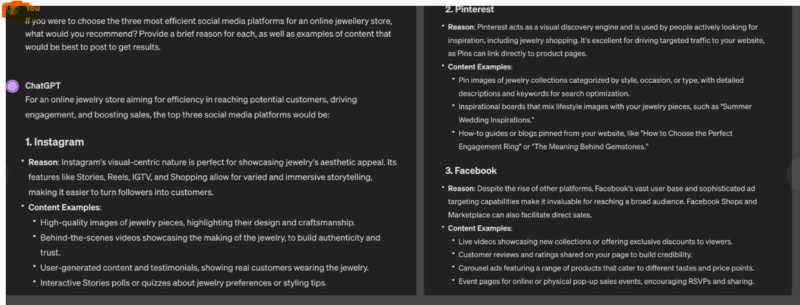

Platform-by-Platform – What Actually Works Where

Not every image works on every platform. My own testing across feeds confirmed what most prompt guides advise: the platform context changes what works.

Instagram (4:5 Portrait or 1:1 Square)

Instagram rewards strong visuals. High saturation, bold focal subjects, and aspirational or emotional imagery do well. Name the mood explicitly in your prompt: “warm and inviting,” “aspirational morning light,” “bold and energetic.” Nowhere else do generic prompts produce such generic results.

LinkedIn (16:9 Horizontal)

The LinkedIn feed is built for professionals scrolling fast. Muted, pale colors work better than bright ones. Use lots of whitespace. Authoritative compositions with a professional feel, sparse backgrounds, and isometric or flat illustrations tend to perform well. The image should come across as credible and honest.

Twitter/X (16:9 or Square)

High contrast. Strong focal element. The image has to get its message across in under a second because users are in a hurry. Prompts such as “bold contrast,” “single dominant visual,” or “attention-grabbing graphic” produce images that actually stop the scroll.

Pinterest (9:16 Vertical)

Pinterest favors vertical content with a clear topic and a substantial text overlay, either generated in the image or added afterwards. Prompt: “9:16 Pinterest pin, focused subject, aspirational, light background, space for text at top and bottom.”

It is also a good format for using AI-generated illustrations as backgrounds, over which you lay real typography in Figma or Canva.

How I Actually Run a Batch Workflow With This

Below is a workflow that has worked for me for producing a week's worth of content in a single sitting:

Pick a content topic, such as “5 cybersecurity mistakes most people make.” Then produce a version of it for each platform:

- Instagram 4:5 promotional photo or lifestyle image.

- LinkedIn 16:9 iconographic banner.

- Twitter/X 16:9 bold, high-contrast card.

- Pinterest 9:16 vertical checklist-like illustration.

Four images from a single idea. With a good prompt template this takes minutes, perhaps 20-30 with review and some light editing.
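As a rough sketch of that fan-out, the snippet below turns one topic into four platform prompts. The `PLATFORM_FRAMES` mapping and the `platform_prompts` helper are hypothetical names, and the framing strings are adapted from the list above, so treat them as a starting point rather than tested prompt wording.

```python
# Platform framing hints adapted from the list above; wording is illustrative.
PLATFORM_FRAMES = {
    "instagram": "4:5 portrait, promotional photo or lifestyle image",
    "linkedin": "16:9 horizontal, iconographic banner",
    "twitter_x": "16:9, bold high-contrast card",
    "pinterest": "9:16 vertical, checklist-style illustration",
}


def platform_prompts(topic: str, brand_style: str) -> dict[str, str]:
    """Return one prompt per platform for a single content topic."""
    return {
        platform: f"{topic}, {brand_style}, {frame}"
        for platform, frame in PLATFORM_FRAMES.items()
    }


prompts = platform_prompts(
    "5 cybersecurity mistakes most people make",
    "deep navy and electric cyan, confident and modern",
)
for platform, prompt in prompts.items():
    print(f"{platform}: {prompt}")
```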

Once you have created 5-10 variations per important post, you can use your scheduler to A/B test them over time. Label each image with a prompt ID so that you can later see which prompt patterns drive the most engagement. That data loop is how you improve: not by guessing, but by knowing which visual styles your audience actually responds to.
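One way to make the prompt ID idea concrete is to derive the ID from the prompt text and then average engagement per ID. The sketch below assumes a single numeric `engagement` value per post; the function names and the sample numbers are made up for illustration.

```python
import hashlib
from collections import defaultdict
from statistics import mean


def prompt_id(prompt: str) -> str:
    """Derive a short, stable ID from the prompt text."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:8]


def mean_engagement(posts: list[dict]) -> dict[str, float]:
    """Average engagement per prompt ID, highest first."""
    by_id = defaultdict(list)
    for post in posts:
        by_id[post["prompt_id"]].append(post["engagement"])
    averages = {pid: mean(values) for pid, values in by_id.items()}
    return dict(sorted(averages.items(), key=lambda kv: kv[1], reverse=True))


# Made-up numbers purely for illustration.
flat = prompt_id("minimalist flat illustration, 16:9")
photo = prompt_id("film photography, 16:9")
posts = [
    {"prompt_id": flat, "engagement": 120},
    {"prompt_id": flat, "engagement": 80},
    {"prompt_id": photo, "engagement": 40},
]
ranking = mean_engagement(posts)
print(ranking)
```

Hashing the prompt means the same template always maps to the same ID, so you do not have to maintain a separate naming scheme. A hand-written label such as a pillar name plus a counter would work just as well.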

For more automation, you can call GPT Image 2 through an intermediary such as Cloudflare's AI platform, which routes prompts to the model and promises automatic scaling, or call OpenAI's API directly and log prompt -> image URL -> performance metrics into your own stack. This is where it crosses the line from creative tool to actual production system.
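A minimal sketch of the prompt -> image URL -> performance log might look like the following. It deliberately does not call any real image API: `fake_generate` is a placeholder you would replace with your own call, and the column names in `FIELDS` are assumptions rather than a required schema.

```python
import csv
import io
from typing import Callable

# Hypothetical column layout; adjust to whatever your analytics stack expects.
FIELDS = ["prompt_id", "prompt", "image_url", "impressions", "clicks"]


def log_generation(writer: csv.DictWriter, prompt_id: str, prompt: str,
                   generate: Callable[[str], str]) -> dict:
    """Generate one image and append a row; metrics are filled in later."""
    row = {
        "prompt_id": prompt_id,
        "prompt": prompt,
        "image_url": generate(prompt),
        "impressions": "",
        "clicks": "",
    }
    writer.writerow(row)
    return row


def fake_generate(prompt: str) -> str:
    # Placeholder: swap in a real API call that returns the stored image URL.
    return f"https://example.com/images/{len(prompt)}.png"


buffer = io.StringIO()  # stands in for a real file or database table
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
row = log_generation(writer, "howto-001", "cybersecurity checklist, 16:9", fake_generate)
print(buffer.getvalue())
```

Passing the generator in as a function keeps the logging code independent of which provider or proxy you end up using.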

What Most People Get Wrong About Consistency

Consistency does not come from using the same tool. It comes from a shared visual language.

The trap many creators fall into is producing a different style every week because they try something new each time. The feed ends up looking like it belongs to several different brands.

The fix is simple: a shared prompt library. Treat it as a design system.

Prompts are your brand guidelines. Every new piece of content slots into an established pillar with a defined visual concept. You can evolve it over time, but the changes should be deliberate, not accidental.

Also consider adding your own brand touchpoints to GPT Image 2's output: your logo, your own typography, shape overlays in your brand colors. Because many creators use the model with more or less the same prompts, the images can converge on a recognizable AI look. This extra design layer is what makes your content distinctive.

The Copyright and Ethics Side Nobody Wants to Read (But Should)

This is the section most guides skip. It matters more than most people think.

Ownership is murky. Many platforms allow commercial use of AI-generated images, but exclusivity is virtually nonexistent. Someone else can produce a very similar image with the same model. Keep that in mind for brand campaigns or any marketing effort with high stakes.

Training data risk. AI image models are trained on huge datasets that may contain copyrighted works. Outputs can accidentally resemble existing brand assets or photos. Best practice: do not use prompts that mention specific brands, characters, or named artworks. For large campaigns, run a reverse image search on the final assets.

Disclosure norms are changing. There is no settled rule yet on when AI-generated images must be disclosed, but the industry is moving in that direction. It is prudent to set internal rules about which content stays human-shot (anything involving real representation, sensitive issues, or community-specific storytelling).
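The advice about avoiding named brands, characters, and artworks can be partly automated with a simple check before a prompt is sent. The blocklist below is a hypothetical stand-in; a real one would come from your own legal or brand review, and a keyword match is only a first filter, not a copyright check.

```python
import re

# Hypothetical blocklist; a real one would come from your own legal review.
BLOCKED_TERMS = ("nike", "mickey mouse", "starry night")


def flagged_terms(prompt: str, blocked: tuple[str, ...] = BLOCKED_TERMS) -> list[str]:
    """Return blocked terms that appear as whole words in the prompt."""
    found = []
    for term in blocked:
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, prompt, flags=re.IGNORECASE):
            found.append(term)
    return found


print(flagged_terms("Sneaker ad in the style of Nike, bold contrast"))
print(flagged_terms("Clean isometric illustration, dark background"))
```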

Beyond Still Images – Turning Outputs Into Motion Content

An angle that does not get discussed enough: GPT Image 2 works well as a starting point for motion content, not just as an end product.

Take your base image into a lightweight animation tool (Animaker, CapCut's AI features, or something similar) and add a zoom, parallax, or text reveal. That one still image becomes a Reel or Story without you having to shoot or edit video separately.

This workflow suits tech and AI content creators in particular, especially when combined with caption generators or animated diagrams. A single image can yield up to three content formats within an hour.

It also pays to keep up with OpenAI's changing feature set. The model keeps improving, and integrations with things like the new ChatGPT Agent features and productivity tools mean that a workflow that cannot be built today may be routine in six months.

Free Resources to Actually Learn This Properly

You do not need an online course or a subscription. Here is where to spend your time:

OpenAI's official image generation docs – This is the reference. Understand the parameters: size, quality, output format, and what the edits endpoint can do. Even if you never touch the API, knowing what is possible will shape your own prompting.

Cloudflare's guide to image generation with GPT Image 2 – Helpful when you want to use image generation or other AI-generated content in your own applications, interfaces, or content pipelines. It explains how to call the model through their AI/ML API layer.

HitPaw and NoteGPT prompt guides – These are not the flashiest resources, but they list 30-50 copy-paste prompts sorted by platform. The value is not the prompts themselves but seeing the patterns in the language. After studying 20-30 examples, you start to internalize what makes a prompt work.

Recraft and similar generators – Use these for low-stakes practice. Although the underlying model may differ from GPT Image 2, the prompt structures carry over, so they are a quick way to experiment and build your overall prompt literacy.

If you are interested in the wider AI productivity ecosystem, it is worth exploring how products such as the ChatGPT and Microsoft Outlook integration are transforming end-to-end content workflows. Image generation is one part of a much larger stack that is becoming more and more interconnected.

My Take After Spending Real Time With This

GPT Image 2 is genuinely useful for social media, but it has to be treated as a system, not a vending machine.

The people who get the best results are not the ones with the most inventive prompts. They are the ones who built systematic prompt libraries, defined their content pillars, and then had the discipline to review and iterate on what actually works.

If you are new: pick one platform, write two or three base prompts for your main content types, and run them for a month. Track which visuals get engagement. Refine the prompts. That feedback loop is what makes you good at this.

And if you run content for a brand or a client: the real power lies in scaling a single idea into four platform variations and A/B testing them. It is not faster than paying a designer for one image. It is faster at producing 40 images a month on a regular schedule, without bottlenecks.

Who should use GPT Image 2 for social media? Creators, independent marketers, startups, and anyone who needs visual output at a scale that conventional design processes cannot support. It is not a substitute for a designer when brand direction is undefined. Once that direction is captured, it is a serious force multiplier.

Quick Setup Note – Security and Account Management

If you are building this into a team workflow or an API-based production system, make sure your OpenAI account is well secured. When you use paid API access, multi-factor authentication is non-negotiable. A quick tutorial, How to Set Up 2FA Authentication on ChatGPT?, has easy-to-follow steps if you have not done so yet.

I’m a technology writer with a passion for AI and digital marketing. I create engaging and useful content that bridges the gap between complex technology concepts and digital technologies. My writing aims to make the process easy, spark curiosity, and encourage participation. I continue to research innovation and technology. Let’s connect and talk technology!