
Something Shifted Around 2024 and It Wasn’t Subtle

Data centers used to be out of sight. You knew they existed somewhere, probably near a highway in a nondescript building, quietly humming along and keeping the internet running. Then AI arrived at scale, and these facilities became the most contested physical assets in technology.

This is not a gradual development. It’s a hard pivot. Regulations governing how data centers are designed, powered, cooled, and metered have changed faster in the past two years than in the previous decade.

If you work in tech, cloud, AI, or infrastructure, or you simply want to understand why data centers matter in a world that increasingly runs on AI, here is where things actually stand right now.

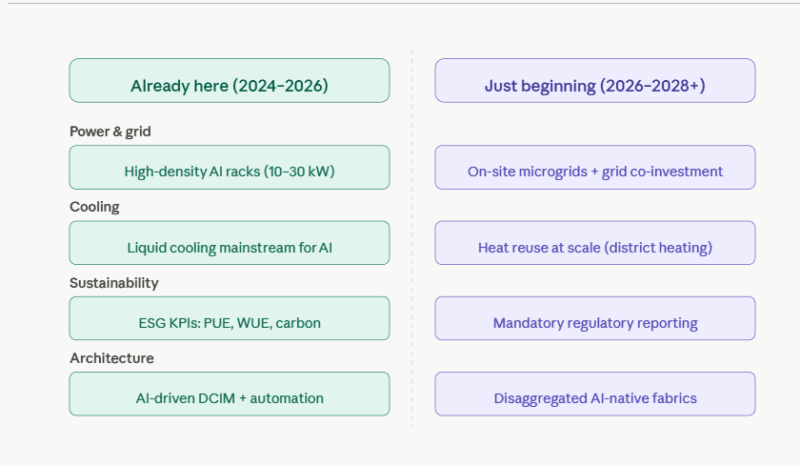

The Future of Data Centers Is Already Underway

The most common mistake in writing about the future of data centers is using the future tense. The reality? Much of what gets called "emerging" is already deployed at scale.

Rack density is the clearest example.

Conventional server racks drew 5-10 kilowatts (kW) per rack. That worked well for virtualized workloads: web servers, databases, general-purpose compute. AI changed the math. With GPU clusters for training and inference, typical rack densities are moving into the 10-30 kW range or higher. I have seen specifications for new AI colocation deployments that treat 50 kW per rack as the baseline, not the high end.

That does not sound dramatic until you consider the thermal consequences. More power per rack means more heat per square foot, and air cooling, which has worked well for decades, simply cannot keep up at these densities.
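To put rough numbers on the thermal point, here is a sketch using a common HVAC rule of thumb for standard air: sensible heat (BTU/hr) ≈ 1.08 × airflow (CFM) × temperature rise (°F), with 1 W ≈ 3.412 BTU/hr. The rule of thumb, the 20 °F inlet-to-outlet rise, and the function name are illustrative assumptions, not figures from any specific facility.

```python
# Rough airflow estimate for air-cooling a single rack.
# Assumption: sensible-heat rule of thumb for standard air,
#   Q [BTU/hr] = 1.08 * CFM * dT [°F], and 1 W = 3.412 BTU/hr.

WATTS_TO_BTU_PER_HR = 3.412
SENSIBLE_HEAT_FACTOR = 1.08  # BTU/hr per CFM per °F for standard air


def required_airflow_cfm(rack_kw: float, delta_t_f: float = 20.0) -> float:
    """Airflow (cubic feet per minute) needed to carry away rack_kw of heat
    with an inlet-to-outlet air temperature rise of delta_t_f (°F)."""
    if delta_t_f <= 0:
        raise ValueError("temperature rise must be positive")
    heat_btu_hr = rack_kw * 1000 * WATTS_TO_BTU_PER_HR
    return heat_btu_hr / (SENSIBLE_HEAT_FACTOR * delta_t_f)


if __name__ == "__main__":
    # Compare the conventional and AI-era densities mentioned in the text.
    for kw in (5, 10, 30, 50):
        print(f"{kw:>3} kW rack -> {required_airflow_cfm(kw):>7,.0f} CFM")
```

Because required airflow scales linearly with heat load, a 50 kW rack at the same temperature rise needs ten times the airflow of a 5 kW rack: roughly 7,900 CFM versus 790 CFM.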

Liquid Cooling Stopped Being Optional

When I first started listening to talks on cooling architecture, liquid cooling was framed as a niche solution for HPC (high-performance computing) clusters. That conversation has changed for good. Direct-to-chip and full-immersion cooling have become the norm for new AI-oriented builds.

The data center liquid cooling market is projected to grow at a compound annual growth rate of more than 20 percent through the end of the decade. That is not a speculative projection: it reflects contracts already being signed and facilities already being retrofitted.

What gets less attention is what this means operationally. Liquid in a data center brings plumbing, leak detection, and maintenance procedures that are entirely new to teams used to air cooling. Engineers who understand both the thermal engineering and its operational implications are hard to find, and highly valued.

The Power Problem Is More Serious Than Most People Realize

Here is the figure that reframes everything: data centers currently consume roughly 1.5 percent of global electricity, about 415 terawatt-hours (TWh) per year. By 2030, that could more than double to over 945 TWh, driven mainly by AI compute demand. That is not a story of gradual, linear growth; demand is compounding.
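The two figures above imply a steep annual growth rate. A minimal sketch of the compound annual growth rate (CAGR) calculation follows, assuming the 415 TWh figure refers to 2024 so that the horizon to 2030 is six years (the text says only "currently"); the function name is illustrative.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years`."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end, and years must be positive")
    return (end / start) ** (1 / years) - 1


if __name__ == "__main__":
    # Figures from the text: ~415 TWh now, >945 TWh by 2030.
    # Assumption: "now" means 2024, giving a six-year horizon.
    start_twh, end_twh, years = 415.0, 945.0, 6
    rate = implied_cagr(start_twh, end_twh, years)
    print(f"Growth multiple: {end_twh / start_twh:.2f}x")
    print(f"Implied CAGR:    {rate:.1%}")
```

With those inputs the multiple is about 2.3x, which works out to roughly 15 percent growth per year, compounding.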

The consequence of this growth is not only environmental. It is also a geographic constraint on where new facilities can be built.

In some markets, grid connection approval for a large data center campus, especially one requiring 100 megawatts or more, now involves a multi-year queue. Substations are not being built as fast as demand requires. In regions where grids are already stressed, AI campuses have become politically contentious projects that draw the attention of regulators and utility commissions.

As a result, industry discussion increasingly focuses on operators becoming what some analysts call grid stakeholders: co-investing in grid infrastructure, installing on-site generation (solar, backup gas, and, in some long-range plans, small modular reactors), and offering utilities flexible load in exchange for faster connection.

Water Is the Overlooked Variable

Everyone is talking about electricity. Far fewer people are talking about water.

Evaporative cooling, which is common and widely deployed, can consume millions of gallons of water per year at a single large facility. In regions already facing water scarcity, this is a real flashpoint. I have noticed that the most forward-thinking operators now design for water usage effectiveness (WUE) from the outset, rather than adding it after regulators began to scrutinize them.
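The Green Grid defines WUE as annual site water usage in liters divided by annual IT equipment energy in kWh. A minimal sketch of that calculation follows; the 20 MW average IT load and the 50-million-gallon annual water figure are hypothetical, not taken from any real facility.

```python
# Water Usage Effectiveness (WUE), per The Green Grid's definition:
# annual site water use (liters) / annual IT equipment energy (kWh).
# The facility figures in the example are hypothetical.

LITERS_PER_US_GALLON = 3.785411784
HOURS_PER_YEAR = 8760


def wue_l_per_kwh(site_water_liters: float, it_energy_kwh: float) -> float:
    """Return WUE in liters of water per kWh of IT energy."""
    if it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return site_water_liters / it_energy_kwh


if __name__ == "__main__":
    # Hypothetical site: 20 MW (20,000 kW) average IT load running all year,
    # consuming 50 million US gallons of water annually.
    it_kwh = 20_000 * HOURS_PER_YEAR
    water_l = 50_000_000 * LITERS_PER_US_GALLON
    print(f"WUE: {wue_l_per_kwh(water_l, it_kwh):.2f} L/kWh")
```

Lower is better; a facility that designs around low-water cooling can push this ratio down, while the same IT load on evaporative cooling in a hot climate pushes it up.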

The shift to liquid cooling also helps on another front. Liquid cooling captures waste heat at higher temperatures, which makes it easier to reuse in district heating systems, agriculture, or nearby industrial processes. In regions where district heating is common, waste heat is turning from a liability into a potential source of revenue.
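Heat reuse can be quantified with two metrics from The Green Grid: Energy Reuse Effectiveness, ERE = (total facility energy − reused energy) / IT energy, and Energy Reuse Factor, ERF = reused energy / total facility energy, which together give ERE = PUE × (1 − ERF). A minimal sketch follows; the function names and the annual figures are hypothetical.

```python
# Energy reuse metrics (The Green Grid):
#   ERE = (total facility energy - reused energy) / IT energy
#   ERF = reused energy / total facility energy
# The annual figures in the example are hypothetical.


def energy_reuse_factor(total_kwh: float, reused_kwh: float) -> float:
    """Fraction of total facility energy that is exported for reuse."""
    if total_kwh <= 0 or not 0 <= reused_kwh <= total_kwh:
        raise ValueError("need total_kwh > 0 and 0 <= reused_kwh <= total_kwh")
    return reused_kwh / total_kwh


def energy_reuse_effectiveness(total_kwh: float, it_kwh: float, reused_kwh: float) -> float:
    """Like PUE, but with reused energy credited back to the facility."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return (total_kwh - reused_kwh) / it_kwh


if __name__ == "__main__":
    total, it, reused = 130e6, 100e6, 26e6  # kWh per year (hypothetical)
    print(f"PUE: {total / it:.2f}")
    print(f"ERF: {energy_reuse_factor(total, reused):.2f}")
    print(f"ERE: {energy_reuse_effectiveness(total, it, reused):.2f}")
```

In this example a facility with a PUE of 1.3 that exports a fifth of its energy as useful heat reaches an ERE of 1.04, which is how heat reuse shows up in the numbers even though PUE itself does not change.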

AI-Native Architecture: Not Just AI Workloads Inside Old Buildings

There is a big difference between a traditional data center that runs AI workloads and one that is AI-native. The latter does not really exist at scale yet, but it is where design thinking is heading.

The concept treats the entire facility as a single computing system: disaggregated CPU, GPU, and memory pools, interconnected by high-speed optical fabrics with link speeds of 1.6 terabits per second. It recalls the warehouse-scale computer that Luiz Barroso and Urs Hölzle described in their early work, now realized at commercial scale specifically for AI infrastructure.

The practical implication is that power distribution, cooling topology, network fabric, and physical layout all have to be co-designed. A 2015-era enterprise colocation facility cannot be meaningfully repurposed for this. That is why new AI campuses are being purpose-built, and why older colocation providers are under pressure to either retrofit or lose AI-driven business.

The Future of Data Centers Depends on a Skill Set That’s Still Being Built

Talent is one of the most underreported issues in this space. Operators consistently report sharp shortages of electricians with data center experience, HVAC specialists who understand high-density liquid cooling, and people for hybrid roles that combine IT infrastructure experience with power engineering and sustainability compliance expertise.

In my experience, the engineers who thrive in this environment are neither pure IT specialists nor pure facilities specialists. They can move easily between discussions of UPS topology, containment strategy, PUE/WUE benchmarking, GPU cluster architecture, and ESG reporting requirements, and they understand how decisions in one area affect the others.

For anyone building toward such a role, the direction of learning matters. Alongside power and cooling fundamentals, a solid AI cybersecurity deployment guide is becoming increasingly relevant because of a new category of attack surface: firmware-level attacks on intelligent PDUs and the PLCs that manage them, and compromised DCIM systems that control physical cooling equipment.

Free Resources Worth Actually Using

The following resources are practical pointers, not a generic list:

Microsoft Learn’s Introduction to Datacenter path offers a vendor-neutral overview of design, components, operations, and sustainability. It is good for building vocabulary and mental models.

Lawrence Berkeley National Lab’s on-demand training focuses on energy efficiency, cooling systems, and electrical infrastructure. It is technical enough to be genuinely useful without requiring a facilities engineering background to get started.

For foundational knowledge of warehouse-scale architecture, The Datacenter as a Computer (3rd edition) is available as a free PDF from CMU, although parts of its networking perspective have since evolved. It reads more like a systems engineering document than a textbook, which is part of why it is useful.

Schneider Electric’s Energy University offers free courses on cooling methods, UPS sizing, power distribution, and reliability planning.

Uptime Institute’s annual Global Data Center Survey (2024 and 2025 editions) offers the clearest view of real operators’ actual conditions: plateaued PUE, staffing pain points, sustainability readiness gaps, and AI integration trends.

For deeper sustainability context, PwC’s Asia-Pacific reports (such as its report on the clean energy gap for data centres) and the EU briefing on energy-hungry data centres both describe the regulatory and grid forces reshaping where data centres are built around the world.

What’s Actually Just Beginning and Why It Matters More Than the Headlines

The conversation about data centers has largely collapsed into two camps: AI is driving massive growth, and that growth is not sustainable. Both are true. Neither is very useful on its own.

What is really worth watching is the third layer: how operators respond to the tension between those two pressures.

Grid-interactive data centers, facilities that can respond dynamically to grid signals, provide demand response services to utilities, and share the intermittency risk of renewable generation, represent a fundamentally new relationship between these buildings and the energy systems they depend on. This is only beginning at scale, but the groundwork and frameworks to support it are already being put in place.
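As a rough illustration of what responding to a grid signal can mean in software, here is a minimal sketch of one possible policy: when a utility requests a load reduction, pause deferrable work (for example, checkpointable training jobs) largest first, and never touch latency-sensitive workloads. The `Workload` type, the greedy policy, and the job names are all hypothetical; real demand response programs involve contracts, notification windows, and measurement and verification that this sketch ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of one workload's power draw."""
    name: str
    power_kw: float
    deferrable: bool  # e.g. a checkpointable training job, not live inference


def plan_curtailment(workloads: list[Workload], requested_kw: float) -> tuple[list[str], float]:
    """Choose deferrable workloads to pause until the requested reduction is met.

    Greedy policy: pause the largest deferrable loads first. Returns the names
    of paused workloads and the total kW shed, which may fall short of the
    request if there is not enough flexible load.
    """
    if requested_kw <= 0:
        return [], 0.0
    paused: list[str] = []
    shed = 0.0
    candidates = sorted(
        (w for w in workloads if w.deferrable), key=lambda w: w.power_kw, reverse=True
    )
    for w in candidates:
        if shed >= requested_kw:
            break
        paused.append(w.name)
        shed += w.power_kw
    return paused, shed


if __name__ == "__main__":
    fleet = [
        Workload("inference-api", 4000, deferrable=False),
        Workload("training-run-a", 6000, deferrable=True),
        Workload("training-run-b", 3000, deferrable=True),
        Workload("batch-eval", 1500, deferrable=True),
    ]
    print(plan_curtailment(fleet, requested_kw=8000))
```

Largest-first keeps the number of interrupted jobs small, at the cost of sometimes overshooting the request; a real controller might instead minimize lost work or respect per-job deadlines.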

Heat reuse follows the same pattern. A few European facilities have been feeding waste heat into district heating systems for years. What has changed is the economics: liquid cooling produces higher-grade heat that is practical to reuse, and with energy costs high, municipalities and industrial partners are willing to pay for it. From my experience reviewing operators’ sustainability reports, heat reuse is becoming less of a PR narrative and more of a revenue line.

Sustainability reporting regulations are tightening in the EU and several Asia-Pacific markets. According to Uptime Institute surveys, fewer than half of operators track all the metrics that upcoming regulations will require.

That readiness gap comes with a hard deadline, and it is creating urgent demand for people who understand both the technical side of measurement and the compliance-reporting side.

The Sustainability Calculation Is More Complex Than PUE

Power Usage Effectiveness (PUE) became the standard efficiency metric for data centers largely because it is simple to calculate and communicate. But on its own, it is increasingly inadequate.

Industry-average PUE has plateaued at around 1.5-1.6 despite years of efficiency work. The easy wins are largely gone. What we are starting to see is a broader set of metrics, such as water usage effectiveness (WUE), carbon usage effectiveness (CUE), renewable energy percentage, and hardware circularity, that together give a more concrete picture of a facility’s environmental footprint.
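Most of these metrics are simple ratios over a reporting period. A minimal sketch, assuming annual energy totals are already available: PUE is total facility energy over IT energy, CUE is carbon emissions over IT energy (in kgCO2e per kWh), and the renewable share is renewable energy over total facility energy. Counting renewable energy as zero-emission and applying a single grid emission factor to the rest are simplifying assumptions; real carbon accounting is more involved. The `AnnualEnergy` type and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AnnualEnergy:
    """Hypothetical annual energy totals for one facility, in kWh."""
    total_facility_kwh: float  # everything the site draws: IT, cooling, losses, lighting
    it_kwh: float              # energy delivered to IT equipment
    renewable_kwh: float       # portion of total_facility_kwh from renewable sources


def pue(e: AnnualEnergy) -> float:
    return e.total_facility_kwh / e.it_kwh


def cue(e: AnnualEnergy, grid_kgco2e_per_kwh: float) -> float:
    """Carbon Usage Effectiveness in kgCO2e per kWh of IT energy.

    Simplifying assumption: renewable energy counts as zero-emission and all
    other energy is charged at a single grid emission factor.
    """
    emissions_kg = (e.total_facility_kwh - e.renewable_kwh) * grid_kgco2e_per_kwh
    return emissions_kg / e.it_kwh


def renewable_share(e: AnnualEnergy) -> float:
    return e.renewable_kwh / e.total_facility_kwh


if __name__ == "__main__":
    site = AnnualEnergy(total_facility_kwh=150e6, it_kwh=100e6, renewable_kwh=60e6)
    print(f"PUE:             {pue(site):.2f}")
    print(f"CUE:             {cue(site, grid_kgco2e_per_kwh=0.4):.2f} kgCO2e/kWh")
    print(f"Renewable share: {renewable_share(site):.0%}")
```

Two facilities with the same PUE can differ sharply on CUE depending on their energy mix, which is exactly the information a single PUE number hides.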

The shift to broader metrics matters for engineers because sustainability requirements are now built into architectural requirements rather than treated as an afterthought. Designing for measurable efficiency, and providing the telemetry infrastructure to support audits and disclosures, is becoming a core deliverable rather than a value-add.
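One detail that matters when building that telemetry: a reporting-period PUE is generally computed from energy totals (sum total facility kWh, sum IT kWh, then divide once), not by averaging instantaneous or per-interval ratios. A minimal sketch, assuming interval meter readings arrive as (total facility kWh, IT kWh) pairs; the function names and the two sample intervals are hypothetical.

```python
from collections.abc import Sequence


def period_pue(readings: Sequence[tuple[float, float]]) -> float:
    """PUE over a reporting period from interval meter readings.

    Each reading is (total_facility_kwh, it_kwh) for one interval. Energies
    are summed first and divided once, so high-load intervals carry
    proportionally more weight than quiet ones.
    """
    total = it = 0.0
    for total_kwh, it_kwh in readings:
        if total_kwh < 0 or it_kwh < 0:
            raise ValueError("meter readings must be non-negative")
        total += total_kwh
        it += it_kwh
    if it == 0:
        raise ValueError("no IT energy recorded")
    return total / it


def naive_mean_pue(readings: Sequence[tuple[float, float]]) -> float:
    """Mean of per-interval ratios, shown only for contrast."""
    ratios = [total_kwh / it_kwh for total_kwh, it_kwh in readings]
    return sum(ratios) / len(ratios)


if __name__ == "__main__":
    # Hypothetical intervals: a cool, lightly loaded night and a hot, busy afternoon.
    readings = [(1100.0, 1000.0), (3600.0, 2400.0)]
    print(f"Energy-weighted PUE:  {period_pue(readings):.3f}")
    print(f"Naive mean of ratios: {naive_mean_pue(readings):.3f}")
```

For these two intervals the energy-weighted PUE is about 1.38, while naively averaging the ratios gives 1.30, understating the facility's actual overhead because the efficient interval consumed far less energy.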

Who Should Pay Attention to All of This

Even if you work higher up the stack, in cloud infrastructure, DevOps, or IT architecture, the physical layer beneath you is changing in ways that can affect capacity planning, latency, cost structures, and vendor relationships.

If you are an infrastructure or facilities engineer looking to stay relevant through the AI compute build-out, the knowledge gap between "can wire a rack" and "can design a 30 kW liquid-cooled AI zone that meets ESG reporting requirements" is wide, but it can be closed through deliberate learning.

And if you work in policy, sustainability, or finance and wonder why data center infrastructure keeps coming up in discussions of energy grids, carbon commitments, and water rights, the technical context above is the reason.

The next wave of data centers is not about any one technology. It is a collision of physical constraints, regulatory pressure, AI-driven demand, and engineering capability, all playing out at once. The people who understand the whole picture, not just one piece of it, will shape what gets built next.

I’m a technology writer with a passion for AI and digital marketing. I create engaging, useful content that makes complex technology concepts accessible. My writing aims to make technical topics easy to follow, spark curiosity, and encourage participation, and I keep researching innovation and technology. Let’s connect and talk technology!