A quiet crisis is unfolding inside most large corporations right now.

Staff are pasting confidential information into public AI tools. People check quotes in free chatbots, and finance teams run client projections through consumer-grade ones. Health workers are prompting LLMs with real patient context, and often they don’t even realize that’s a problem.

IT and security teams know. But as they have found, they can’t simply stop it, because blocking these tools outright just means people work around the block.

This is the big challenge of enterprise AI adoption in 2025. On one side, AI is useful enough that people will find a way to use it; on the other, regulated industries can’t afford the exposure. Something has to bridge that gap.

That “something” is the infrastructure itself.

What the Shift from “Public Experiment” to Confidential AI Actually Means

For most companies, the path to AI adoption began by giving a handful of teams access to a publicly available LLM that generated summaries and drafts of their content. It was low-risk because the data being sent to it was low-sensitivity. And it was good enough to build internal demand.

Then someone from legal, or maybe risk and compliance, asked the seemingly simple question: what happens if this is used for something that really matters?

This is when the cracks start to show.

Public models send your data to third-party infrastructure, even when you go through an API. You can’t verify whether your prompt was retained, who had access to it, or whether it was used for training. In a healthcare system, a bank, or a government department, that is a reality, not just a possibility. It is a regulatory wall, and not one that is easy to break through.

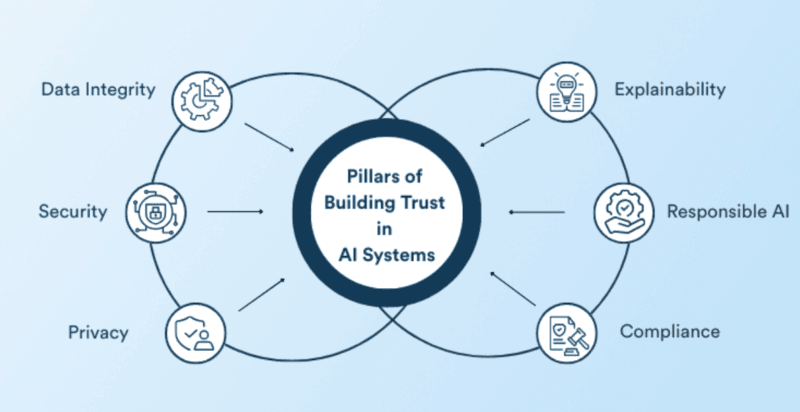

“Confidential infrastructure” is a specific technical answer to this problem. It means running AI workloads inside hardware-enforced trusted execution environments (TEEs): memory regions that even the cloud administrator can’t reach. The data stays encrypted not only at rest and in transit, but also while it is being processed. The environment can then produce a cryptographic proof of what software ran, on what hardware, under what policies: an attestation report.

This is AI infrastructure that a regulator can audit.
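To make the attestation idea more concrete, here is a minimal Python sketch of the verifier side. Everything in it is an assumption made for illustration: the `AttestationReport` fields, the `sign_report` and `verify_report` helpers, and the use of an HMAC with a shared key. Real TEE attestation reports are vendor-specific structures signed with hardware-rooted asymmetric keys, so read this as a picture of the checks involved (signature, freshness, allow-listed measurement), not of any real API.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical, simplified report; real formats are vendor-specific."""
    measurement: str  # hash of the software loaded into the TEE
    hardware: str     # platform identifier
    policy_id: str    # policy the workload was launched under
    nonce: str        # freshness value chosen by the verifier
    signature: str    # hex MAC over the four fields above


def _payload(measurement: str, hardware: str, policy_id: str, nonce: str) -> bytes:
    # Canonical byte encoding of the signed fields.
    return json.dumps([measurement, hardware, policy_id, nonce]).encode("utf-8")


def sign_report(key: bytes, measurement: str, hardware: str,
                policy_id: str, nonce: str) -> AttestationReport:
    # Stand-in for the hardware signing step (assumption: HMAC, shared key).
    sig = hmac.new(key, _payload(measurement, hardware, policy_id, nonce),
                   hashlib.sha256).hexdigest()
    return AttestationReport(measurement, hardware, policy_id, nonce, sig)


def verify_report(key: bytes, report: AttestationReport, expected_nonce: str,
                  allowed_measurements: set, allowed_hardware: set) -> bool:
    expected_sig = hmac.new(
        key,
        _payload(report.measurement, report.hardware, report.policy_id, report.nonce),
        hashlib.sha256,
    ).hexdigest()
    if not hmac.compare_digest(expected_sig, report.signature):
        return False  # report was altered or signed with a different key
    if report.nonce != expected_nonce:
        return False  # stale or replayed report
    return (report.measurement in allowed_measurements
            and report.hardware in allowed_hardware)


if __name__ == "__main__":
    key = b"demo-key-not-for-production"
    good = hashlib.sha256(b"inference-server-v1").hexdigest()
    report = sign_report(key, good, "gpu-tee", "policy-1", "nonce-123")
    print(verify_report(key, report, "nonce-123", {good}, {"gpu-tee"}))
```

The point of the allow-list is that the verifier decides in advance which software and hardware it is willing to trust, and refuses anything else before any sensitive data is sent.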

Why Traditional Security Wasn’t Enough

Most enterprise security frameworks were created in an earlier era and focus on controls such as encryption at rest and in transit. All of these actually mean something. However, none of them can protect the data while a model is running on it.

This is the “data-in-use” exposure that confidential computing addresses. In a normal computation, there are ways to see what is inside: a root user on the cloud host, for example, or a memory-scraping attack. Inside a TEE, that access is blocked by hardware-enforced memory encryption.

For those who want to go deeper on the underlying technology before the enterprise strategy, Confidential Computing 101 is a great place to start. It is a plain-language introduction to TEEs, attestation, and the underlying hardware stack.

What’s Already Shipping – Not Just on Slides

Many “Confidential AI” pieces read like a roadmap document. Here is what is available and in real-world use today:

Cloud-native confidential compute: Azure’s confidential GPU VMs are a production service, as is Google’s Confidential Space. You can already deploy RAG pipelines or fine-tuned models with encrypted memory and attestation built into the infrastructure. This isn’t beta. Enterprises are using it.

GPU TEEs for high-performance workloads: The most demanding AI tasks require GPUs, and NVIDIA H100s can now run workloads inside a TEE. Early confidential AI pilots were forced to use CPU-only TEEs, which are slower and more limited. That restriction is being removed.

Mutual attestation patterns: One of the harder parts of confidential AI is that verification has to run in both directions. Before sensitive data is shared with a vendor-provided AI model, the data owner has to be verified as well as the model. Reference patterns exist, such as the one Red Hat has published. The technical groundwork is in place; it isn’t widespread yet, but it is there.
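The two-way check in the mutual attestation item can be sketched in a few lines of Python. This is a toy model under stated assumptions: `Party`, `measure`, `mutual_attest`, and `release_data` are hypothetical names, and the “evidence” is a bare SHA-256 hash, whereas real evidence would be a hardware-signed attestation report. It is not Red Hat’s published pattern, only an illustration of the control flow: data moves only after each side has verified the other.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Optional


def measure(artifact: bytes) -> str:
    """Toy 'measurement': a SHA-256 digest of what the party is running."""
    return hashlib.sha256(artifact).hexdigest()


@dataclass
class Party:
    name: str
    artifact: bytes  # the client or model-server code (illustrative)
    trusted_peers: set = field(default_factory=set)  # peer measurements it accepts

    def evidence(self) -> str:
        return measure(self.artifact)

    def accepts(self, peer_evidence: str) -> bool:
        return peer_evidence in self.trusted_peers


def mutual_attest(a: Party, b: Party) -> bool:
    # Both directions must pass; one-sided trust is not enough.
    return a.accepts(b.evidence()) and b.accepts(a.evidence())


def release_data(owner: Party, vendor: Party, record: str) -> Optional[str]:
    # The record is handed over only after mutual verification succeeds.
    return record if mutual_attest(owner, vendor) else None


if __name__ == "__main__":
    hospital = Party("hospital", b"client-v1", {measure(b"model-v1")})
    vendor = Party("vendor", b"model-v1", {measure(b"client-v1")})
    print(release_data(hospital, vendor, "example record"))
```

If either side changes what it runs, its measurement changes and the release is refused until the other party updates its allow-list.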

Enterprise AI governance frameworks: Governance has moved a step forward too. Organizations can now get AI-specific risk guidance from bodies such as ISACA. Liminal’s enterprise AI governance guide covers data exposure, IP leakage, and compliance controls in enough detail for regulated industries to put to use. These are not only concepts but checklists tied to tangible controls.

I’ve watched some of this develop myself, and the move from the “Should we use AI?” question to “How do we make sure that we can do it safely?” has been faster than I thought it would be. It is now the audit trail that takes center stage.

The Threats That Changed Everything

It’s worth pointing out what scared enterprise CISOs into taking this seriously.

Prompt injection, where malicious input compromises a model’s behavior, turned out to be harder to patch than anticipated. Model extraction attacks, in which an adversary queries a model often enough to reconstruct it, became a genuinely new IP threat. There are also documented examples of LLMs exposing internal passwords or confidential information from their context window, often in ways the enterprise could not detect or audit.

These aren’t hypothetical. They have driven the shift from governance-as-policy to governance-as-infrastructure.

What’s Still Early and Where Things Are Heading

Other things are only just getting started, and it’s okay to admit it.

End-to-end trust fabrics: The vision is a single platform that combines data governance, model registries, model policy enforcement, and a confidential runtime, all auditable across multi-cloud and hybrid scenarios. Some vendors are promoting this. Few have shipped it. The pieces exist, but they do not yet live together, and integration is the hard part.

Mutual attestation at scale: This is not a thing yet. At pilot scale, bilateral verification between data owners and model vendors is proven. Much work remains to get it working across organisations and across cloud providers, particularly for high-volume inference.

Regulatory clarity: Technical standards are moving faster than legal ones. The rules in various sectors (HIPAA, Basel III for banking AI) are still in flux. Companies building confidential AI platforms now are betting on how the laws will eventually turn out.

For anyone actually implementing this in the cloud, one of the more practical technical guides to explore in detail is Architecting Confidential AI in the Cloud, Google’s reference architecture. It includes real architecture diagrams for confidential RAG, federated learning, and analytics pipelines.

Going through this material, I realized that the most significant challenge isn’t technical. It’s organizational: bringing the legal, security, and engineering departments together. In the majority of businesses, the technology is still a few steps ahead of the process.

Where Confidential AI Is Actually Being Used

Not in research papers. In production pilots.

Banks are running cross-institution fraud detection models inside TEEs without sharing customer data. Hospitals are applying confidential computing to clinical analytics so that PHI never travels outside the hospital’s trusted network.

Government agencies are experimenting with citizen-facing AI that keeps sensitive case information encrypted so that it is not exposed to the public cloud.

These aren’t moonshots. They are specific cases in which data exposure had been an insurmountable obstacle even though the value of AI was evident. Confidential AI was developed to remove that blocker without requiring a revolutionary change in ML methods.

My Take: The Playbook That Actually Works

Here is what this looks like in practice:

Step 1: Inventory and classify your AI workloads by data sensitivity.

Many businesses have already experimented with AI. Which of those workloads touch regulated data? Flag the “blocked but valuable” use cases: the ones where there is clearly a need for AI, but a hard “no” on exposing the data.
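One way to make this inventory concrete is a small script. The Python sketch below is illustrative only: the tag names, the three sensitivity tiers, the 1-to-5 `business_value` score, and the `min_value` threshold are all assumptions, not part of any standard. It classifies each workload by the most sensitive data it touches, then lists the regulated, currently blocked, high-value ones as pilot candidates.

```python
from dataclasses import dataclass
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    REGULATED = 2


# Hypothetical tag-to-tier mapping; replace with your own data classification.
TAG_TIERS = {
    "marketing_copy": Sensitivity.PUBLIC,
    "internal_docs": Sensitivity.INTERNAL,
    "phi": Sensitivity.REGULATED,
    "client_financials": Sensitivity.REGULATED,
}


@dataclass(frozen=True)
class Workload:
    name: str
    data_tags: tuple
    business_value: int  # assumed scale: 1 (low) to 5 (high)
    blocked: bool        # True if compliance has said "no" today


def classify(workload: Workload) -> Sensitivity:
    # Unknown tags are treated as REGULATED so nothing slips through unreviewed.
    tiers = [TAG_TIERS.get(tag, Sensitivity.REGULATED) for tag in workload.data_tags]
    return max(tiers, default=Sensitivity.PUBLIC)


def blocked_but_valuable(workloads, min_value: int = 4):
    candidates = [
        w for w in workloads
        if w.blocked
        and w.business_value >= min_value
        and classify(w) == Sensitivity.REGULATED
    ]
    # Highest-value candidates first.
    return sorted(candidates, key=lambda w: w.business_value, reverse=True)


if __name__ == "__main__":
    inventory = [
        Workload("blog drafts", ("marketing_copy",), 2, False),
        Workload("clinical note summaries", ("phi",), 5, True),
    ]
    for w in blocked_but_valuable(inventory):
        print(w.name, classify(w).name)
```

Treating unknown tags as regulated is a deliberately conservative default; a team could loosen it once its data classification is complete.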

Step 2: Select one high-value workload for a confidential AI pilot. Don’t try to boil the ocean! Pick one blocked use case and rough out a prototype of it using cloud confidential computing services. Evaluate not only performance, but also how much risk is reduced, and whether that reduction can be shown to a regulator as an audit trail.

Step 3: Develop the governance layer in parallel with the technical layer. TEEs protect data in use. They do not automatically address bias, they do not provide explainability, and they do not secure the supply chain. The governance layer should go beyond the infrastructure checklist to include attestation policies, runtime logs, and model provenance.
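For the runtime-log part of the governance layer, one common technique is a hash-chained, append-only log, in which each entry commits to the one before it so that later edits become detectable. The Python sketch below assumes hypothetical event fields (`action`, `model_version`, `attestation_ok`) and helper names (`append_entry`, `verify_chain`); it illustrates the idea and is not a substitute for a real audit system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    # Hash the previous entry's hash together with a canonical form of the event.
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> dict:
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _entry_hash(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False  # an entry was removed, reordered, or relinked
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False  # the event content no longer matches its hash
        prev_hash = entry["hash"]
    return True


if __name__ == "__main__":
    log = []
    append_entry(log, {"action": "model_loaded", "model_version": "v1",
                       "attestation_ok": True})
    append_entry(log, {"action": "inference", "model_version": "v1",
                       "attestation_ok": True})
    print(verify_chain(log))
```

A chain like this only helps if the most recent hash is also recorded somewhere the log’s operator cannot rewrite, which is a design decision for the governance team rather than the code.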

Step 4: Turn the pilot into a repeatable pattern. The objective is not only a working pilot. It is to create the diagrams, threat models, and attestation reports that let other business areas follow along without having to reinvent the process.

In my experience across the deployments I have seen, the teams that win are not always the most technically capable ones. They are the ones who picked a specific problem, won buy-in from stakeholders early on, and brought back evidence that the compliance team could actually use.

Free Resources Worth Bookmarking

Fortunately, you don’t need a paid course for this!

- Confidential Computing for Secure AI Pipelines: Practical micro-content on end-to-end pipeline security, from the Linux Foundation. Free.

- Zero-Trust Architecture for Confidential AI Factories: Covers the GPU threat model and design patterns, on the NVIDIA Developer Blog. Unexpectedly readable.

- ISACA AI Resources: Risk-centric and governance-centric resources; most articles are free and open to access without membership.

- Red Hat: Improving AI Inference Security with confidential computing: Dives into mutual attestation and confidential containers on Kubernetes. Technical but well-structured.

Also worth a look for the wider Confidential AI market, including where standards bodies and open-source groups are heading, is the Confidential Computing Consortium. It is the closest thing to a neutral, vendor-independent view of the space.

FAQs: What People Actually Ask About This

Is confidential AI the same as using a private model?

No. A private model simply means you host the model yourself rather than going through another company. With confidential AI, inference is cryptographically protected so that even the infrastructure provider cannot access the data or model internals.

Do you always need TEEs, or is this overkill for most use cases?

For internal productivity tools that rely on non-sensitive information, it is often overkill. When it comes to financial records, health information, legal documents, or government information, TEEs are no longer a luxury; they are becoming the standard.

Does confidential computing fix bias or explainability problems?

Not directly. It is about confidentiality and integrity, not fairness. But it does enable better provenance (which model version was deployed on which data), and that supports the audit dimension of responsible AI.

Where should a bank or hospital actually start?

Pick one workload that has significant impact but is currently held up by data-access concerns. Prototype it in a cloud Confidential VM. Generate an attestation report. Show that to your compliance staff. It is a better argument than an architecture diagram.

Who Should Be Paying Attention to This Right Now

This is no longer a niche subject if you work in tech and deal with regulated data in any capacity, whether at your own company or advising companies that do. It is emerging as the infrastructure on which enterprise AI is built.

Security architects, cloud architects, and AI product managers in banking, healthcare, insurance, and government are already asking these questions. Confidential infrastructure is what sits between “we use AI” and “we can prove we use AI safely”.

The transition from public experimentation to production-ready AI is not happening everywhere at once. But it is happening faster than expected, and the businesses that realize the technical and governance layers are one and the same, rather than separate, will be the ones able to put AI to use in the right place at the right time.

That is the real opportunity: not only great AI, but AI that regulated industries can trust.

I’m a technology writer with a passion for AI and digital marketing. I create engaging, useful content that makes complex technology concepts easy to follow and sparks curiosity. I continue to research innovation and technology. Let’s connect and talk technology!