Last updated on May 13th, 2026 at 03:22 pm


Why the 4Cs Model Exists and Why It Actually Makes Sense

Kubernetes security guidance tends to bury us in technical jargon. Harden your nodes. Scan your images. Watch your RBAC. The list feels endless, and it arrives in no particular order.

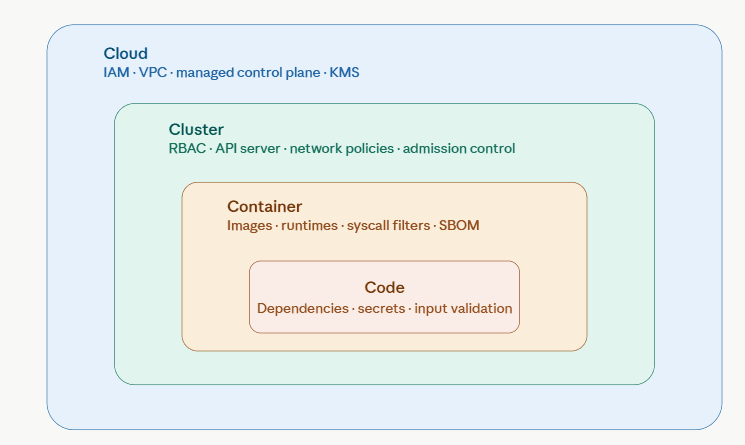

The 4Cs framework (Cloud, Cluster, Container, Code) cuts through that. It gives you a layer-by-layer mental model that starts with the underlying infrastructure and ends with the application you build on top of it. Each layer wraps the one inside it, so a hole in an outer layer puts everything inside it at risk.

It’s the same “defense in depth” concept employed across the spectrum of security disciplines, but applied specifically to the way that Kubernetes environments are deployed.

Below is a quick visual representation of how the layers relate:

Weaknesses propagate inward. A misconfigured cloud IAM role can undermine an otherwise locked-down cluster. And an insecure library in your code can be exploited straight past robust cluster and cloud controls. The direction matters.

Cloud: The Foundation That Most Teams Under-Secure

The Cloud layer covers everything underneath Kubernetes: IAM, VPC networking, storage, key management, and managed control planes (EKS, GKE, AKS). It has the broadest attack surface, and it is often neglected because once the cluster is up and running, it feels like “someone else’s problem.”

The established baseline:

- Least-privilege access: avoid blanket admin rights and grant each identity only what it needs.

- Hardened VPC designs and minimal control-plane exposure.

- Encryption for data at rest and in transit, including etcd backups.

- Network segmentation that separates Kubernetes control-plane nodes from workloads.
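To make the least-privilege point concrete, here is a minimal Python sketch that scans an IAM-style policy document (the AWS JSON shape with `Statement`, `Effect`, `Action`, and `Resource`) for Allow statements that grant every action, or every action of a service. It is an illustrative assumption, not a substitute for a cloud provider’s own policy analyzer: it ignores `Condition`, `NotAction`, and permission boundaries, and the sample node-role policy is hypothetical.

```python
from typing import Any


def as_list(value: Any) -> list:
    """IAM lets Statement, Action, and Resource be a single value or a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def find_wildcard_grants(policy_document: dict) -> list[dict]:
    """Return Allow statements whose actions are "*" or "<service>:*".

    Simplification: Condition, NotAction, and boundaries are not evaluated.
    """
    findings = []
    for statement in as_list(policy_document.get("Statement")):
        if statement.get("Effect") != "Allow":
            continue
        broad_actions = [
            action
            for action in as_list(statement.get("Action"))
            if action == "*" or action.endswith(":*")
        ]
        if broad_actions:
            findings.append(
                {"actions": broad_actions, "resources": as_list(statement.get("Resource"))}
            )
    return findings


# Hypothetical node-role policy: s3:* is the kind of grant that makes pod-to-cloud pivots possible.
node_role_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "ecr:GetAuthorizationToken", "Resource": "*"},
        {"Effect": "Allow", "Action": ["s3:*"], "Resource": "*"},
    ],
}

for finding in find_wildcard_grants(node_role_policy):
    print("over-broad grant:", finding)
```

In practice you would feed it the policies attached to your node and workload roles; a finding is a prompt for review, not proof of exploitability.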

NSA/CISA guidance is explicit that protecting etcd and kubeconfigs is a basic requirement, not an optional hardening step. Leaked kubeconfigs remain among the most damaging failures at this layer.

What still surprises teams:

Cluster-to-cloud lateral movement has become a real threat in recent years. If a node’s IAM role is overly permissive, an attacker who compromises a pod may be able to pivot directly to the cloud resources behind it. The OWASP Kubernetes Top 10 acknowledges this problem, yet teams often overlook it until after a breach.

Cloud providers are tightening their defaults (managed control planes are less publicly exposed than they used to be), and posture-management tools increasingly check Kubernetes configuration alongside cloud configuration. Still, the gap between what is available and what most teams actually use remains large.

The CNCF’s Kubernetes security documentation is also the best reference for Kubernetes security at the infrastructure level.

Cluster: Where Most Security Guidance Lives and Where Gaps Still Hide

The Mature Baseline Everyone Should Already Have

There is plenty of existing guidance for the Cluster layer: the Kubernetes control plane, worker nodes, RBAC, admission control, and network policies. This layer is the focus of the CIS Kubernetes Benchmark (which kube-bench checks automatically) and the NSA/CISA Kubernetes Hardening Guide.

The non-negotiables at this point:

- RBAC enabled, with no unnecessary cluster-admin role bindings or over-privileged service accounts.

- Strong authentication, with anonymous access disabled.

- Encrypted traffic between the API server and its datastore (etcd), and no public IP in front of the API server.

- Audit logs monitored continuously, and nodes isolated when necessary.
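The RBAC item is easy to check mechanically. The following Python sketch is a minimal illustration, assuming you have exported your bindings as JSON (for example with `kubectl get clusterrolebindings,rolebindings -A -o json`) and loaded the `items` list. It flags bindings that grant the built-in `cluster-admin` ClusterRole to any subject other than the `system:masters` group, which a default cluster binds on purpose. The binding names in the sample are hypothetical.

```python
def risky_cluster_admin_bindings(bindings: list[dict]) -> list[str]:
    """Flag bindings that hand the cluster-admin ClusterRole to a subject."""
    findings = []
    for binding in bindings:
        role_ref = binding.get("roleRef") or {}
        if role_ref.get("kind") != "ClusterRole" or role_ref.get("name") != "cluster-admin":
            continue
        binding_name = binding.get("metadata", {}).get("name", "<unnamed>")
        for subject in binding.get("subjects") or []:
            kind, name = subject.get("kind"), subject.get("name")
            # The default cluster-admin binding targets system:masters; review it separately.
            if kind == "Group" and name == "system:masters":
                continue
            namespace = subject.get("namespace")
            target = f"{namespace}/{name}" if namespace else name
            findings.append(
                f"{binding.get('kind', 'Binding')} {binding_name} grants cluster-admin to {kind} {target}"
            )
    return findings


sample_bindings = [
    {  # Mirrors the default binding most clusters ship with.
        "kind": "ClusterRoleBinding",
        "metadata": {"name": "cluster-admin"},
        "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "ClusterRole", "name": "cluster-admin"},
        "subjects": [{"kind": "Group", "name": "system:masters"}],
    },
    {  # Hypothetical CI service account that was given far too much.
        "kind": "ClusterRoleBinding",
        "metadata": {"name": "ci-deployer-admin"},
        "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "ClusterRole", "name": "cluster-admin"},
        "subjects": [{"kind": "ServiceAccount", "name": "deployer", "namespace": "ci"}],
    },
]

for line in risky_cluster_admin_bindings(sample_bindings):
    print(line)
```

A RoleBinding that references `cluster-admin` is flagged too, since it still grants full control of that namespace.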

These are no longer nice-to-haves; they are baselines. Running production workloads without them is a serious risk.

Hardening a Kubernetes cluster against the CIS Benchmarks turns this into a checklist of pass/fail results rather than a list of open-ended suggestions.

What People Miss: Policy-as-Code Isn’t Optional Anymore

A newer gap sits at the Cluster layer: centralized policy enforcement. Most teams apply controls ad hoc, per namespace or per team. OWASP specifically calls out the lack of cluster-wide policy enforcement as one of the major risks.

Admission controllers built on policy engines such as Open Policy Agent (OPA) Gatekeeper, Kyverno, and others let you declare pod security, image provenance, and workload configuration policies that are checked before workloads are scheduled into the cluster. I’ve used OPA Gatekeeper in multi-team environments, and the difference between per-namespace YAML rules and a centrally enforced policy engine is huge: misconfigured pods are rejected before they ever reach production.
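Real admission policies are written in each engine’s own language (Rego for Gatekeeper, YAML policies for Kyverno), so the Python below is not either engine’s API. It is only a sketch of the logic such a policy encodes, assuming two example image-provenance rules: images must come from an approved registry (the hypothetical `registry.example.com`) and must be pinned by digest rather than a mutable tag. The admission decision is simply “allow only if there are no violations.”

```python
# Hypothetical approved registry; replace with your own.
ALLOWED_REGISTRIES = ("registry.example.com/",)


def admission_violations(pod: dict) -> list[str]:
    """Image-provenance checks an admission policy might enforce."""
    spec = pod.get("spec", {})
    containers = (spec.get("initContainers") or []) + (spec.get("containers") or [])
    violations = []
    for container in containers:
        name, image = container.get("name", "<unnamed>"), container.get("image", "")
        if not image.startswith(ALLOWED_REGISTRIES):
            violations.append(f"{name}: {image!r} is not from an approved registry")
        if "@sha256:" not in image:
            violations.append(f"{name}: {image!r} is not pinned by digest")
    return violations


def admit(pod: dict) -> tuple[bool, list[str]]:
    """Reject the pod if any rule is violated; otherwise allow it."""
    violations = admission_violations(pod)
    return not violations, violations


risky_pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {"containers": [{"name": "web", "image": "docker.io/library/nginx:latest"}]},
}
allowed, reasons = admit(risky_pod)
print("allowed" if allowed else "denied", reasons)
```

Running the same check centrally, at admission, is what removes the per-namespace drift described above.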

The shift is from “audit after deployment” to “enforce at admission.” Alongside it, kube-bench scans and CIS Benchmark checks are moving into continuous posture-management workflows instead of one-off audits.

Container: Images Are the Attack Vector Nobody Takes Seriously Enough

The Vectors That Keep Showing Up in Incidents

Every container inherits the weaknesses of the base OS layers it is built on. That may sound like a trivial point, but in my experience teams routinely overlook it, and I expect many still do.

The standard checklist at this layer:

- Scan base images and packages before deployment (and continuously in the registry).

- Avoid unsafe or untrusted source images.

- Run containers as non-root users.

- Drop unnecessary Linux capabilities.

- Use read-only root filesystems wherever possible.

Privileged containers are one of the most prevalent misconfigurations. A privileged container can access host devices and the host filesystem, load kernel modules, and effectively break out of the container. It is a well-known attack path that shows up frequently in incidents.
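The checklist and the privileged-container warning translate directly into `securityContext` fields. Here is a minimal Python sketch, assuming the pod manifest has been loaded as a dict, that reports the container-level gaps discussed above. It only looks at `containers` (not `initContainers` or ephemeral containers) and ignores other risky settings such as `hostPath` volumes or `hostPID`, so treat it as an illustration rather than a complete Pod Security Standards check.

```python
def container_findings(pod: dict) -> list[str]:
    """Report securityContext gaps for each container in a pod manifest."""
    spec = pod.get("spec", {})
    pod_context = spec.get("securityContext") or {}
    findings = []
    for container in spec.get("containers") or []:
        name = container.get("name", "<unnamed>")
        ctx = container.get("securityContext") or {}
        if ctx.get("privileged", False):
            findings.append(f"{name}: runs privileged")
        # A container-level runAsNonRoot overrides the pod-level value.
        if not ctx.get("runAsNonRoot", pod_context.get("runAsNonRoot", False)):
            findings.append(f"{name}: runAsNonRoot is not enforced")
        if not ctx.get("readOnlyRootFilesystem", False):
            findings.append(f"{name}: root filesystem is writable")
        # Privilege escalation is allowed unless explicitly disabled.
        if ctx.get("allowPrivilegeEscalation", True):
            findings.append(f"{name}: privilege escalation is allowed")
        dropped = (ctx.get("capabilities") or {}).get("drop") or []
        if "ALL" not in dropped:
            findings.append(f"{name}: capabilities are not dropped (expected drop: [ALL])")
    return findings


hardened_pod = {
    "spec": {
        "securityContext": {"runAsNonRoot": True},
        "containers": [{
            "name": "api",
            "image": "registry.example.com/api@sha256:" + "b" * 64,
            "securityContext": {
                "allowPrivilegeEscalation": False,
                "readOnlyRootFilesystem": True,
                "capabilities": {"drop": ["ALL"]},
            },
        }],
    }
}
privileged_pod = {"spec": {"containers": [{"name": "debug", "securityContext": {"privileged": True}}]}}

print(container_findings(hardened_pod))
print(container_findings(privileged_pod))
```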

The container-security entries in the OWASP Kubernetes Top Ten give you direct insight into the biggest container-level problems seen in real-world compromise scenarios.

What’s Actually Evolving Here

Three things are changing at the container layer right now:

- Software Bills of Materials (SBOMs) are becoming the norm, providing a full inventory of everything inside each image. That lets you react faster when a new CVE lands against a library you ship.

- Sigstore and cosign bring cryptographic image signing to everyone, so you can verify a signature or attestation instead of trusting an image tag.

- Runtime detection is spreading: a growing number of tools watch system calls and network activity in production. They catch abnormal container behavior that static scanning cannot, for example a container that was built clean but now behaves like cryptomining malware.
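The SBOM point is what makes fast CVE response possible: once every image has an inventory, “which images ship the vulnerable library?” becomes a lookup. Here is a minimal Python sketch, assuming CycloneDX-style JSON SBOMs already loaded as dicts; only the top-level `components` list with `name` and `version` is read, and nested components are ignored. The image names are hypothetical, and the affected-version set is illustrative rather than a complete advisory.

```python
def images_shipping(sboms: dict[str, dict], package: str, affected: set[str]) -> dict[str, list[str]]:
    """Map image -> affected versions of `package` found in its SBOM."""
    hits = {}
    for image, bom in sboms.items():
        versions = sorted({
            component.get("version", "")
            for component in bom.get("components") or []
            if component.get("name") == package and component.get("version") in affected
        })
        if versions:
            hits[image] = versions
    return hits


sboms = {
    "orders:1.8.2": {
        "bomFormat": "CycloneDX",
        "components": [
            {"type": "library", "name": "log4j-core", "version": "2.14.1"},
            {"type": "library", "name": "jackson-databind", "version": "2.13.0"},
        ],
    },
    "frontend:3.0.0": {
        "bomFormat": "CycloneDX",
        "components": [{"type": "library", "name": "react", "version": "18.2.0"}],
    },
}

# Illustrative subset of affected versions, not a full advisory.
print(images_shipping(sboms, "log4j-core", {"2.14.0", "2.14.1"}))
```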

Code: The Layer That’s Understood But Inconsistently Applied

Why Secure Coding Advice Doesn’t Stick in Kubernetes Environments

Secure-coding guidance is not exactly famous for sticking in people’s heads.

Good development practices are well known: input sanitization, proper secrets storage, dependency updates, no hardcoded credentials, and so on. The problem isn’t what you know; it’s consistency across the teams sharing the same cluster.

Even when the cluster and cloud layers are well secured, a single vulnerable library can compromise many images at once. Log4Shell brought this to the forefront: a vulnerability at the code layer reached a vast number of containerized workloads, no matter how well the surrounding layers had been locked down.

Secrets Management in Kubernetes is a good reference on handling secrets correctly at the code and configuration level, especially when integrating with external secret stores.

Where Code Security Is Heading

Integration is the key evolution at this layer. Software Composition Analysis (SCA), secrets scanning, and IaC security checks are being built into CI/CD pipelines, so application code, container definitions, and Kubernetes manifests are validated in one place.

That shift, from individual standalone scans to a single pipeline validation, is significant. It means a vulnerable library can be caught before it becomes a vulnerable image, so it never reaches the cluster.

In my experience, teams that enforce SCA at merge time do much better than teams that run a weekly batch SCA scan. The weekly approach invariably leaves known vulnerabilities in production for days while tickets queue up.
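A merge-time gate can be as small as “fail the check if any pinned dependency has a known vulnerability.” The Python sketch below assumes a `requirements.txt`-style file with exact `name==version` pins and a hypothetical advisory mapping (`example-yaml` and its versions are made up); a real pipeline would pull advisories from a vulnerability database through an SCA tool. Names are normalized as PEP 503 describes, so `Example_YAML` and `example-yaml` match.

```python
import re

# Hypothetical advisory data: normalized package name -> vulnerable versions.
ADVISORIES = {"example-yaml": {"5.3", "5.3.1"}}


def normalize(name: str) -> str:
    """PEP 503 name normalization: lowercase, runs of -_. become a single '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def vulnerable_pins(requirements_text: str, advisories: dict[str, set[str]]) -> list[str]:
    """Return exact pins (name==version) that match a known-vulnerable version."""
    findings = []
    for raw_line in requirements_text.splitlines():
        # Drop comments and environment markers; this sketch only handles exact pins.
        line = raw_line.split("#", 1)[0].split(";", 1)[0].strip()
        if "==" not in line:
            continue
        name, version = (part.strip() for part in line.split("==", 1))
        name = name.split("[", 1)[0]  # strip extras such as pkg[extra]
        if version in advisories.get(normalize(name), set()):
            findings.append(f"{name}=={version}")
    return findings


def merge_gate(requirements_text: str, advisories: dict[str, set[str]]) -> int:
    """Exit code for CI: 0 lets the merge through, 1 blocks it."""
    findings = vulnerable_pins(requirements_text, advisories)
    for finding in findings:
        print(f"BLOCKED: {finding} has a known vulnerability")
    return 1 if findings else 0


requirements = """\
Example_YAML==5.3      # pulled in by a config loader
requests==2.31.0
"""
print("exit code:", merge_gate(requirements, ADVISORIES))
```

In CI you would read the file from the branch under review and pass the return value to `sys.exit`, so the merge check fails before the library ever becomes part of an image.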

The Challenges That Cut Across All Four Layers

Real attacks rarely map cleanly onto a single layer. Typical incidents are a chain:

- Vulnerable code (Code) allows initial code execution; a missing network policy (Cluster) lets the container reach the instance metadata API and pull the node’s cloud credentials; and an over-permissive IAM role (Cloud) turns those credentials into broad access to cloud resources.
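One way to cut this chain at the Cluster layer is a NetworkPolicy that stops pods from reaching the link-local metadata address `169.254.169.254`, which AWS, Azure, and Google Cloud all use for instance metadata. The Python below is a sketch that builds such a policy as a dict and prints JSON, which `kubectl apply -f` accepts. Assumptions to note: your CNI plugin must enforce NetworkPolicy; the rule covers IPv4 only; and because NetworkPolicies are additive allow-lists, another policy that allows this egress for the same pods would defeat the intent. The namespace name is hypothetical.

```python
import json

# Link-local instance metadata endpoint (IPv4) used by the major cloud providers.
METADATA_CIDR = "169.254.169.254/32"


def block_metadata_egress(namespace: str) -> dict:
    """NetworkPolicy allowing pod egress everywhere except the metadata endpoint."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "deny-instance-metadata", "namespace": namespace},
        "spec": {
            "podSelector": {},  # empty selector: every pod in the namespace
            "policyTypes": ["Egress"],
            "egress": [{
                "to": [
                    # ipBlock is meant for cluster-external IPs, so allow in-cluster pods explicitly.
                    {"namespaceSelector": {}},
                    {"ipBlock": {"cidr": "0.0.0.0/0", "except": [METADATA_CIDR]}},
                ]
            }],
        },
    }


print(json.dumps(block_metadata_egress("payments"), indent=2))
```

Pods running with `hostNetwork: true` are not covered by NetworkPolicy, so they need separate controls.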

The failure patterns that recur across the 4Cs:

Insecure workload configuration: misconfigured pods, over-privileged containers, and dashboards exposed to the internet. Insecure workload configurations lead the OWASP Kubernetes risk list, and this is the most common problem.

Overly permissive RBAC: broad role bindings and cluster-admin service accounts show up in incident reports again and again. The default in most clusters is “give it whatever it needs to work” rather than “give it the minimum it needs to function.”

Secrets management failures: long-lived tokens, leaked kubeconfigs, and application secrets kept in environment variables instead of a secrets manager. This one cuts across all four layers.
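The worst form of the environment-variable problem is a secret written as a literal `value` in the manifest itself, where it lands in Git and in every copy of the YAML. This Python sketch flags that case using a naming heuristic (the regular expression is an assumption; dedicated secret scanners use much richer rules). Variables sourced through `valueFrom.secretKeyRef` are not flagged, even though they still end up in the process environment; moving those to mounted files or an external secrets manager is the further step.

```python
import re

# Heuristic only: names that usually indicate a credential.
SECRET_NAME = re.compile(r"PASSWORD|PASSWD|SECRET|TOKEN|API_?KEY|PRIVATE_?KEY", re.IGNORECASE)


def literal_secret_env(pod: dict) -> list[str]:
    """Flag env vars with secret-like names whose value is hardcoded in the manifest."""
    findings = []
    for container in pod.get("spec", {}).get("containers") or []:
        for env in container.get("env") or []:
            name = env.get("name", "")
            # A literal "value" key means the secret is stored in plain text in the manifest.
            if "value" in env and SECRET_NAME.search(name):
                findings.append(f"{container.get('name')}: {name} is set as a literal value")
    return findings


pod = {
    "spec": {
        "containers": [{
            "name": "api",
            "env": [
                {"name": "DB_PASSWORD", "value": "hunter2"},
                {"name": "API_TOKEN", "valueFrom": {"secretKeyRef": {"name": "api", "key": "token"}}},
                {"name": "LOG_LEVEL", "value": "info"},
            ],
        }]
    }
}

print(literal_secret_env(pod))
```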

End-of-life components: unsupported Kubernetes versions and unpatched base images leave known vulnerabilities unfixed and erode your visibility and your ability to detect and respond to a compromise.

Lateral movement: how easily an attacker can move between pods comes as a shock to teams that assumed pods were isolated by default. They’re not. Until a Kubernetes NetworkPolicy selects a pod, it accepts traffic from any other pod in the cluster.
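Because a pod is only isolated once some NetworkPolicy selects it, a useful audit is “which pods does no policy select?” The Python sketch below assumes pods and NetworkPolicies have been exported as dicts. It evaluates `matchLabels` only (it refuses selectors with `matchExpressions` rather than guessing), and when `policyTypes` is omitted it applies the API’s defaulting rule: Ingress always, Egress only if egress rules are present. The workload names are hypothetical.

```python
def selects(selector: dict, labels: dict) -> bool:
    """Evaluate a label selector; an empty selector matches every pod."""
    if selector.get("matchExpressions"):
        raise NotImplementedError("this sketch only evaluates matchLabels")
    return all(labels.get(key) == value for key, value in (selector.get("matchLabels") or {}).items())


def policy_types(policy: dict) -> list[str]:
    """Apply the API default when policyTypes is omitted."""
    spec = policy.get("spec", {})
    if "policyTypes" in spec:
        return spec["policyTypes"]
    return ["Ingress", "Egress"] if spec.get("egress") else ["Ingress"]


def unisolated_pods(pods: list[dict], policies: list[dict], direction: str = "Ingress") -> list[str]:
    """Return namespace/name of pods that no NetworkPolicy selects for `direction`."""
    exposed = []
    for pod in pods:
        meta = pod["metadata"]
        namespace = meta.get("namespace", "default")
        labels = meta.get("labels") or {}
        covered = any(
            policy["metadata"].get("namespace", "default") == namespace
            and direction in policy_types(policy)
            and selects(policy["spec"].get("podSelector") or {}, labels)
            for policy in policies
        )
        if not covered:
            exposed.append(f"{namespace}/{meta['name']}")
    return exposed


pods = [
    {"metadata": {"name": "web", "namespace": "shop", "labels": {"app": "web"}}},
    {"metadata": {"name": "worker", "namespace": "shop", "labels": {"app": "worker"}}},
    {"metadata": {"name": "api", "namespace": "billing", "labels": {"app": "api"}}},
]
policies = [
    {"metadata": {"name": "web-ingress", "namespace": "shop"},
     "spec": {"podSelector": {"matchLabels": {"app": "web"}}, "policyTypes": ["Ingress"]}},
]

print(unisolated_pods(pods, policies))
```

A namespace-wide default-deny policy (an empty `podSelector`) is the usual way to close the gaps this reports.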

Where Things Are Heading: The 4Cs in 2025 and Beyond

Across all four layers, the direction of travel is toward automation and continuous checking, and away from manual hardening and one-off audits.

Assume-breach design. More and more guidance, whether from the CNCF or from vendors, assumes that at least one layer will fail. The emphasis is on containment and reducing the blast radius, making it harder for an attacker to get further after breaching the first ring.

Policy-as-code at scale. Policy engines and admission controllers are becoming standard cluster components rather than add-ons. Per-namespace controls tend to erode unevenly and leave gaps across the whole cluster, which is why centralized enforcement can rescue the Cluster layer.

Continuous posture management. kube-bench results shouldn’t live in a spreadsheet; scans should run automatically and frequently.
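What makes repeated scans valuable is comparing them. The Python sketch below assumes each run has already been reduced to a flat mapping of CIS check ID to status; the PASS/FAIL/WARN labels mirror the statuses kube-bench reports, but the flat dictionary is a simplification and not kube-bench’s JSON output format. The check IDs in the sample are illustrative.

```python
def regressions(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """Check IDs that passed in the previous run but no longer pass."""
    return sorted(
        check_id
        for check_id, status in current.items()
        if status != "PASS" and previous.get(check_id) == "PASS"
    )


def new_checks_not_passing(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """Checks that appear for the first time and are not passing."""
    return sorted(
        check_id
        for check_id, status in current.items()
        if check_id not in previous and status != "PASS"
    )


last_week = {"1.2.1": "PASS", "1.2.6": "FAIL", "4.2.1": "PASS"}
today = {"1.2.1": "FAIL", "1.2.6": "FAIL", "4.2.1": "PASS", "5.1.1": "WARN"}

print("regressed:", regressions(last_week, today))
print("new and not passing:", new_checks_not_passing(last_week, today))
```

A scheduled job that alerts on any regression turns a static report into an ongoing signal.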

Runtime forensics and kernel-level visibility. Static scanning identifies known vulnerabilities before deployment; runtime tools catch behavioral anomalies such as cryptomining, lateral movement, and odd network connections. Both are necessary, and neither is complete on its own.

Supply-chain focus. The conversation has moved upstream: build pipelines and library ecosystems are where many vulnerabilities and misconfigurations originate. Your cluster is not the only thing that belongs inside the security fence; your CI/CD pipeline, image registry, and dependency sources do too.

Free Resources Worth Actually Using

This isn’t a padded list; these are genuinely useful starting points:

- CNCF Kubernetes Security Best Practices: the official guide from the CNCF, which maps directly to the 4C model.

- OWASP Kubernetes Top Ten: real-world risks, ranked by frequency and impact, that map onto the 4C layers.

- NSA/CISA Kubernetes Hardening Guidance: authoritative and detailed, and broken down into more digestible pieces by ARMO.

- KodeKloud CKS GitHub: community course notes with explicit 4C coverage and kube-bench labs.

- Wiz’s best practices for securing Kubernetes clusters: relevant, current, and easy to follow, covering RBAC, runtime forensics, upgrades, and logging.

If you’re interested in runtime security for running containers, Falco’s documentation is a great resource: Falco performs kernel-level runtime detection, and its docs cover it in more depth than most blog posts. For build pipelines, SLSA (Supply-chain Levels for Software Artifacts) defines security levels for the integrity of your software supply chain, from source to deployment.

Who Should Use the 4Cs Model and How

The 4Cs framework isn’t just for security engineers. It’s useful for:

- Application developers, whose decisions affect other layers of a containerized application

- Platform engineers setting admission policies and cluster defaults

- Security teams running audits or building a security backlog

- Anyone studying for the CKS or KCSA exams: the 4C model is explicitly referenced, and reasoning layer by layer rather than tool by tool builds a better mental model and helps on the exam

Actions to take:

- Map every misconfiguration and incident to a particular layer. Is it a Cloud IAM problem, a Cluster RBAC problem, or a Container runtime problem? This naturally improves root-cause analysis.

- Run a structured review: for each application, work through cloud configuration, cluster controls, container posture, and code issues in turn.

- Build your security roadmap incrementally: shape the backlog around the OWASP risks and use the CIS Benchmarks as your baseline requirements.
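The first action, mapping findings to layers, is easy to automate once your scanners emit consistent categories. The Python sketch below assumes a hypothetical category-to-layer table (the category names are made up for illustration) and tallies findings in outside-in order, so the backlog reads Cloud, Cluster, Container, Code. Anything without a mapping is counted as “Unmapped” rather than silently dropped.

```python
from collections import Counter
from enum import Enum


class Layer(Enum):
    # Definition order is outside-in, and Enum iteration preserves it.
    CLOUD = "Cloud"
    CLUSTER = "Cluster"
    CONTAINER = "Container"
    CODE = "Code"


# Hypothetical finding categories; adapt to whatever your scanners emit.
CATEGORY_LAYER = {
    "iam-wildcard": Layer.CLOUD,
    "public-control-plane": Layer.CLOUD,
    "cluster-admin-binding": Layer.CLUSTER,
    "missing-network-policy": Layer.CLUSTER,
    "privileged-container": Layer.CONTAINER,
    "unsigned-image": Layer.CONTAINER,
    "vulnerable-dependency": Layer.CODE,
    "hardcoded-secret": Layer.CODE,
}


def backlog_by_layer(findings: list[str]) -> dict[str, int]:
    """Count findings per 4C layer, ordered outside-in, keeping unmapped ones."""
    counts = Counter(
        CATEGORY_LAYER[f].value if f in CATEGORY_LAYER else "Unmapped" for f in findings
    )
    order = [layer.value for layer in Layer] + ["Unmapped"]
    return {name: counts[name] for name in order if counts[name]}


findings = [
    "privileged-container",
    "iam-wildcard",
    "privileged-container",
    "vulnerable-dependency",
    "exposed-dashboard",
]
print(backlog_by_layer(findings))
```

A growing “Unmapped” count is itself useful: it shows where your categories, or your understanding of a finding, need work.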

Honest Take

The 4Cs model works in practice because it reflects how Kubernetes environments actually break: outside-in, layer by layer, through a chain of minor issues rather than one dramatic explosion. Teams that secure each layer in isolation and assume the others are taken care of tend to find the holes in the worst possible way.

The framework doesn’t make security easy. It does make it structured, though most teams still need a practical starting point first.

I’m a technology writer with a passion for AI and digital marketing. I create engaging and useful content that bridges the gap between complex technology concepts and digital technologies. My writing aims to make the process easy, spark curiosity, and encourage participation. I continue to research innovation and technology. Let’s connect and talk technology!