Most people think about website security the wrong way. They picture it as a wall you build once and walk away from. The reality? It is closer to a house you live in: things break, habits matter, and leaving the back door unlocked gets you robbed even with three deadbolts on the front door.

The threat environment in 2026 is not simply a matter of smarter hackers. It is that attackers using AI tools can now scan, probe, and exploit at a pace that rivals a full human team. At the same time, the typical website has grown more and more complicated: APIs everywhere, third-party scripts, hosted backends, npm packages you do not remember installing last year.

This guide cuts through the noise. The practices below are the ones that make a real difference, and every development team, solo founder, and web product manager should be applying them, no matter how good the product is.

The Basics That Still Break Most Sites

Updates Are Boring Until They’re Not

As of 2026, outdated software is still one of the most common breach vectors. Not outdated by years; sometimes outdated by a week. A critical vulnerability in a CMS plugin disclosed on a Tuesday can be exploited at scale by Friday.

The fix is almost too obvious: automate security patches where you can, monitor your dependencies with a scanner, and fix critical issues within days rather than at your next sprint review.

I have been on dev teams that left 47 outdated packages sitting in production for months because nothing broke. Nothing broke visibly. That is the dangerous part.

Practical moves:

- Turn on automatic updates for your CMS core and security patches.

- Use a tool such as Dependabot or Snyk to identify vulnerable libraries.

- Schedule a weekly 15-minute dependency check-in; it takes far less time than a postmortem.
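To make "fix critical problems within days" concrete, here is a minimal sketch of the kind of check a weekly review could run. The `Advisory` type and the patch windows in `PATCH_WINDOW_DAYS` are assumptions for illustration, not an official standard; in practice the advisory data would come from whatever scanner you use.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical patch windows (in days) per severity; tune to your own policy.
PATCH_WINDOW_DAYS = {"critical": 3, "high": 14, "medium": 30, "low": 90}


@dataclass
class Advisory:
    package: str
    severity: str    # "critical", "high", "medium", or "low"
    published: date  # date the advisory was published


def overdue(advisories: list[Advisory], today: date) -> list[Advisory]:
    """Return advisories that have been open longer than their patch window."""
    late = []
    for adv in advisories:
        deadline = adv.published + timedelta(days=PATCH_WINDOW_DAYS[adv.severity])
        if today > deadline:
            late.append(adv)
    return late
```

Anything this returns is a patch that has already waited longer than your own policy allows, which makes it a natural agenda for the 15-minute check-in.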

Passwords Aren’t the Weakest Link – Password Habits Are

Most account takeover attacks are still driven by weak credentials. The 2026 guidance no longer focuses on changing your password every 90 days. Instead, it pushes more practical rules: long, high-entropy passphrases and unique credentials per system.

The new standard is passkeys or FIDO2 / WebAuthn multi-factor authentication. SIM-swap attacks are a growing, real-world threat to SMS-based 2FA, so SMS codes should be treated as a last resort, no longer a strong line of defense.

If you rely on an SMS code and Admin123!, you are not secured. You are simply hoping that attackers pick someone else.
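As a rough illustration of what "long, high-entropy passphrase" means, here is a small sketch that generates one with Python's `secrets` module and estimates its entropy. The function names are mine, and the word list is assumed to be supplied by you (for example, a diceware-style list).

```python
import math
import secrets


def generate_passphrase(wordlist: list[str], words: int = 5, separator: str = "-") -> str:
    """Pick `words` entries uniformly at random using a cryptographic RNG."""
    if len(set(wordlist)) < 2:
        raise ValueError("wordlist needs at least two distinct words")
    return separator.join(secrets.choice(wordlist) for _ in range(words))


def entropy_bits(wordlist_size: int, words: int) -> float:
    """Entropy of a passphrase drawn uniformly from a list of distinct words."""
    return words * math.log2(wordlist_size)
```

With a 7,776-word list, five words work out to roughly 64.6 bits of entropy. The estimate only holds when the words are chosen randomly, not when a person picks a phrase they like.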

Access Control Is Where Most Apps Fall Apart

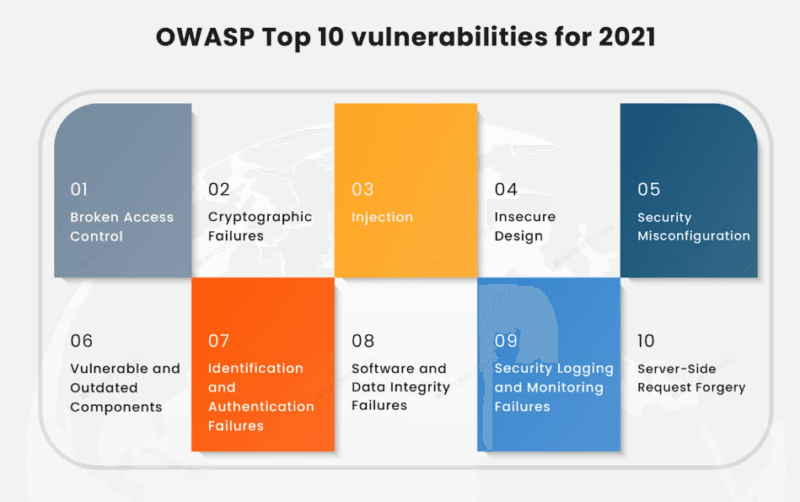

Broken access control sits at the top of the OWASP Top 10 for a reason. It is not an exotic weakness. No elaborate exploit is involved. It is simply... an API endpoint that does not verify that you are authorised to view the data you are requesting.

Least-privilege models, properly enforced access control, and systematic authorization checks on every request are now baseline expectations. Not advanced controls. Not something to bolt on afterwards.

If your app trusts the client to enforce what the user can see, you already have the problem.
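A minimal sketch of what a server-side, per-request authorization check looks like. Everything here (`User`, `Invoice`, the in-memory `INVOICES` store, the `Forbidden` exception) is hypothetical and stands in for your own models and framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    id: int
    role: str  # e.g. "admin" or "member"


@dataclass(frozen=True)
class Invoice:
    id: int
    owner_id: int


class Forbidden(Exception):
    """Raised when the caller may not access the requested resource."""


# Hypothetical in-memory store standing in for a database.
INVOICES = {1: Invoice(1, owner_id=10), 2: Invoice(2, owner_id=20)}


def get_invoice(current_user: User, invoice_id: int) -> Invoice:
    """Fetch an invoice only if the authenticated user is allowed to see it."""
    invoice = INVOICES.get(invoice_id)
    allowed = invoice is not None and (
        current_user.role == "admin" or invoice.owner_id == current_user.id
    )
    if not allowed:
        # Same error for "missing" and "not yours", so IDs cannot be enumerated.
        raise Forbidden(f"invoice {invoice_id} is not accessible")
    return invoice
```

The point is that the decision uses the identity the server authenticated, never an ID or role supplied by the browser, and that it runs on every request for the resource.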

HTTPS Is the Floor, Not the Ceiling

Many site owners see the padlock in the browser and believe their work is done. The padlock only tells you that data is encrypted in transit. It says nothing about what is going on inside your application.

The 2026 baseline asks for more: enforce HTTPS on every page, use HTTP Strict Transport Security (HSTS) to block protocol downgrades, disable old TLS versions, and set security headers at the load balancer or edge.

Headers such as Content-Security-Policy, X-Frame-Options, and Permissions-Policy are not nice-to-haves. They are the difference between a site that contains an XSS attempt and a site that does not.
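Here is a sketch of a baseline header set, written as a plain function so it can sit in front of any framework. The specific values are assumptions and a starting point only: a real Content-Security-Policy in particular has to be tuned to the scripts and assets your site actually loads.

```python
# Assumed baseline values; adjust each policy to your own application.
SECURITY_HEADERS = {
    "Strict-Transport-Security": "max-age=31536000; includeSubDomains",
    "Content-Security-Policy": "default-src 'self'; frame-ancestors 'none'",
    "X-Frame-Options": "DENY",
    "X-Content-Type-Options": "nosniff",
    "Referrer-Policy": "strict-origin-when-cross-origin",
    "Permissions-Policy": "camera=(), microphone=(), geolocation=()",
}


def with_security_headers(headers: dict[str, str]) -> dict[str, str]:
    """Return response headers with the baseline added.

    Values the application set explicitly win over the defaults.
    Header names are matched case-sensitively in this sketch.
    """
    merged = dict(SECURITY_HEADERS)
    merged.update(headers)
    return merged
```

In production the same set is usually applied at the load balancer or edge, as described above, so that no individual route can forget it.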

My Take on WAFs – They’re Not a Magic Shield

Web Application Firewalls (WAFs) and WAAP (Web Application and API Protection) platforms are genuinely useful. I have seen them intercept SQL injection attempts, stop credential stuffing runs, and provide virtual patching while a code fix was being released.

But they are also heavily overmarketed. A badly configured WAF does not deserve your trust. And advanced attackers know how to probe rule-based systems.

A WAF should be one part of a layered defense, not a complete solution on its own. Combine it with input validation, correct output encoding, and rate limiting and you have something real.

Newer platforms are adding AI-driven behavioral analysis and invisible CAPTCHA to block bots without hurting user experience. That is where things are heading, and it is genuinely helpful for high-traffic applications.
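Rate limiting, one of the layers mentioned above, is simple enough to sketch. This is a basic token bucket, one common approach among several; the class and parameter names are mine, and a real deployment would keep one bucket per client key (IP, API key, account) in shared storage.

```python
import time
from typing import Optional


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at a steady rate."""

    def __init__(self, capacity: int, refill_per_second: float,
                 now: Optional[float] = None) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)
        self.updated = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        """Consume one token if available; return False when the caller is over the limit."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

The `now` parameter exists only so the behaviour can be tested with fixed timestamps; normal callers leave it out.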

Never Trust User Input – Ever

Injection and XSS remain leading risks because developers keep concatenating untrusted data into queries or HTML. This is technically a solved problem that still shows up in production code.

The defenses are familiar:

- Parameterized queries for every database interaction.

- Context-aware output encoding (not blanket HTML escaping everywhere).

- Strict validation at every entry point.

- The “never trust user input” principle applied consistently, not selectively.

In my experience, most of the injection issues I found were in code that had been added quickly under time pressure. Security shortcuts tend to become permanent.
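The first two defenses in the list can be sketched with nothing but the Python standard library. The `users` table and function names are assumptions for illustration.

```python
import html
import sqlite3


def find_user(conn: sqlite3.Connection, username: str):
    """Look up a user with a parameterized query.

    The `?` placeholder passes the value separately from the SQL text,
    so input such as "' OR '1'='1" is treated as data, not as SQL.
    """
    cur = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cur.fetchone()


def render_greeting(username: str) -> str:
    """Encode untrusted text for an HTML body context before embedding it."""
    return f"<p>Hello, {html.escape(username)}</p>"
```

Note that `html.escape` is only the right encoder for HTML text and quoted attribute values. Data placed in a URL, a JavaScript string, or a CSS value needs the encoder for that context, which is what "context-aware" means in practice.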

What I Found When I Actually Ran Website Security Vulnerability Testing

Running proper website security vulnerability testing on a mid-size web application showed me one thing most checklists do not cover: the scariest findings were the ones that took no skill to exploit. They were obvious misconfigurations sitting in plain sight.

Default credentials on the admin interface. Directory listing enabled on a production server. File permissions set to 777 because that made the upload problem go away. These are actual findings from real audits, and they are genuinely shocking.

Good configuration hygiene in 2026 comes down to:

- Disabling directory browsing on the web server.

- Setting file permissions to 644/755 (not 777).

- Removing or renaming default administration paths.

- Keeping internal paths and error details hidden.

- Using immutable or read-only configurations where feasible.
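The permissions item is easy to check automatically. A small sketch, assuming a POSIX filesystem; the function names are mine, and the directory you scan (for example your web root) is up to you.

```python
import os
import stat


def is_world_writable(mode: int) -> bool:
    """True if the 'other' write bit is set, as it is in 777 or 666."""
    return bool(mode & stat.S_IWOTH)


def find_world_writable(root: str) -> list[str]:
    """Walk `root` and return files and directories that any user can write to."""
    flagged = []
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            mode = os.lstat(path).st_mode
            if stat.S_ISLNK(mode):
                continue  # symlink modes do not reflect the target's permissions
            if is_world_writable(mode):
                flagged.append(path)
    return sorted(flagged)
```

An empty result from `find_world_writable` on your web root is the goal. Anything it returns is a path where a 777-style shortcut is still in place.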

Running a vulnerability scan isn’t a one-time thing either. When you add DAST (Dynamic Application Security Testing) to your CI/CD pipeline, every deployment gets checked, including the ones you would not bother to check manually.

Backups Are a Security Feature, Not Just IT Hygiene

Backup and recovery are now part of the security discussion because of ransomware and destructive attacks. NIST SP 800-53 is explicit that organizations need continuity and incident recovery plans, not only prevention plans.

A backup that has not been tested is not a backup. It’s a hope. Run restore tests twice a year. Isolate backups from your primary infrastructure. Write your incident response runbook now; do not wait until you are in the middle of a live breach.
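One way to make a restore test more than a visual check is to compare checksums recorded at backup time against what actually comes back. This is a simplified sketch with hypothetical names; it works on in-memory bytes, where a real test would read the restored files from disk.

```python
import hashlib


def checksum(data: bytes) -> str:
    """SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(data).hexdigest()


def failed_restores(manifest: dict[str, str], restored: dict[str, bytes]) -> list[str]:
    """Return names of files that are missing or differ after a restore.

    `manifest` maps file names to the SHA-256 recorded when the backup was taken.
    `restored` maps file names to the bytes recovered during the restore test.
    """
    failures = []
    for name, expected in manifest.items():
        data = restored.get(name)
        if data is None or checksum(data) != expected:
            failures.append(name)
    return sorted(failures)
```

An empty list means every file in the manifest came back intact. It does not prove the application works on the restored data, so it complements a full restore drill rather than replacing it.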

Log Everything. Review Something.

Security logging and monitoring failures are on the OWASP Top 10 because the problem is so common: either organizations do not log important events, or they log them but never review them.

The 2026 recommendations include centralizing logs, enabling access logging across APIs and CDNs, and building security checks into CI/CD. The aim is to minimize time-to-detection: the longer an attacker stays unnoticed in your environment, the worse the damage.
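"Review something" can start very small. The sketch below assumes your application writes one JSON object per log line with `event` and `ip` fields; that format and the field names are assumptions, not a standard.

```python
import json
from collections import Counter
from typing import Iterable


def noisy_sources(log_lines: Iterable[str], threshold: int = 5) -> dict[str, int]:
    """Count failed logins per IP and return the sources at or above `threshold`."""
    failures: Counter = Counter()
    for line in log_lines:
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip lines that are not structured events
        if isinstance(event, dict) and event.get("event") == "login_failed":
            failures[event.get("ip", "unknown")] += 1
    return {ip: count for ip, count in failures.items() if count >= threshold}
```

Even a check this simple, run on a schedule against centralized logs, shortens time-to-detection compared with logs nobody reads.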

The Part Most Articles Skip – Supply Chain and Front-End Risk

Your Dependencies Are Someone Else’s Problem (Until They’re Yours)

Recent high-profile supply chain incidents have put LLM supply chain security on the must-learn list, particularly as AI-generated code and AI-assisted development introduce new dependency patterns that are harder to audit by hand.

The classic supply chain problem, third-party libraries with unknown weaknesses, is well documented. The newer variant adds AI models trained on poisoned code, AI-generated packages that carry subtle backdoors, and LLM-assisted development that pulls in unvetted dependencies quickly.

Practical controls that matter right now:

- Software Bills of Materials (SBOMs) to track what is in your stack.

- Subresource Integrity (SRI) for external scripts.

- Signed releases and verified package sources.

- Ongoing monitoring of third-party library vulnerabilities (not only at install time).
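Of the controls above, Subresource Integrity is the quickest to show in code. An SRI value is the hash algorithm name, a hyphen, and the base64-encoded digest of the exact file contents. The helper names and the example URL are hypothetical.

```python
import base64
import hashlib
import html


def sri_hash(content: bytes, algorithm: str = "sha384") -> str:
    """Build an SRI integrity value such as 'sha384-<base64 digest>'."""
    if algorithm not in {"sha256", "sha384", "sha512"}:
        raise ValueError("SRI supports sha256, sha384, and sha512")
    digest = hashlib.new(algorithm, content).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


def script_tag(url: str, content: bytes) -> str:
    """Render a script tag pinned to the exact bytes of `content`."""
    return (
        f'<script src="{html.escape(url, quote=True)}" '
        f'integrity="{sri_hash(content)}" crossorigin="anonymous"></script>'
    )
```

You would call `script_tag("https://cdn.example.com/lib.js", file_bytes)` with the bytes of the version you reviewed. If the CDN later serves anything different, the browser refuses to run it.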

Single-Page Apps Have a Specific Problem Set

React, Vue, Angular: these frameworks put a lot of logic in the browser. That is convenient for UX and inconvenient for security. DOM-based XSS, insecure use of local storage, and leaky third-party scripts are common in SPAs that were not designed with security in mind.

The current advice is simple: enforce a strict Content-Security-Policy, use as few third-party scripts and iframes as possible, avoid inline JavaScript, and route all client-server traffic through secure, authenticated APIs.

During front-end audits, I found that many SPAs stored JWTs in localStorage, which any JavaScript running on the page can read. If you load a third-party analytics script that has been compromised, whoever compromised it has access to that token.
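One commonly suggested alternative, which is my addition rather than something the audits above prescribed, is to have the server deliver the session token in a cookie that page scripts cannot read. A sketch using Python's standard `http.cookies` module; the cookie name and lifetime are assumptions.

```python
from http.cookies import SimpleCookie


def session_cookie_header(token: str, max_age_seconds: int = 900) -> str:
    """Build a Set-Cookie header value for a session token.

    HttpOnly keeps the cookie out of reach of page JavaScript,
    Secure restricts it to HTTPS, and SameSite limits cross-site sends.
    """
    cookie = SimpleCookie()
    cookie["session"] = token
    morsel = cookie["session"]
    morsel["httponly"] = True
    morsel["secure"] = True
    morsel["samesite"] = "Strict"
    morsel["path"] = "/"
    morsel["max-age"] = max_age_seconds
    return morsel.OutputString()
```

This trades one risk for another: cookies are sent automatically, so CSRF defenses become necessary. It does, however, remove the "any script on the page can read the token" problem.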

GitHub Security Isn’t Just About Private Repos

GitHub security practices deserve more than an appendix, because version control is too central to be treated as an afterthought.

Secrets accidentally exposed in repos, such as API keys, database credentials, and private keys, are among the most exploited weaknesses in developer environments. GitHub’s built-in secret scanning catches many of these, but it is reactive. Pre-commit hooks are more effective because they stop secrets from being committed in the first place.
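The core of a pre-commit secret check is just pattern matching over the text about to be committed. This is a deliberately small sketch: the three patterns are illustrative assumptions, far from a complete rule set, and dedicated scanners cover many more formats.

```python
import re

# Illustrative patterns only; real scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "AWS access key ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hard-coded credential": re.compile(
        r"(?i)\b(?:api_key|secret|password)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern label) for each suspicious line in `text`."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings
```

Wired into a pre-commit hook, a non-empty result would abort the commit, so the secret never reaches the repository or its history.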

Beyond secret management:

- Turn on Dependabot alerts and automatic pull requests for vulnerable dependencies.

- Apply branch protection rules to require code review before anything merges to main.

- Periodically audit every third-party GitHub Action you use; a compromised Action in your CI/CD pipeline can access your entire build environment.

- Rotate any credentials that have ever been exposed in a public or semi-public repo.

As I have learned, developers sometimes do not realize that a secret they committed is still there months later: even after it is removed from the repo, it remains in the git history and can be recovered within minutes.

Zero Trust Isn’t a Product – It’s a Mindset

Zero-trust security assumes no implicit trust based on network location. For websites and web applications, that means: do not trust traffic just because it is internal, verify the context of every request, require MFA for all privileged access, and separate management planes from application planes.

This isn’t just an enterprise concern anymore. Remote access, distributed teams, and cloud-hosted infrastructure make the old “inside = safe” perimeter model useless for virtually every organization.
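To illustrate "verify every request, even internal ones", here is a sketch of HMAC request signing between two services. It is one possible mechanism among several (mutual TLS and signed tokens are others); the message format and function names are assumptions.

```python
import hashlib
import hmac


def sign(secret: bytes, method: str, path: str, timestamp: int) -> str:
    """Sign the request method, path, and timestamp with a shared secret."""
    message = f"{method}\n{path}\n{timestamp}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def verify(secret: bytes, method: str, path: str, timestamp: int,
           signature: str, now: int, max_skew_seconds: int = 300) -> bool:
    """Accept a request only if it is recent and its signature matches."""
    if abs(now - timestamp) > max_skew_seconds:
        return False  # stale or future-dated: possible replay
    expected = sign(secret, method, path, timestamp)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature)
```

The receiving service runs `verify` on every call, no matter which network the request arrived from, which is the practical meaning of not trusting "internal" traffic.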

Cloud Infrastructure Is a New Attack Surface Most Teams Underestimate

Kubernetes misconfigurations, overly permissive IAM roles, publicly accessible storage buckets: these cloud-native issues now rank near the top of the list of breach vectors. The response is Infrastructure-as-Code (IaC) scanning, Kubernetes hardening, and CNAPP-style platforms (Cloud-Native Application Protection Platforms).

The catch: even your security controls can be misconfigured. A WAF that blocks legitimate traffic, a CSP header that breaks your own app, an IAM policy that is too permissive or too restrictive: these are real things that go wrong.

The answer is not fewer controls. It is better tooling, frequent audits, and treating security configuration as seriously as application code.
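Treating configuration like code means it can be checked like code. As a small example of what an IaC scanner looks for, this sketch flags AWS-style IAM policy statements that allow wildcard actions on all resources. The function name is mine, and real scanners check far more than this one rule.

```python
def risky_statements(policy: dict) -> list[dict]:
    """Return Allow statements that pair wildcard actions with Resource '*'."""
    statements = policy.get("Statement", [])
    if isinstance(statements, dict):
        statements = [statements]  # a single statement may be given as an object

    risky = []
    for statement in statements:
        if statement.get("Effect") != "Allow":
            continue
        actions = statement.get("Action", [])
        resources = statement.get("Resource", [])
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        if wildcard_action and "*" in resources:
            risky.append(statement)
    return risky
```

Run against policy files in CI, a non-empty result can fail the build before an overly permissive role ever reaches production.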

AI on Both Sides of the Fight

AI has changed the dynamics of web security without altering the basics. Generative AI lets attackers craft more persuasive phishing, find vulnerabilities faster, and exploit them at scale. Defenders use AI to detect anomalies, produce context-aware risk scores, and reduce false positives.

The implication for most organizations: speed and automation matter more than ever. If your only incident response is reading through logs days after an attack, AI-assisted attackers will already have done a great deal of damage.

AI-assisted defense has its own failure mode, though: alert fatigue. Too many signals, too many tools, not enough context. The 2026 guidance is to use AI to cut noise and focus on what is actually exploitable and business-critical, instead of building more dashboards that no one will look at.

Where to Learn This Without Paying a Fortune

The best free resources are genuinely excellent right now:

- OWASP Top 10 + WebGoat + Juice Shop – Juice Shop is a deliberately vulnerable Node.js application that you can safely and legally exploit to see what an attack really looks like. Hands-on learning sticks better than a manual.

- PortSwigger Web Security Academy – Built by the team behind Burp Suite. Covers everything from basic SQL injection to advanced OAuth vulnerabilities through interactive labs. Frequently recommended because it genuinely works.

- NIST SP 800-53 – Dense, but it is the policy-level reference that maps to AWS, Azure, and Google Cloud controls. Useful when building a security program rather than fixing individual problems.

- Google Cloud Web Security Best Practices – Practical advice on security headers, load balancer settings, and CDN security that applies beyond Google Cloud.

Who Should Actually Be Reading This

If you are a developer, the most worthwhile investment is understanding how vulnerabilities actually work, not just what to patch. That happens in the Juice Shop and PortSwigger labs.

If you are a non-technical site owner, a good place to start is managed hosting that includes WAF and DDoS protection, applies updates automatically, and enforces MFA on all admin accounts. That alone covers a major portion of OWASP-style risks.

If you are building a security program in a larger organization, focus on integrating security into CI/CD, 24/7 API monitoring, supply chain protection, and AI-assisted triage, while keeping humans involved in the major decisions.

The Honest Take

Improving website security in 2026 is not about having the most advanced tools. It is about applying the fundamentals consistently and adding newer controls selectively, based on your actual architecture.

The fundamentals (patching, HTTPS, MFA, input validation, access control, backups, logging) are still applied inconsistently across the industry. And that is where the majority of breaches occur. The newer controls (zero trust, AI detection, supply chain security, cloud posture management) matter more as your attack surface and regulatory exposure grow.

Start where you are. Fix what’s broken. Then build forward.